1. Introduction to Linear Transformations

A linear transformation is a mathematical function that maps vectors from one vector space to another while preserving the structure of vector addition and scalar multiplication. Linear transformations are fundamental concepts in linear algebra and play an important role in mathematics, physics, computer science, and engineering.

In simple terms, a linear transformation changes vectors in a predictable and structured way. It may stretch, shrink, rotate, reflect, or shear vectors, but it does so while maintaining the linear relationships between them.

Linear transformations help us understand how mathematical systems behave under transformations. They are used to model physical processes, manipulate geometric objects, analyze data, and solve complex mathematical problems.

In mathematics, linear transformations are usually represented using matrices. Matrix multiplication provides a convenient way to compute how vectors change under transformations.

For example, if a transformation T acts on a vector v, we write:

T(v)

or

Av

where A is the transformation matrix.

Linear transformations are essential in many modern technologies, including computer graphics, robotics, machine learning, signal processing, and control systems.

2. Definition of Linear Transformation

A linear transformation is a function between vector spaces that satisfies two important properties:

- Additivity (preservation of addition)

- Homogeneity (preservation of scalar multiplication)

Mathematically:

T(u + v) = T(u) + T(v)

T(cu) = cT(u)

Where:

- T is the transformation

- u and v are vectors

- c is a scalar

If both conditions hold for all vectors u and v and all scalars c, the transformation is linear.
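The two conditions above can be spot-checked numerically. The sketch below tests them for the scaling map T(x, y) = (2x, 3y) and for a shifted map S(x, y) = (x + 1, y), which is a hypothetical counterexample chosen for illustration. The helper names (`add`, `scale`, `looks_linear`) are assumptions, not part of any library. Passing the check for one choice of u, v, and c does not prove linearity; failing it does prove that a map is not linear.

```python
def T(v):
    """The scaling map T(x, y) = (2x, 3y)."""
    x, y = v
    return (2 * x, 3 * y)

def S(v):
    """A shifted map S(x, y) = (x + 1, y); illustrative counterexample."""
    x, y = v
    return (x + 1, y)

def add(u, v):
    """Component-wise vector addition in the plane."""
    return (u[0] + v[0], u[1] + v[1])

def scale(c, v):
    """Multiply the vector v by the scalar c."""
    return (c * v[0], c * v[1])

def looks_linear(f, u, v, c):
    """Check additivity and homogeneity for one choice of u, v, c."""
    additive = f(add(u, v)) == add(f(u), f(v))
    homogeneous = f(scale(c, u)) == scale(c, f(u))
    return additive and homogeneous

u, v, c = (1, 2), (3, -1), 4
print(looks_linear(T, u, v, c))  # True
print(looks_linear(S, u, v, c))  # False
```

S fails because it moves the origin: S(0, 0) = (1, 0), whereas a linear map must send the zero vector to the zero vector.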

3. Vector Spaces and Linear Transformations

Linear transformations operate between vector spaces.

Example:

T : V → W

Where:

V = domain vector space

W = codomain vector space

This means the transformation maps vectors from space V to space W.

Example:

T(x, y) = (2x, 3y)

This transformation scales the x-coordinate by 2 and the y-coordinate by 3.

4. Matrix Representation of Linear Transformations

Every linear transformation between finite-dimensional vector spaces can be represented by a matrix once a basis is chosen for each space.

If vector:

x = (x₁, x₂)

and matrix:

A =

[ a₁₁ a₁₂ ]

[ a₂₁ a₂₂ ]

Then the transformation is:

T(x) = Ax

Matrix multiplication produces the transformed vector.

Example:

A =

[2 0]

[0 3]

Vector:

v = (1,2)

Result:

T(v) = Av = (2·1 + 0·2, 0·1 + 3·2) = (2,6)

This transformation stretches vectors by a factor of 2 along the x-axis and by a factor of 3 along the y-axis.
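The matrix-vector product in this example can be reproduced with a few lines of plain Python. This is a minimal sketch; the function name `matvec` and the list-of-rows matrix layout are assumptions made for illustration.

```python
def matvec(A, x):
    """Multiply matrix A (given as a list of rows) by the vector x."""
    return [sum(a * xi for a, xi in zip(row, x)) for row in A]

A = [[2, 0],
     [0, 3]]
v = [1, 2]

print(matvec(A, v))  # [2, 6]
```

Each output component is the dot product of one row of A with v, which is exactly how Av is computed by hand.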

5. Geometric Interpretation

Linear transformations can change vectors in different ways:

- Scaling

- Rotation

- Reflection

- Shearing

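However, every linear transformation fixes the origin, because T(0) = T(0·v) = 0·T(v) = 0, and it sends straight lines to straight lines.

Important properties:

- Lines remain lines (or collapse to a single point if the transformation is not invertible)

- Parallel lines remain parallel

- Origin stays fixed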

6. Types of Linear Transformations

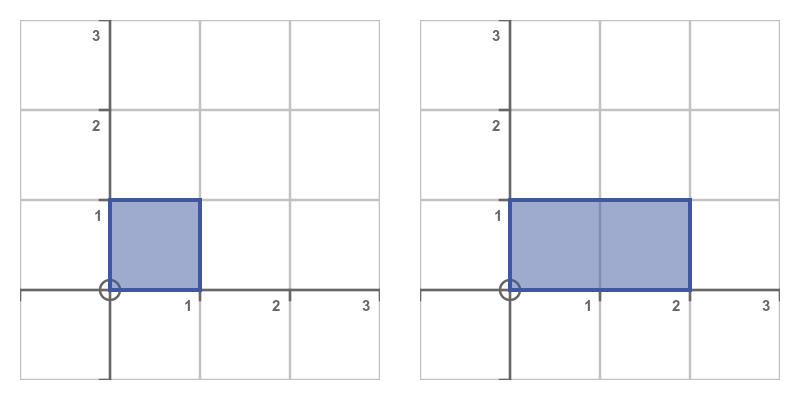

Scaling Transformation

Scaling changes the size of vectors.

Example:

T(x,y) = (2x,3y)

Matrix:

[2 0]

[0 3]

Vectors stretch differently in each direction.

Rotation Transformation

Rotation turns vectors around the origin by an angle θ, counterclockwise for positive θ.

Matrix:

[ cosθ −sinθ ]

[ sinθ cosθ ]

Example:

Rotation by 90° (θ = 90°, so cos θ = 0 and sin θ = 1) sends the vector (1, 0) to (0, 1).
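As a quick check of the rotation matrix, the sketch below builds it for θ = 90° and applies it to the vector (1, 0). The names `rotation_matrix` and `matvec` are illustrative assumptions. Results are rounded because cos(π/2) is not exactly zero in floating-point arithmetic.

```python
import math

def rotation_matrix(theta):
    """2x2 matrix for a counterclockwise rotation by theta radians."""
    c, s = math.cos(theta), math.sin(theta)
    return [[c, -s],
            [s, c]]

def matvec(A, x):
    """Multiply matrix A (list of rows) by the vector x."""
    return [sum(a * xi for a, xi in zip(row, x)) for row in A]

R = rotation_matrix(math.pi / 2)  # 90 degrees
rotated = matvec(R, [1, 0])

# Round away tiny floating-point error before printing.
print([round(t, 10) for t in rotated])  # [0.0, 1.0]
```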

Reflection Transformation

Reflection flips vectors across a line.

Reflection across x-axis:

[1 0]

[0 −1]

Reflection across y-axis:

[-1 0]

[0 1]

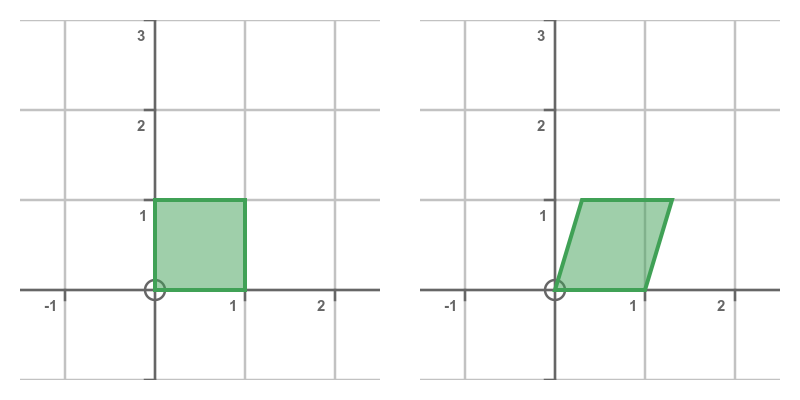

Shear Transformation

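A shear transformation slants shapes by shifting each point parallel to one axis by an amount proportional to its coordinate along the other axis. The matrix below is a horizontal shear, which maps (x, y) to (x + ky, y).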

Matrix:

[1 k]

[0 1]

Shears are used in computer graphics.
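To see the slanting effect, the following sketch applies the shear matrix with k = 2 to the corners of the unit square. The function name `shear` and the choice k = 2 are assumptions for illustration.

```python
def shear(k, point):
    """Apply the shear matrix [[1, k], [0, 1]] to a point (x, y)."""
    x, y = point
    return (x + k * y, y)

# Corners of the unit square, listed counterclockwise.
square = [(0, 0), (1, 0), (1, 1), (0, 1)]
sheared = [shear(2, p) for p in square]

print(sheared)  # [(0, 0), (1, 0), (3, 1), (2, 1)]
```

The bottom edge (y = 0) does not move, while the top edge (y = 1) slides 2 units to the right, turning the square into a parallelogram.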

7. Identity Transformation

The identity transformation leaves vectors unchanged.

Matrix:

[1 0]

[0 1]

T(v) = v

8. Zero Transformation

The zero transformation maps every vector to zero.

T(v) = 0

Matrix:

[0 0]

[0 0]

9. Composition of Transformations

Two transformations can be combined by applying one after the other.

If:

T₁(v) = A v

T₂(v) = B v

Then composition:

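T₂(T₁(v)) = BA v

This corresponds to matrix multiplication. The matrix of the transformation applied first (A) appears on the right, and the order matters because BA and AB are generally different.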
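The sketch below illustrates composition with a concrete pair of matrices: A is the scaling matrix used earlier and B is a 90° rotation. Both the choice of matrices and the helper names `matvec` and `matmul` are assumptions for illustration.

```python
def matvec(A, x):
    """Multiply matrix A (list of rows) by the vector x."""
    return [sum(a * xi for a, xi in zip(row, x)) for row in A]

def matmul(A, B):
    """Matrix product AB for matrices given as lists of rows."""
    return [[sum(A[i][k] * B[k][j] for k in range(len(B)))
             for j in range(len(B[0]))]
            for i in range(len(A))]

A = [[2, 0], [0, 3]]   # T1: scale x by 2 and y by 3
B = [[0, -1], [1, 0]]  # T2: rotate by 90 degrees
v = [1, 2]

step_by_step = matvec(B, matvec(A, v))  # apply T1, then T2
combined = matvec(matmul(B, A), v)      # single matrix BA
swapped = matvec(matmul(A, B), v)       # AB: the opposite order

print(step_by_step)  # [-6, 2]
print(combined)      # [-6, 2]
print(swapped)       # [-4, 3]
```

Applying the two maps in sequence gives the same result as multiplying by BA once, while AB gives a different vector.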

10. Kernel of a Linear Transformation

The kernel of a transformation is the set of vectors mapped to zero.

Kernel:

Ker(T) = {v | T(v) = 0}

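The kernel measures how much information the transformation loses: every vector in the kernel is collapsed to zero, and T is one-to-one exactly when the kernel contains only the zero vector.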

11. Image of a Transformation

The image (or range) is the set of all outputs.

Image:

Im(T) = {T(v) | v ∈ V}

This is the set of all vectors in W that the transformation can produce.

12. Rank and Nullity

Two important concepts are:

Rank = dimension of image

Nullity = dimension of kernel

For a transformation T : V → W, these are related by:

Rank + Nullity = dimension of the domain V

This is called the Rank–Nullity Theorem.
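A small worked example may help. The sketch below computes the rank of the matrix [[1, 2], [2, 4]] by Gaussian elimination with exact fractions, then checks that the vector (2, −1) lies in the kernel. The matrix, the kernel vector, and the function names are illustrative assumptions.

```python
from fractions import Fraction

def matvec(A, x):
    """Multiply matrix A (list of rows) by the vector x."""
    return [sum(a * xi for a, xi in zip(row, x)) for row in A]

def rank(A):
    """Rank of A via Gaussian elimination with exact arithmetic."""
    M = [[Fraction(x) for x in row] for row in A]
    rows, cols = len(M), len(M[0])
    r = 0  # index of the next pivot row
    for c in range(cols):
        # Find a row at or below r with a nonzero entry in column c.
        pivot = next((i for i in range(r, rows) if M[i][c] != 0), None)
        if pivot is None:
            continue
        M[r], M[pivot] = M[pivot], M[r]
        # Eliminate the entries below the pivot.
        for i in range(r + 1, rows):
            factor = M[i][c] / M[r][c]
            M[i] = [a - factor * b for a, b in zip(M[i], M[r])]
        r += 1
        if r == rows:
            break
    return r

A = [[1, 2],
     [2, 4]]
domain_dim = len(A[0])  # number of columns = dimension of the domain

print(rank(A))             # 1
print(matvec(A, [2, -1]))  # [0, 0], so (2, -1) is in the kernel
```

Here the second row is twice the first, so the rank is 1. The kernel is the line spanned by (2, −1), so the nullity is also 1, and 1 + 1 = 2 matches the dimension of the domain.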

13. Invertible Transformations

A transformation is invertible if there exists another transformation that reverses it.

Example:

If

T(v) = Av

Then inverse:

T⁻¹(v) = A⁻¹v

Condition (A must be a square matrix):

det(A) ≠ 0
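For a 2×2 matrix the inverse has a simple closed form, which the sketch below uses to undo the scaling transformation from the earlier example. The function names are assumptions for illustration, and exact fractions are used to avoid rounding error.

```python
from fractions import Fraction

def matvec(A, x):
    """Multiply matrix A (list of rows) by the vector x."""
    return [sum(a * xi for a, xi in zip(row, x)) for row in A]

def inverse_2x2(A):
    """Inverse of a 2x2 matrix [[a, b], [c, d]], if it exists."""
    (a, b), (c, d) = A
    det = a * d - b * c
    if det == 0:
        raise ValueError("det(A) = 0, so A has no inverse")
    det = Fraction(det)
    return [[d / det, -b / det],
            [-c / det, a / det]]

A = [[2, 0],
     [0, 3]]
A_inv = inverse_2x2(A)

w = matvec(A, [1, 2])    # forward: (1, 2) -> (2, 6)
back = matvec(A_inv, w)  # inverse: (2, 6) -> (1, 2)

print([int(x) for x in back])  # [1, 2]
```

Calling `inverse_2x2` on a matrix with zero determinant, such as [[1, 2], [2, 4]], raises an error, which reflects the condition det(A) ≠ 0.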

14. Eigenvalues and Linear Transformations

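Eigenvalues describe how a transformation scales certain special vectors, called eigenvectors.

Equation:

Av = λv

Where:

λ = eigenvalue

v = eigenvector (a nonzero vector)

Eigenvectors stay on the same line through the origin after transformation: they are only scaled by λ, and flipped if λ is negative.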
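For a 2×2 matrix, the eigenvalues are the roots of the characteristic polynomial λ² − (trace)λ + det = 0. The sketch below applies this to the matrix [[2, 1], [1, 2]], chosen here as an illustrative example, and then verifies Av = λv for its eigenvectors (1, 1) and (1, −1). The function names are assumptions, and matrices with complex eigenvalues are deliberately not handled.

```python
import math

def matvec(A, x):
    """Multiply matrix A (list of rows) by the vector x."""
    return [sum(a * xi for a, xi in zip(row, x)) for row in A]

def eigenvalues_2x2(A):
    """Real eigenvalues of a 2x2 matrix from its characteristic polynomial."""
    (a, b), (c, d) = A
    trace, det = a + d, a * d - b * c
    disc = trace * trace - 4 * det
    if disc < 0:
        raise ValueError("complex eigenvalues are not handled in this sketch")
    root = math.sqrt(disc)
    return ((trace + root) / 2, (trace - root) / 2)

A = [[2, 1],
     [1, 2]]

print(eigenvalues_2x2(A))   # (3.0, 1.0)
print(matvec(A, [1, 1]))    # [3, 3]  = 3 * (1, 1)
print(matvec(A, [1, -1]))   # [1, -1] = 1 * (1, -1)
```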

15. Diagonalization

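Some transformations can be simplified using diagonal matrices.

A = PDP⁻¹

Where:

D is a diagonal matrix containing the eigenvalues of A, and the columns of P are the corresponding eigenvectors.

This simplifies repeated transformations, because Aᵏ = PDᵏP⁻¹ and powers of a diagonal matrix are computed entry by entry.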
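To illustrate why diagonalization helps with repeated transformations, the sketch below computes the fifth power of the matrix [[2, 1], [1, 2]] in two ways: through its diagonal form and by repeated multiplication. The matrix, its eigenvector matrix P, and the helper name `matmul` are assumptions chosen for illustration; its eigenvalues are 3 and 1.

```python
from fractions import Fraction

def matmul(A, B):
    """Matrix product AB for matrices given as lists of rows."""
    return [[sum(A[i][k] * B[k][j] for k in range(len(B)))
             for j in range(len(B[0]))]
            for i in range(len(A))]

A = [[2, 1],
     [1, 2]]

# Columns of P are the eigenvectors (1, 1) and (1, -1).
P = [[1, 1],
     [1, -1]]
half = Fraction(1, 2)
P_inv = [[half, half],
         [half, -half]]

k = 5
# Powers of a diagonal matrix: raise each diagonal entry to the k-th power.
D_k = [[3 ** k, 0],
       [0, 1 ** k]]
via_diag = matmul(matmul(P, D_k), P_inv)

# Direct computation by multiplying A together k times.
direct = [[1, 0], [0, 1]]
for _ in range(k):
    direct = matmul(direct, A)

print([[int(x) for x in row] for row in via_diag])  # [[122, 121], [121, 122]]
print(via_diag == direct)                           # True
```

The diagonal route needs only two matrix products regardless of k, whereas the direct route needs one product per power.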

16. Linear Transformations in Geometry

In geometry, linear transformations manipulate shapes.

Examples:

- Rotating objects

- Scaling images

- Reflecting shapes

These operations are used extensively in computer graphics.

17. Linear Transformations in Physics

Physics uses transformations to describe physical systems.

Examples include:

- rotation of coordinate systems

- Lorentz transformations in relativity

- quantum operators

These transformations help describe physical laws.

18. Applications in Computer Graphics

Linear transformations are essential in:

- 3D modeling

- video games

- animation

- virtual reality

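Transformations allow objects to move, rotate, and scale. Rotation and scaling are linear transformations; translation (moving an object) is not linear, so graphics systems typically handle it with homogeneous coordinates, which express it as a matrix product in one higher dimension.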

19. Applications in Machine Learning

In machine learning, transformations are used to:

- project data into new spaces

- reduce dimensions

- analyze features

Algorithms such as PCA rely on linear transformations.

20. Applications in Signal Processing

Signals can be represented as vectors.

Transformations help analyze signals using:

- Fourier transforms

- wavelet transforms
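The discrete Fourier transform (DFT) is itself a linear transformation: it can be written as a matrix applied to the signal vector. The sketch below builds that matrix for a 4-sample signal and applies it to a constant signal. The sign convention in the exponent, the lack of normalization, and the function names are assumptions for illustration; practical code would use a fast Fourier transform routine instead.

```python
import cmath

def matvec(A, x):
    """Multiply matrix A (list of rows) by the vector x."""
    return [sum(a * xi for a, xi in zip(row, x)) for row in A]

def dft_matrix(n):
    """n x n DFT matrix with entries w**(j*k), where w = exp(-2*pi*i/n)."""
    w = cmath.exp(-2j * cmath.pi / n)
    return [[w ** (j * k) for k in range(n)] for j in range(n)]

signal = [1, 1, 1, 1]  # a constant signal
spectrum = matvec(dft_matrix(4), signal)

# Print magnitudes, rounded to hide floating-point noise.
print([round(abs(z), 10) for z in spectrum])  # [4.0, 0.0, 0.0, 0.0]
```

A constant signal contains only the zero-frequency component, so all of its energy appears in the first entry of the spectrum.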

21. Linear Transformations in Robotics

Robots rely on transformations to calculate:

- arm movements

- rotations

- coordinate conversions

Matrices help describe robot motion.

22. Importance of Linear Transformations

Linear transformations are powerful tools for understanding how vector spaces change under mathematical operations.

They allow mathematicians and scientists to model real-world processes efficiently.

They help simplify complex systems, analyze data, and perform geometric manipulations.

Conclusion

Linear transformations are fundamental operations in linear algebra that describe how vectors move or change within vector spaces. By preserving vector addition and scalar multiplication, these transformations maintain the underlying structure of vector spaces while allowing changes such as scaling, rotation, reflection, and shear.

Representing linear transformations using matrices makes calculations efficient and allows them to be applied to complex systems. Linear transformations form the foundation for many important mathematical concepts including eigenvalues, eigenvectors, and matrix diagonalization.

Their applications extend across many fields such as computer graphics, physics, engineering, robotics, machine learning, and signal processing. In modern technology, linear transformations are essential tools for manipulating data, modeling systems, and solving scientific problems.

Understanding linear transformations is therefore crucial for anyone studying advanced mathematics, data science, engineering, or computer science.