Introduction

Entropy and microstates are fundamental concepts in thermodynamics and statistical mechanics that describe the microscopic behavior of physical systems and how this behavior determines macroscopic properties such as temperature, pressure, and energy.

Entropy is often described as a measure of disorder, randomness, or uncertainty within a physical system. However, in modern physics, entropy has a more precise meaning: it quantifies the number of possible microscopic configurations (microstates) that correspond to a particular macroscopic state of a system.

The concept of entropy connects thermodynamics with statistical mechanics. Thermodynamics deals with macroscopic quantities such as temperature and pressure, while statistical mechanics explains these properties by analyzing the microscopic motion of particles such as atoms and molecules.

The relationship between entropy and microstates was first expressed by the Austrian physicist Ludwig Boltzmann, whose famous equation is:

\[
S = k \ln \Omega
\]

Where:

- \(S\) = entropy

- \(k\) = Boltzmann constant

- \(\Omega\) = number of microstates

This equation shows that entropy increases as the number of possible microscopic arrangements increases.

Entropy plays an essential role in many areas of physics and science, including:

- Thermodynamics

- Statistical mechanics

- Information theory

- Cosmology

- Chemical thermodynamics

Understanding entropy and microstates provides deep insight into the behavior of physical systems and the fundamental laws governing energy and matter.

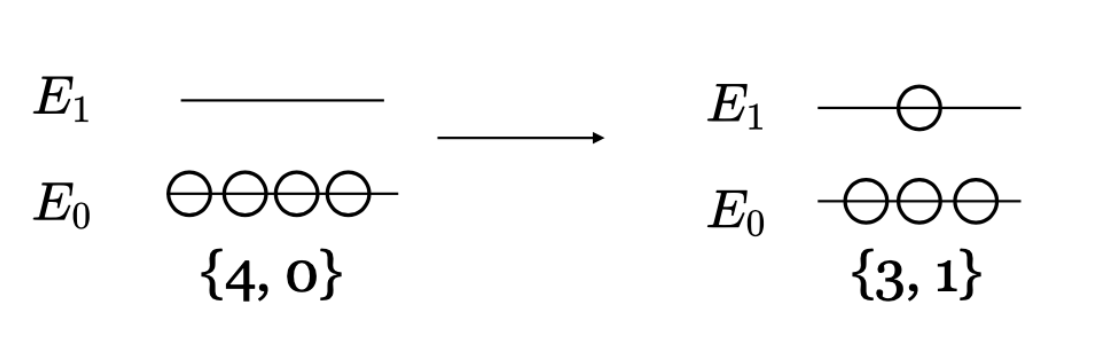

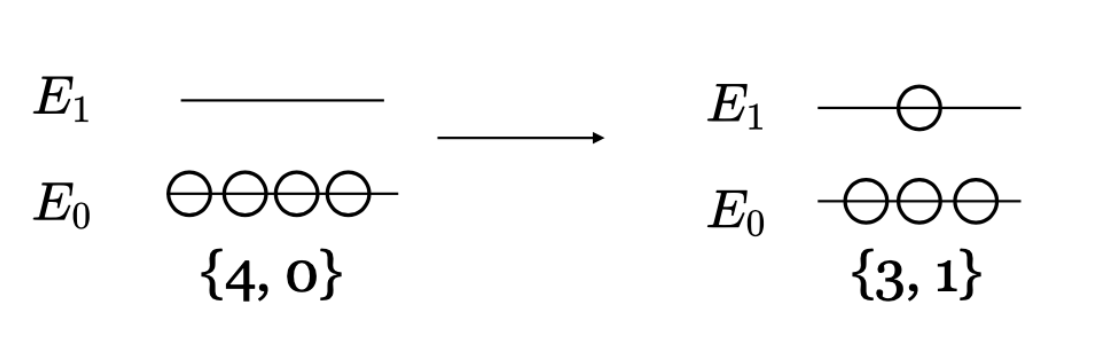

Macrostates and Microstates

To understand entropy, it is necessary to distinguish between macrostates and microstates.

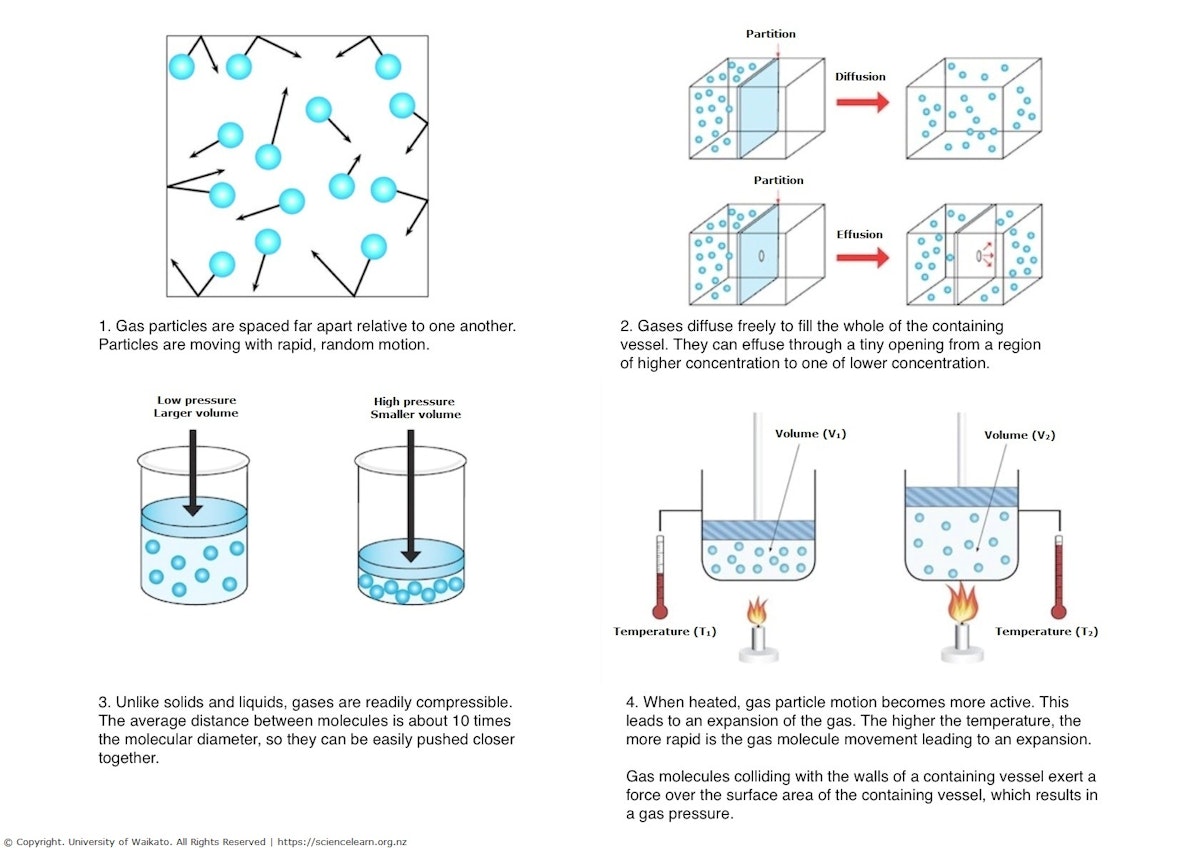

Macrostate

A macrostate describes the overall properties of a system using macroscopic variables such as:

- Temperature

- Pressure

- Volume

- Energy

Example:

A container of gas at a certain temperature and pressure represents a macrostate.

Microstate

A microstate describes the exact configuration of all particles in the system, including:

- Positions of particles

- Velocities of particles

- Energy distribution

Many different microstates can correspond to the same macrostate.

The number of microstates compatible with a given macrostate determines the entropy of that macrostate.
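The counting described above can be sketched with a toy system that is not part of the original discussion: N two-state particles (coins, for instance), where a microstate is the exact sequence of states and the macrostate is only the number of particles in state 1. The function name `count_microstates` is illustrative.

```python
from itertools import product
from math import comb

def count_microstates(n_particles):
    """Group every microstate of n two-state particles by its macrostate.

    A microstate is a tuple such as (1, 0, 1, 1); the macrostate is the
    number of particles in state 1. Returns {macrostate: multiplicity}.
    """
    counts = {}
    for microstate in product((0, 1), repeat=n_particles):
        macrostate = sum(microstate)
        counts[macrostate] = counts.get(macrostate, 0) + 1
    return counts

multiplicities = count_microstates(4)
for n_up, omega in sorted(multiplicities.items()):
    # The brute-force count agrees with the binomial coefficient C(4, n_up).
    print(n_up, omega, comb(4, n_up))
```

Even with four particles, the balanced macrostate (two particles in each state) has six microstates while the extreme macrostates have one each; the imbalance grows rapidly with N.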

Boltzmann’s Entropy Formula

The relationship between entropy and microstates is expressed by Boltzmann’s equation:

\[
S = k \ln \Omega
\]

Where:

- \(S\) = entropy

- \(k\) = Boltzmann constant (\(1.38 \times 10^{-23}\ \mathrm{J/K}\))

- \(\Omega\) = number of microstates

This equation shows that entropy depends on the number of microscopic configurations available to the system.

Important implications include:

- Isolated systems tend to evolve toward macrostates with larger numbers of microstates.

- Higher entropy corresponds, loosely speaking, to greater disorder.

Boltzmann’s equation is one of the most important formulas in statistical physics.
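As a small numerical illustration of the formula, the sketch below evaluates \(S = k \ln \Omega\) for a few microstate counts. The helper name `boltzmann_entropy` and the example values of \(\Omega\) are assumptions chosen for illustration only.

```python
import math

K_B = 1.380649e-23  # Boltzmann constant in J/K (exact SI value)

def boltzmann_entropy(omega):
    """Return S = k ln(omega) in J/K for a positive microstate count."""
    if omega < 1:
        raise ValueError("omega must be at least 1")
    return K_B * math.log(omega)

print(boltzmann_entropy(1))        # a single microstate gives zero entropy
print(boltzmann_entropy(10**20))   # S grows only logarithmically with omega

# Microstate counts of independent subsystems multiply, so entropies add:
# S(omega_a * omega_b) = S(omega_a) + S(omega_b)
s_combined = boltzmann_entropy(6 * 4)
s_sum = boltzmann_entropy(6) + boltzmann_entropy(4)
print(math.isclose(s_combined, s_sum))
```

The logarithm is what makes entropy additive: combining two independent systems multiplies their microstate counts but adds their entropies.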

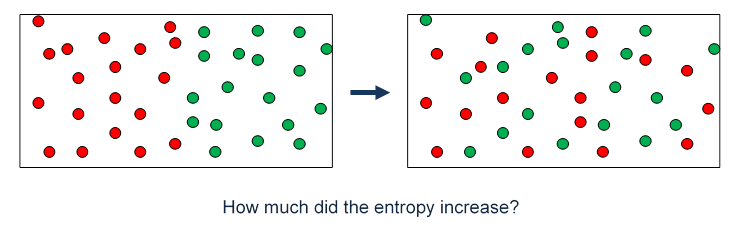

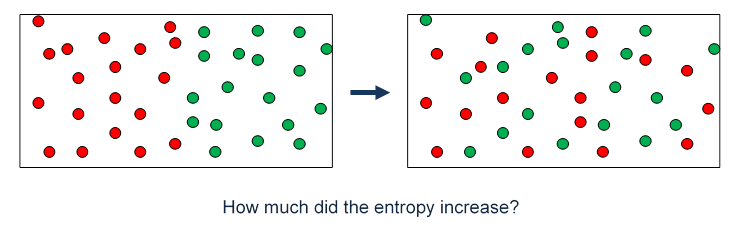

Entropy and Probability

Entropy is closely related to probability.

If all accessible microstates are equally likely, a macrostate that corresponds to many microstates is more probable than one that corresponds to few.

For example:

- A gas evenly distributed in a container has many microstates.

- A gas confined to one corner has far fewer microstates.

Therefore, the evenly distributed state is far more likely.

This explains why systems naturally evolve toward states of maximum entropy.
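The gas example can be made quantitative with a simplified model, assumed here for illustration: each of N particles is independently equally likely to be in the left or right half of the container, so every left/right assignment is an equally probable microstate. The function name `probability_of_split` is hypothetical.

```python
from math import comb

def probability_of_split(n_particles, n_left):
    """Probability that exactly n_left of n particles are in the left half.

    Assumes each particle is independently equally likely to be in either
    half, so all 2**n left/right assignments are equally probable.
    """
    return comb(n_particles, n_left) / 2**n_particles

n = 100
print(probability_of_split(n, n))        # all particles on one side
print(probability_of_split(n, n // 2))   # exactly even split
# Probability that the left half holds between 40 and 60 particles
print(sum(probability_of_split(n, k) for k in range(40, 61)))
```

Already at 100 particles the fully confined state is suppressed by a factor of 2^100 relative to certainty; for a macroscopic gas with roughly 10^23 particles it is never observed in practice.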

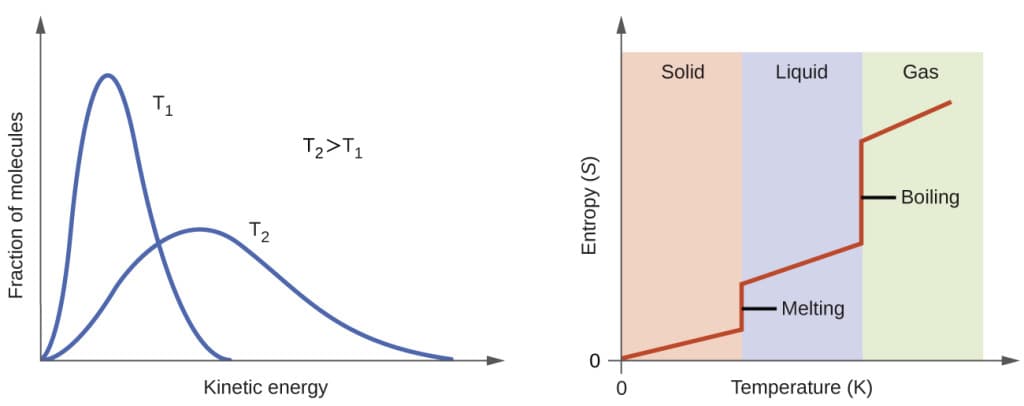

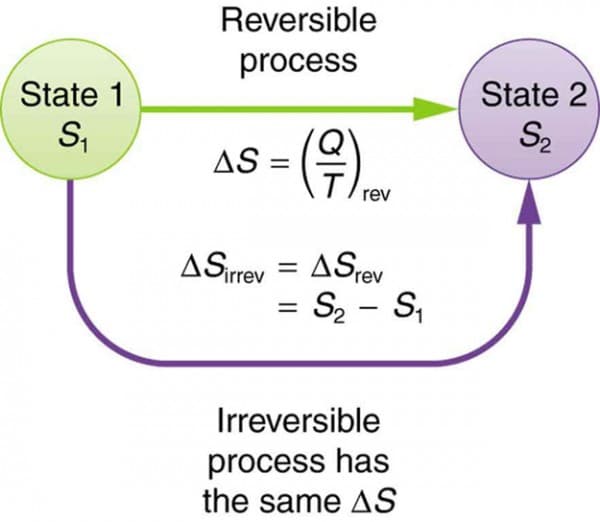

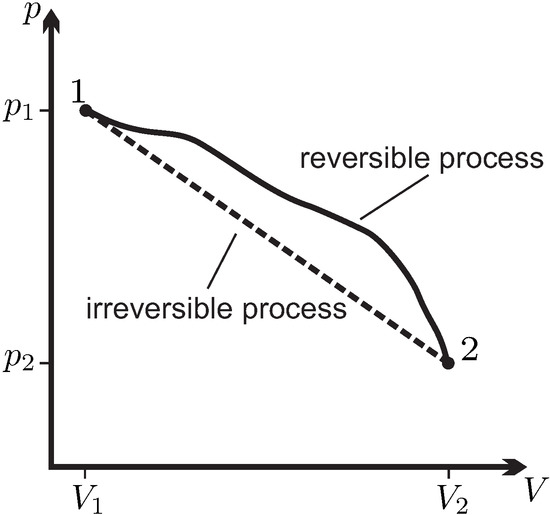

Entropy in Thermodynamics

In classical thermodynamics, entropy is a state function defined through reversible heat transfer. It is often interpreted as a measure of how widely energy is dispersed.

The change in entropy is given by:

\[
dS = \frac{\delta Q_{\mathrm{rev}}}{T}
\]

Where:

- \(dS\) = infinitesimal change in entropy

- \(\delta Q_{\mathrm{rev}}\) = heat added reversibly

- \(T\) = absolute temperature

This equation applies to reversible processes.
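When heat is added reversibly at a constant temperature, the relation integrates to \(\Delta S = Q / T\). The sketch below applies it to melting ice. The latent heat value is an approximate textbook figure, and the constant and function names are assumptions made for this example.

```python
LATENT_HEAT_FUSION_WATER = 3.34e5  # J/kg, approximate textbook value
MELTING_POINT_WATER = 273.15       # K, at atmospheric pressure

def entropy_change_isothermal(heat_joules, temperature_kelvin):
    """Entropy change Q / T for heat added reversibly at constant T."""
    if temperature_kelvin <= 0:
        raise ValueError("temperature must be positive (kelvin)")
    return heat_joules / temperature_kelvin

mass_kg = 1.0
heat = mass_kg * LATENT_HEAT_FUSION_WATER
delta_s = entropy_change_isothermal(heat, MELTING_POINT_WATER)
print(f"{delta_s:.0f} J/K")  # roughly 1.2e3 J/K for one kilogram of ice
```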

The second law of thermodynamics states:

The total entropy of an isolated system never decreases: it increases in irreversible processes and remains constant in reversible ones.

This law explains why certain processes, such as heat flowing from a hot object to a cold one, occur spontaneously while the reverse does not.
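A minimal sketch of the second law at work, assuming two reservoirs large enough that their temperatures stay fixed while a quantity of heat Q passes between them. The function name and the numbers are illustrative.

```python
def total_entropy_change(heat_joules, t_hot, t_cold):
    """Total entropy change when heat flows from a hot to a cold reservoir.

    Both reservoirs are assumed large enough that their temperatures
    (in kelvin) stay constant. The hot one loses Q / t_hot and the cold
    one gains Q / t_cold.
    """
    return -heat_joules / t_hot + heat_joules / t_cold

# 1000 J flowing from 400 K to 300 K: total entropy increases.
print(total_entropy_change(1000.0, 400.0, 300.0))
# The reverse flow (cold to hot) would lower total entropy, so it does
# not happen spontaneously.
print(total_entropy_change(-1000.0, 400.0, 300.0))
```

Because the cold reservoir gains more entropy than the hot one loses, the total is positive whenever heat flows from hot to cold, and it vanishes only when the two temperatures are equal.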

Entropy and Disorder

Entropy is often interpreted as a measure of disorder.

Examples include:

Solid to Liquid

When ice melts into water, molecules become more disordered.

Liquid to Gas

When water evaporates, molecules spread out even more.

Gas Expansion

When a gas expands into a larger volume, entropy increases.

In each case, the number of microstates increases.
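For the gas expansion case, the link to microstates can be made explicit under an ideal gas assumption: at fixed temperature the number of positions available to each particle scales with the volume, so \(\Omega \propto V^N\) and \(S = k \ln \Omega\) gives \(\Delta S = N k \ln(V_2 / V_1)\). The sketch below evaluates this; the function name is hypothetical.

```python
import math

K_B = 1.380649e-23      # Boltzmann constant, J/K
N_A = 6.02214076e23     # Avogadro constant, 1/mol

def expansion_entropy_change(n_particles, v_initial, v_final):
    """N k ln(V_final / V_initial) for an ideal gas at fixed temperature."""
    if v_initial <= 0 or v_final <= 0:
        raise ValueError("volumes must be positive")
    return n_particles * K_B * math.log(v_final / v_initial)

# One mole of gas doubling its volume gains R ln 2, about 5.76 J/K.
print(expansion_entropy_change(N_A, 1.0, 2.0))
```

Only the ratio of volumes matters, so the units of volume cancel.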

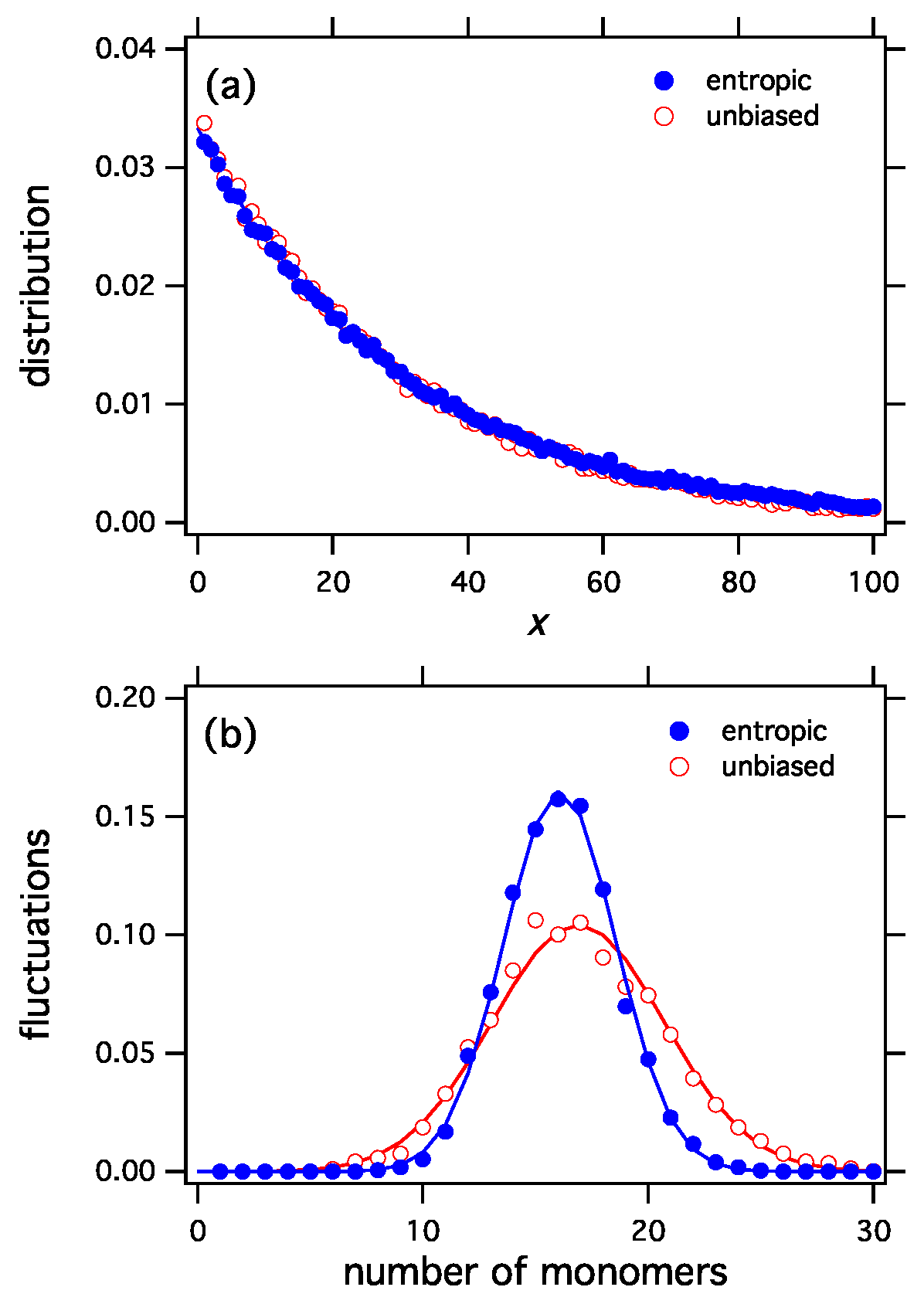

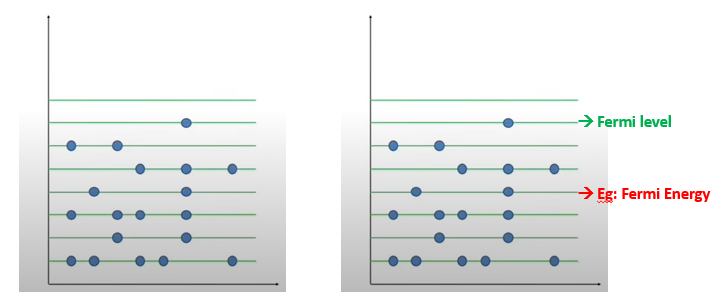

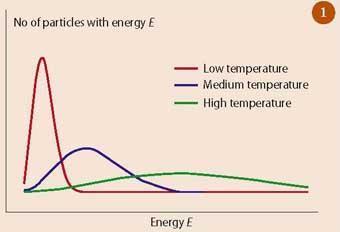

Entropy in Statistical Mechanics

Statistical mechanics provides a microscopic explanation of entropy.

In this framework:

- Systems consist of many particles.

- Each particle can occupy different energy states.

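For a system in thermal equilibrium at temperature \(T\), the probability of finding it in a particular state with energy \(E_i\) is given by the Boltzmann distribution, \(p_i \propto e^{-E_i / kT}\).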

Statistical mechanics connects microscopic particle behavior with macroscopic thermodynamic properties.
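As an illustration of the Boltzmann distribution, in which a state of energy \(E_i\) has probability proportional to \(e^{-E_i / kT}\), the sketch below normalizes these weights for a hypothetical three-level system. The level spacing and temperatures are arbitrary choices for the example.

```python
import math

K_B = 1.380649e-23  # Boltzmann constant, J/K

def boltzmann_probabilities(energies_joules, temperature_kelvin):
    """Return p_i = exp(-E_i / kT) / Z for each energy level."""
    beta = 1.0 / (K_B * temperature_kelvin)
    e_min = min(energies_joules)
    # Subtracting the lowest energy leaves the probabilities unchanged
    # but avoids underflow in the exponentials.
    weights = [math.exp(-beta * (e - e_min)) for e in energies_joules]
    partition_sum = sum(weights)
    return [w / partition_sum for w in weights]

# Hypothetical three-level system with spacing equal to kT at 300 K.
spacing = K_B * 300.0
levels = [0.0, spacing, 2 * spacing]
for temperature in (100.0, 300.0, 3000.0):
    print(temperature, boltzmann_probabilities(levels, temperature))
```

At low temperature nearly all the probability sits in the lowest level; at high temperature the three levels approach equal occupation.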

Entropy and the Arrow of Time

Entropy is closely related to the arrow of time.

Most macroscopic processes are irreversible because they increase total entropy, and reversing them would require entropy to decrease.

Examples include:

- A broken glass cannot spontaneously reassemble.

- Heat flows from hot objects to cold objects.

- Gases mix spontaneously but do not unmix.

These processes move toward states with higher entropy.

This asymmetry is widely regarded as the reason time appears to move in one direction.
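The one-way drift toward higher entropy can be seen in a toy simulation. The sketch below uses the Ehrenfest urn model, a standard simplification that is not part of the original text: all particles start in the left half of a box, and at each step one randomly chosen particle hops to the other half. The entropy of the macrostate is taken as \(\ln\) of its multiplicity, in units of \(k\).

```python
import random
from math import comb, log

def simulate_mixing(n_particles, n_steps, seed=0):
    """Ehrenfest urn model: each step moves one random particle across.

    Starts with every particle in the left half. Returns a list of
    (n_left, entropy_over_k) pairs, where entropy_over_k is
    ln C(N, n_left), the log of the macrostate's multiplicity.
    """
    rng = random.Random(seed)
    n_left = n_particles
    history = [(n_left, log(comb(n_particles, n_left)))]
    for _ in range(n_steps):
        # A uniformly chosen particle is on the left with probability
        # n_left / N; whichever side it is on, it hops to the other.
        if rng.randrange(n_particles) < n_left:
            n_left -= 1
        else:
            n_left += 1
        history.append((n_left, log(comb(n_particles, n_left))))
    return history

history = simulate_mixing(n_particles=100, n_steps=2000)
for step in (0, 50, 200, 2000):
    n_left, s_over_k = history[step]
    print(step, n_left, round(s_over_k, 2))
```

Each individual hop is equally likely in either direction for the chosen particle, yet the population drifts toward an even split and then fluctuates around it, because that is where almost all microstates lie.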

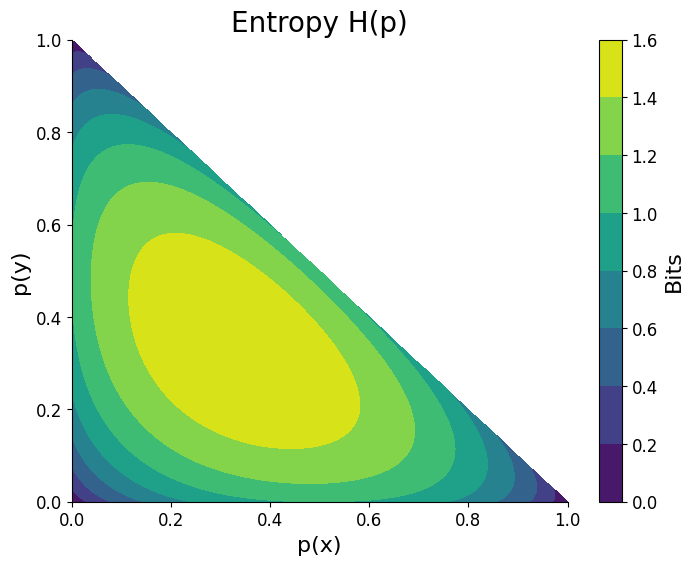

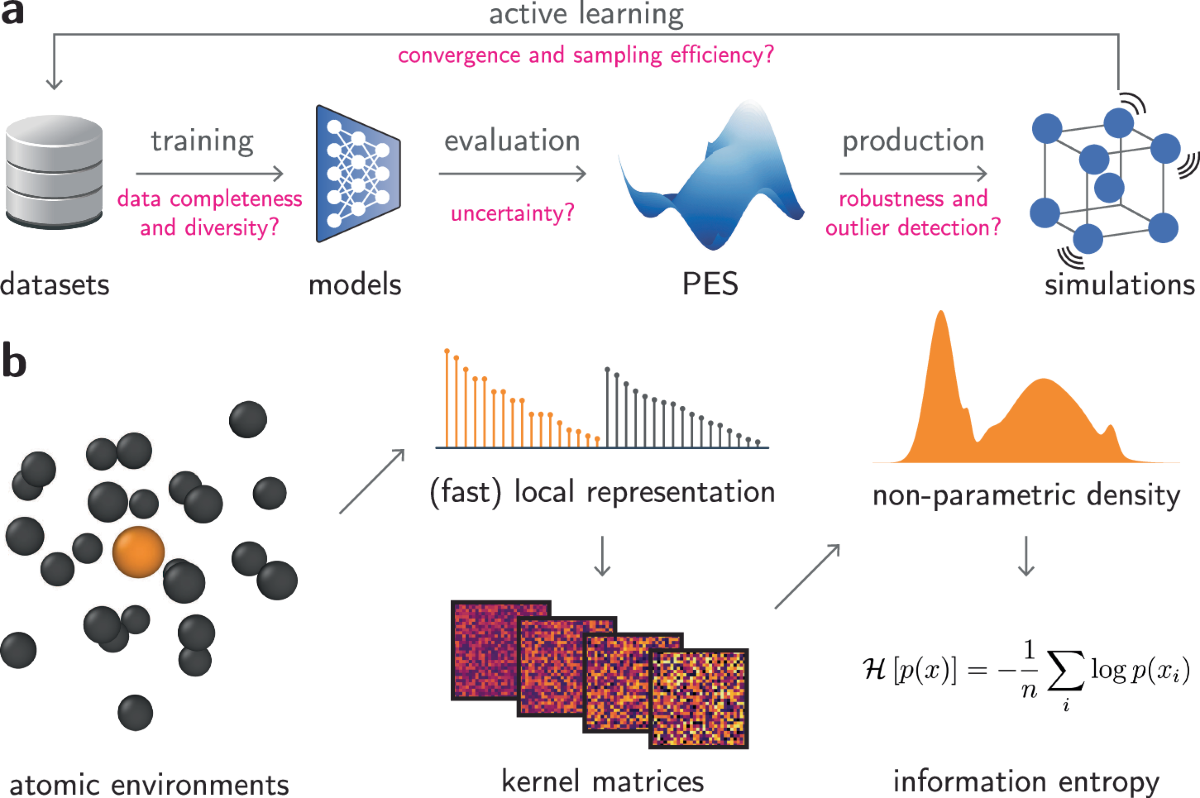

Entropy in Information Theory

Entropy also appears in information theory.

In this context, entropy measures the average uncertainty about the state of a system, or equivalently the average information gained by observing it.

The information entropy formula is:

\[
H = -\sum_i p_i \log p_i
\]

Where:

- \(p_i\) = probability of state \(i\)

The base of the logarithm sets the unit: base 2 gives entropy in bits.
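A minimal sketch of the information entropy formula, using base-2 logarithms by default so that the result is in bits. The function name `shannon_entropy` and the example distributions are illustrative.

```python
import math

def shannon_entropy(probabilities, base=2.0):
    """H = -sum(p_i * log(p_i)), in bits when base is 2.

    Terms with p_i == 0 contribute nothing, following the usual
    convention that 0 * log(0) is taken as 0.
    """
    if not math.isclose(sum(probabilities), 1.0, abs_tol=1e-9):
        raise ValueError("probabilities must sum to 1")
    return sum(-p * math.log(p, base) for p in probabilities if p > 0)

print(shannon_entropy([0.5, 0.5]))    # fair coin: 1 bit
print(shannon_entropy([0.9, 0.1]))    # biased coin: less than 1 bit
print(shannon_entropy([0.25] * 4))    # four equally likely states: 2 bits
print(shannon_entropy([1.0, 0.0]))    # certain outcome: no uncertainty
```

As with thermodynamic entropy, the value is largest when all states are equally likely and drops to zero when a single state is certain.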

Information entropy is widely used in:

- Data compression

- Communication systems

- Machine learning

Applications of Entropy and Microstates

Entropy and microstates play important roles in many scientific fields.

Chemistry

Predicting reaction spontaneity.

Physics

Understanding phase transitions and thermal equilibrium.

Cosmology

Studying the thermodynamic evolution of the universe.

Engineering

Analyzing efficiency of engines and energy systems.

Entropy is a universal concept that appears across many disciplines.

Importance in Modern Physics

Entropy and microstates provide a deep understanding of physical systems.

They connect microscopic particle behavior with macroscopic thermodynamic laws.

Entropy also explains why natural processes evolve toward equilibrium.

This concept plays a central role in statistical mechanics, thermodynamics, and information theory.

Conclusion

Entropy and microstates are fundamental concepts that explain how microscopic particle arrangements determine macroscopic thermodynamic properties. A macrostate describes the overall state of a system, while microstates represent the many possible microscopic configurations that correspond to that macrostate.

The famous Boltzmann equation shows that entropy increases with the number of possible microstates, explaining why systems naturally evolve toward states of higher probability and disorder. Entropy also provides insight into irreversible processes, the arrow of time, and the behavior of complex systems.

From thermodynamics and statistical mechanics to information theory and cosmology, entropy remains one of the most profound and universal concepts in physics.