Introduction to Bayes’ Theorem

Bayes’ Theorem is one of the most important principles in probability theory and statistics. It provides a mathematical rule for updating probabilities when new information becomes available. In simple terms, Bayes’ theorem allows us to revise our beliefs or predictions based on additional evidence.

The theorem is named after Thomas Bayes, an eighteenth-century English mathematician and Presbyterian minister whose essay introducing the idea was published posthumously in 1763. Pierre-Simon Laplace later independently developed and generalized the result, making it a key component of modern statistical inference.

In many real-world situations, probabilities are not fixed. Instead, they change when new data or evidence becomes available. For example:

- Doctors update the probability of a disease after seeing medical test results.

- Email systems update the probability that a message is spam after analyzing its content.

- Weather forecasting models update probabilities when new atmospheric data arrives.

Bayes’ theorem provides the mathematical framework that allows such updates.

This theorem forms the foundation of Bayesian statistics, a branch of statistics that focuses on updating probabilities using evidence. It is widely used in machine learning, artificial intelligence, medical diagnosis, economics, data science, and decision theory.

Understanding Bayes’ theorem helps students and researchers analyze uncertain situations more effectively and develop predictive models.

Basic Concepts Required for Bayes’ Theorem

To understand Bayes’ theorem, it is necessary to review some basic probability concepts.

Random Experiment

A random experiment is an experiment whose outcome cannot be predicted with certainty. Examples include tossing a coin, rolling a die, or drawing a card from a deck.

Sample Space

The sample space is the set of all possible outcomes of a random experiment.

Example:

When tossing a coin:

S = {Head, Tail}

When rolling a die:

S = {1, 2, 3, 4, 5, 6}

Event

An event is a subset of the sample space.

Example:

Event A = obtaining an even number when rolling a die.

A = {2, 4, 6}
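The die example can be written as a short Python sketch to make these definitions concrete. It assumes a fair die, so every outcome in S is equally likely and P(A) is simply |A| / |S|. The helper name `probability` is illustrative and not part of any library.

```python
from fractions import Fraction

# Sample space for one roll of a fair six-sided die.
S = {1, 2, 3, 4, 5, 6}

# Event A: obtaining an even number (a subset of S).
A = {x for x in S if x % 2 == 0}


def probability(event, sample_space):
    """P(event) when all outcomes are equally likely: |event| / |sample space|."""
    if not event <= sample_space:
        raise ValueError("an event must be a subset of the sample space")
    return Fraction(len(event), len(sample_space))


print(A)                   # {2, 4, 6}
print(probability(A, S))   # 1/2
```

Python sets map naturally onto the definitions: the subset test `<=` checks that A really is an event of S.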

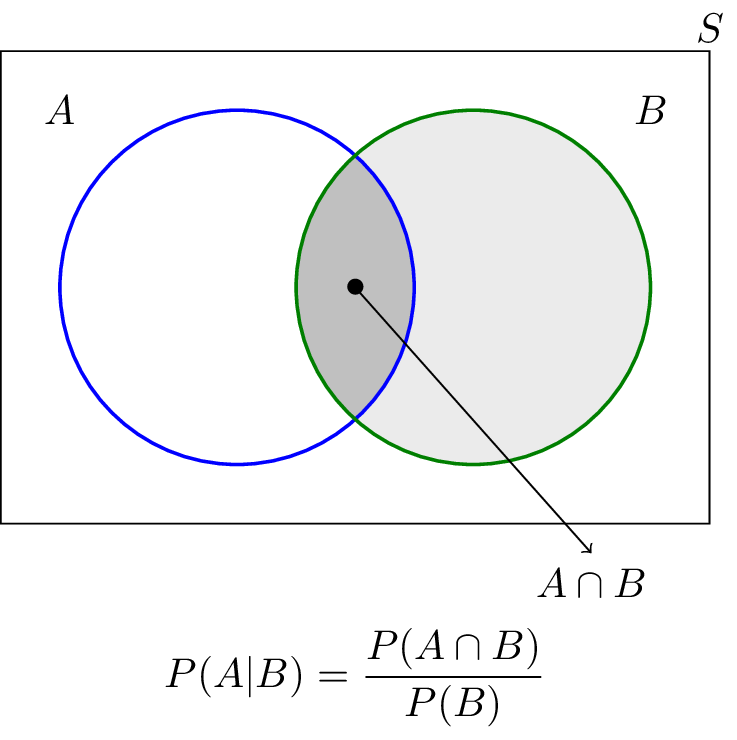

Conditional Probability

Conditional probability measures the probability of an event A given that another event B has occurred.

The formula is:

P(A | B) = P(A ∩ B) / P(B), provided P(B) > 0

This concept forms the basis for Bayes’ theorem.
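As an illustration, the formula can be checked by enumerating the outcomes of a fair die. The choice of B (a roll greater than 3) is an assumption made only for this sketch, and exact fractions from Python's `fractions` module avoid rounding.

```python
from fractions import Fraction

S = {1, 2, 3, 4, 5, 6}   # one roll of a fair die
A = {2, 4, 6}            # A: the result is even
B = {4, 5, 6}            # B: the result is greater than 3 (illustrative choice)


def p(event):
    """Probability of an event when all outcomes in S are equally likely."""
    return Fraction(len(event), len(S))


def conditional(a, b):
    """P(a | b) = P(a ∩ b) / P(b), defined only when P(b) > 0."""
    if p(b) == 0:
        raise ValueError("P(B) must be positive")
    return p(a & b) / p(b)   # a & b is the set intersection


print(conditional(A, B))  # 2/3
```

Here A ∩ B = {4, 6}, so P(A ∩ B) = 2/6 and P(B) = 3/6, giving 2/3: knowing the roll exceeds 3 raises the chance of an even number from 1/2 to 2/3.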

Statement of Bayes’ Theorem

Bayes’ theorem provides a formula that relates conditional probabilities.

The mathematical expression of Bayes’ theorem is:

P(A | B) = [P(B | A) × P(A)] / P(B), provided P(B) > 0

Where:

- P(A) = prior probability of event A

- P(B | A) = probability of observing B given that A is true (the likelihood)

- P(B) = total probability of observing B (the evidence)

- P(A | B) = posterior probability of A after observing B

In other words, Bayes’ theorem reverses the direction of conditioning: it computes P(A | B), which is often hard to measure directly, from P(B | A), which is often easier to estimate.

It allows us to update the initial belief (prior probability) using observed data.
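The formula translates directly into a small function. This is a minimal sketch: the name `bayes_posterior` is hypothetical, and the checks only reject inputs for which the formula is undefined or inconsistent (P(B) must be positive, and P(B | A)P(A) = P(A ∩ B) cannot exceed P(B)).

```python
def bayes_posterior(prior, likelihood, evidence):
    """Return P(A | B) = P(B | A) * P(A) / P(B).

    prior:      P(A)
    likelihood: P(B | A)
    evidence:   P(B), which must be positive
    """
    if not 0 < evidence <= 1:
        raise ValueError("P(B) must lie in (0, 1]")
    joint = likelihood * prior  # P(A ∩ B)
    # Small tolerance so floating-point rounding does not trigger the check.
    if joint > evidence + 1e-12:
        raise ValueError("P(B | A) * P(A) cannot exceed P(B)")
    return joint / evidence


# Illustrative numbers: P(A) = 0.3, P(B | A) = 0.8, P(B) = 0.5
print(bayes_posterior(prior=0.3, likelihood=0.8, evidence=0.5))  # approximately 0.48
```

In this illustration, observing B raises the probability of A from the prior 0.3 to a posterior of about 0.48.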

Components of Bayes’ Theorem

Bayes’ theorem consists of four main components.

Prior Probability

The prior probability represents the initial belief about an event before new information is considered.

Example:

The probability that a randomly selected person has a disease.

Likelihood

Likelihood is the probability of observing evidence given that the event is true.

Example:

The probability that a medical test is positive when a person actually has the disease.

Evidence

Evidence is the total probability of the observed data, counting every way in which that data could arise.

Example:

The probability that a medical test result is positive regardless of whether the person has the disease.

Posterior Probability

Posterior probability is the updated probability of the event after considering the evidence.

Example:

The probability that a person has a disease given that the test result is positive.

Bayes’ theorem connects these four components mathematically.

Understanding Bayes’ Theorem with an Example

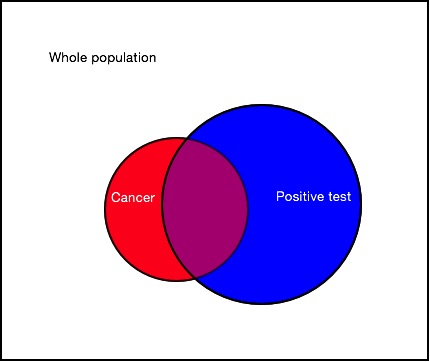

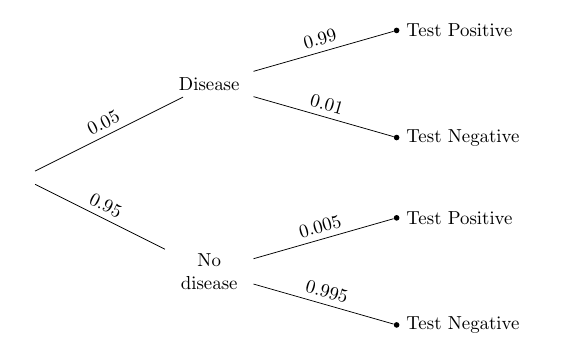

Consider a medical test for a disease.

Suppose:

- 1% of people have the disease.

- The test correctly detects the disease in 99% of people who have it.

- The test incorrectly gives a positive result for 5% of healthy individuals.

Let:

A = person has the disease

B = test result is positive

We want to find:

P(A | B)

Using Bayes’ theorem:

P(A | B) = [P(B | A) × P(A)] / P(B)

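Substituting values:

P(A) = 0.01

P(B | A) = 0.99

P(A’) = 1 − 0.01 = 0.99, where A’ = person does not have the disease

P(B | A’) = 0.05

We also calculate P(B) using the law of total probability:

P(B) = P(B | A)P(A) + P(B | A’)P(A’) = (0.99)(0.01) + (0.05)(0.99) = 0.0099 + 0.0495 = 0.0594

Substituting into Bayes’ theorem:

P(A | B) = 0.0099 / 0.0594 ≈ 0.167

So a person who receives a positive result has only about a 16.7% chance of actually having the disease. Because the disease is rare, the false positives from the large healthy group outnumber the true positives from the small group of people who are ill.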

This example demonstrates how Bayes’ theorem helps update probabilities using new information.
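The same calculation can be reproduced in a few lines of Python. The probabilities come straight from the example; `Fraction` is used so the result is exact rather than a rounded decimal, and the variable names are illustrative.

```python
from fractions import Fraction

# Values from the example; Fraction keeps the arithmetic exact.
p_disease = Fraction(1, 100)             # P(A): 1% of people have the disease
p_pos_given_disease = Fraction(99, 100)  # P(B | A): detection rate
p_pos_given_healthy = Fraction(5, 100)   # P(B | A'): false-positive rate

p_healthy = 1 - p_disease                # P(A')

# Law of total probability: P(B) = P(B | A)P(A) + P(B | A')P(A')
p_pos = p_pos_given_disease * p_disease + p_pos_given_healthy * p_healthy

# Bayes' theorem: P(A | B) = P(B | A)P(A) / P(B)
p_disease_given_pos = p_pos_given_disease * p_disease / p_pos

print(p_pos, float(p_pos))                              # 297/5000 0.0594
print(p_disease_given_pos, float(p_disease_given_pos))  # 1/6 0.16666666666666666
```

The exact posterior is 1/6, matching the rounded value of about 0.167.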

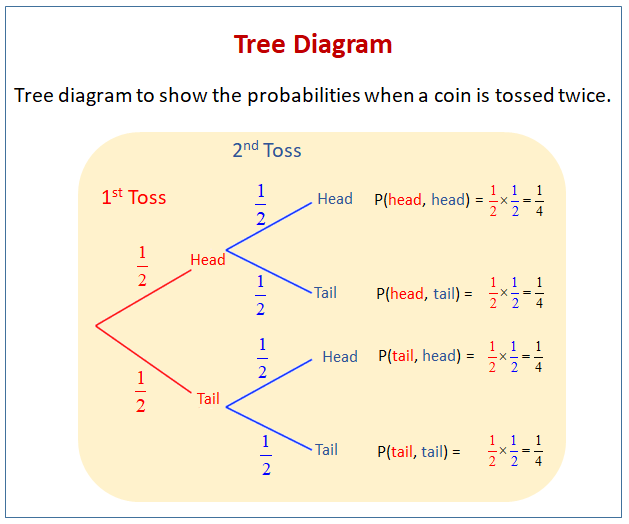

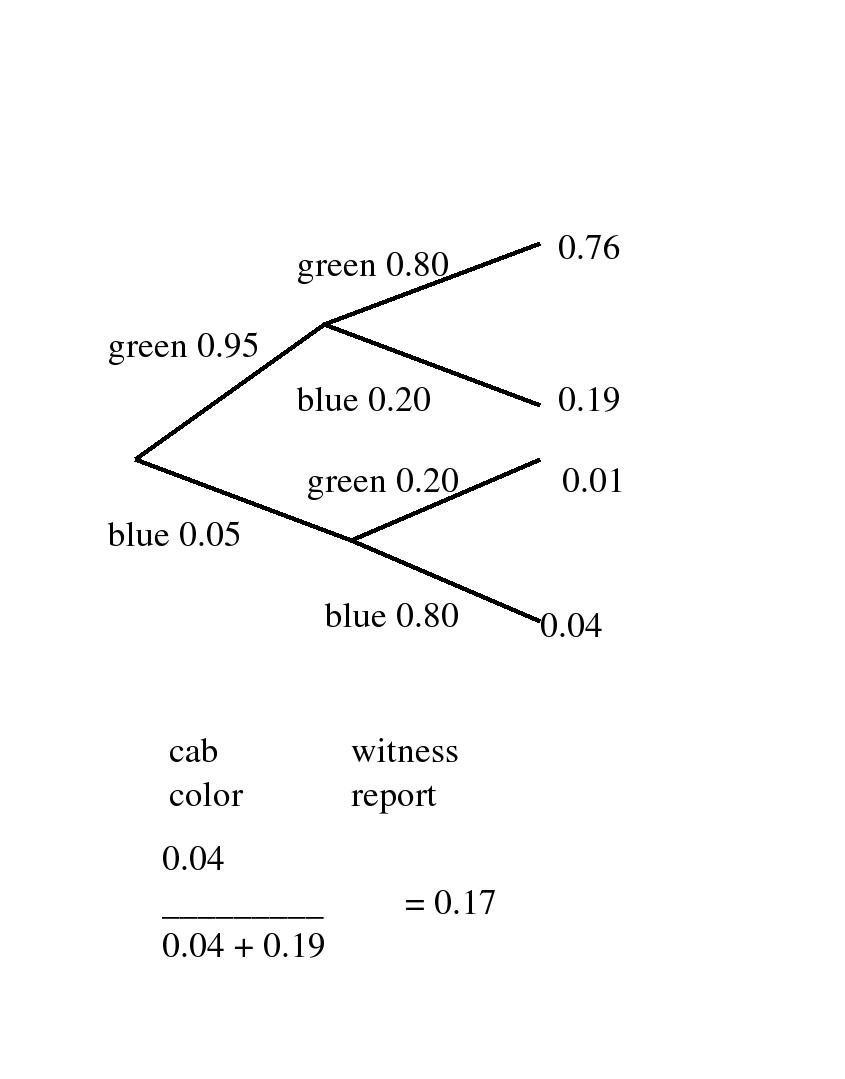

Bayes’ Theorem Using Tree Diagrams

Tree diagrams provide a visual representation of probability events.

In a tree diagram:

- Each branch represents an outcome.

- Probabilities are assigned to each branch.

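- Joint probabilities are calculated by multiplying the probabilities along each path from the root to a leaf.

Bayes’ theorem can then be applied by dividing the joint probability of the path of interest by the sum of the joint probabilities of all paths consistent with the observed evidence.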

This graphical approach helps simplify complex probability problems.
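A tree diagram can also be represented as nested dictionaries, with one level per branching step. This sketch reuses the medical-test numbers; the negative-result branches are simply the complements (1 − 0.99 and 1 − 0.05). It multiplies along each path, then divides the disease-and-positive path by the total of all paths ending in a positive result. The dictionary layout is an illustrative choice, not a standard format.

```python
from fractions import Fraction

# First split: disease status. Second split: test result given that status.
first_level = {"disease": Fraction(1, 100), "healthy": Fraction(99, 100)}
second_level = {
    "disease": {"positive": Fraction(99, 100), "negative": Fraction(1, 100)},
    "healthy": {"positive": Fraction(5, 100), "negative": Fraction(95, 100)},
}

# Joint probability of each (status, result) path: multiply along the branches.
paths = {
    (status, result): p_status * p_result
    for status, p_status in first_level.items()
    for result, p_result in second_level[status].items()
}

# Total probability of the evidence: every path that ends in "positive".
positive_total = sum(p for (_, result), p in paths.items() if result == "positive")

# Bayes' theorem: path of interest divided by all paths matching the evidence.
posterior = paths[("disease", "positive")] / positive_total

assert sum(paths.values()) == 1  # the leaves cover the whole sample space
print(posterior)  # 1/6
```

The tree approach gives the same answer as the formula, because summing the "positive" leaves is exactly the denominator P(B).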

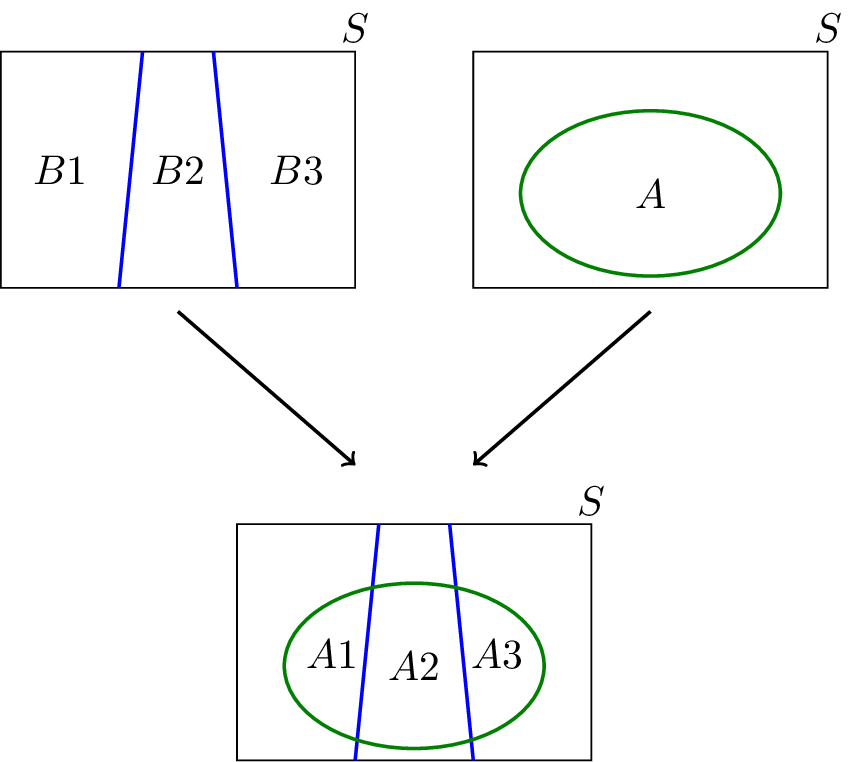

Bayes’ Theorem and the Law of Total Probability

Bayes’ theorem is closely related to the law of total probability.

Suppose events B₁, B₂, …, Bₙ form a partition of the sample space: they are mutually exclusive, their union is the whole sample space, and each P(Bᵢ) > 0.

Then:

P(A) = P(A | B₁)P(B₁) + P(A | B₂)P(B₂) + … + P(A | Bₙ)P(Bₙ)

Using this rule, Bayes’ theorem can be written as:

P(Bᵢ | A) = [P(A | Bᵢ) P(Bᵢ)] / Σⱼ₌₁ⁿ [P(A | Bⱼ) P(Bⱼ)], provided P(A) > 0

This extended form is often used in statistical modeling.
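The extended form can be sketched as a function that takes the prior P(Bⱼ) and the likelihood P(A | Bⱼ) for every event in the partition and returns all the posteriors at once. The factory scenario and its numbers are hypothetical, chosen only to illustrate the calculation, and the function name is likewise an assumption.

```python
import math
from fractions import Fraction


def posterior_over_partition(priors, likelihoods):
    """Return [P(B_1 | A), ..., P(B_n | A)].

    priors:      [P(B_1), ..., P(B_n)] for a partition, so they sum to 1
    likelihoods: [P(A | B_1), ..., P(A | B_n)]
    """
    if len(priors) != len(likelihoods):
        raise ValueError("need one likelihood per event in the partition")
    if not math.isclose(sum(priors), 1):
        raise ValueError("priors of a partition must sum to 1")
    # Numerator terms P(A | B_j) P(B_j) for every j.
    joints = [lik * p for lik, p in zip(likelihoods, priors)]
    # Law of total probability: P(A) is the sum of those terms.
    p_a = sum(joints)
    if p_a == 0:
        raise ValueError("P(A) must be positive")
    return [j / p_a for j in joints]


# Hypothetical factory: machines B1, B2, B3 make 50%, 30%, 20% of all items,
# with defect rates 1%, 2%, 3%. Event A: a randomly chosen item is defective.
priors = [Fraction(50, 100), Fraction(30, 100), Fraction(20, 100)]
defect_rates = [Fraction(1, 100), Fraction(2, 100), Fraction(3, 100)]
print([str(x) for x in posterior_over_partition(priors, defect_rates)])
# ['5/17', '6/17', '6/17']
```

Although the first machine produces half of the items, it is the least likely source of a defective one, because its defect rate is the lowest.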

Applications of Bayes’ Theorem

Bayes’ theorem has numerous applications across different fields.

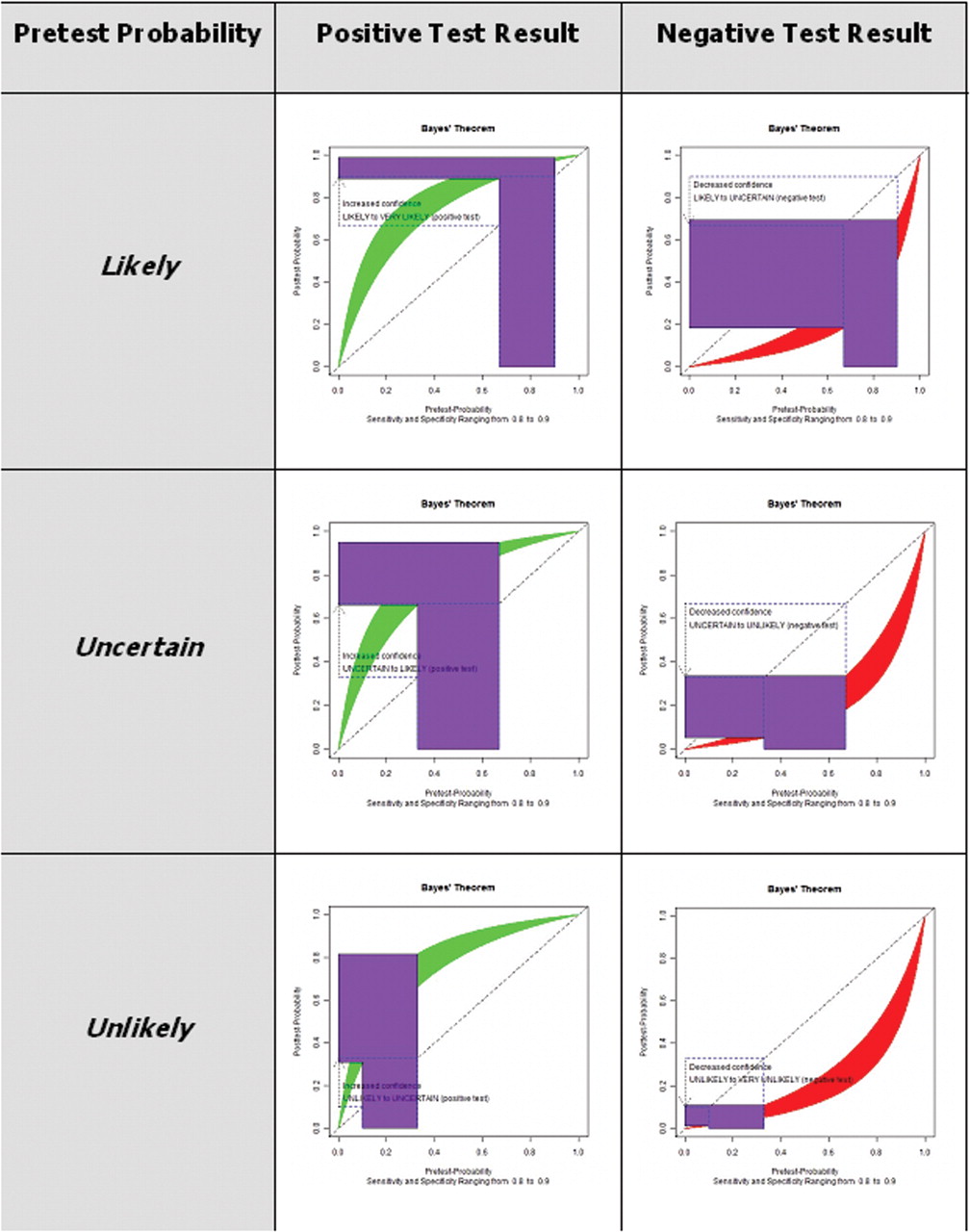

Medical Diagnosis

Doctors use Bayesian analysis to determine the probability of diseases based on test results.

Spam Email Filtering

Email systems classify messages as spam or legitimate using Bayesian probability models.
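One common way to build such a filter is a naive Bayes classifier, which applies Bayes’ theorem under the simplifying assumption that words occur independently of one another once the class (spam or not spam) is known. The sketch below is illustrative only: the prior and the per-word probabilities are made-up values standing in for estimates a real filter would learn from labeled email, and the function name is hypothetical.

```python
import math

# Hypothetical values, for illustration only.
P_SPAM = 0.4           # prior P(spam)
P_HAM = 1 - P_SPAM     # prior P(not spam)

# Hypothetical per-word likelihoods P(word | class).
WORD_GIVEN_SPAM = {"free": 0.30, "winner": 0.20, "meeting": 0.02}
WORD_GIVEN_HAM = {"free": 0.05, "winner": 0.01, "meeting": 0.15}


def spam_probability(words):
    """Return P(spam | words) under the naive Bayes independence assumption."""
    # Sum logarithms instead of multiplying, to avoid underflow on long messages.
    log_spam = math.log(P_SPAM)
    log_ham = math.log(P_HAM)
    for w in words:
        if w in WORD_GIVEN_SPAM:  # words without an estimate are ignored
            log_spam += math.log(WORD_GIVEN_SPAM[w])
            log_ham += math.log(WORD_GIVEN_HAM[w])
    # Normalize the two scores: P(spam | words) = s / (s + h)
    return 1 / (1 + math.exp(log_ham - log_spam))


print(round(spam_probability(["free", "winner"]), 3))  # 0.988
print(round(spam_probability(["meeting"]), 3))         # 0.082
```

The denominator s + h plays the role of the evidence P(B): it is the total probability of seeing those words under either class.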

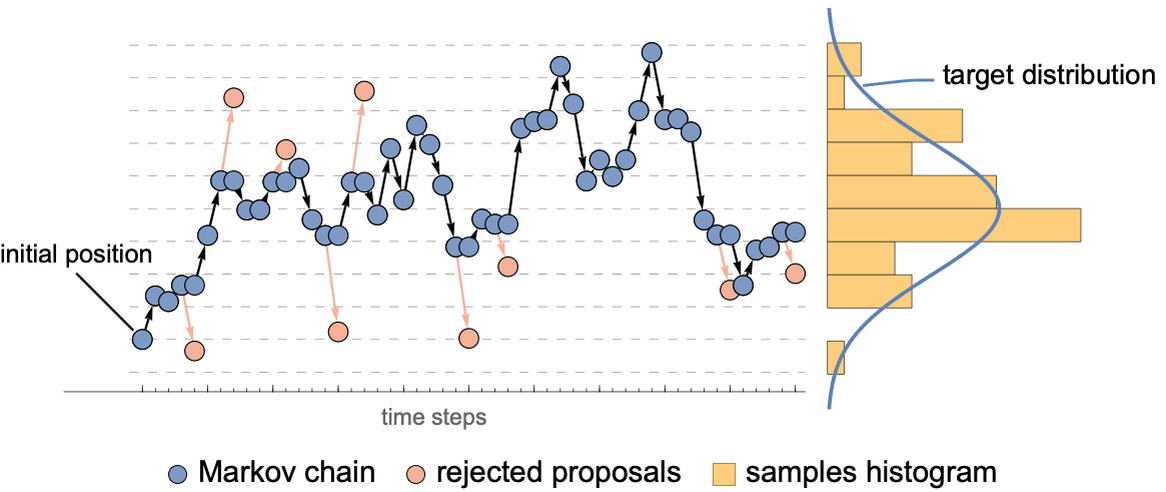

Machine Learning

Many machine learning methods, such as naive Bayes classifiers and Bayesian networks, use Bayesian inference for prediction.

Risk Analysis

Financial institutions analyze risks using Bayesian models.

Weather Forecasting

Meteorologists update weather predictions using new atmospheric data.

These applications highlight the practical importance of Bayes’ theorem.

Importance of Bayes’ Theorem

Bayes’ theorem is fundamental in probability and statistics because it allows probabilities to be updated when new evidence is available.

It provides a systematic way to combine prior knowledge with observed data.
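This combination can be repeated: after each piece of evidence, the posterior becomes the prior for the next update. The sketch below applies the medical-test numbers from the earlier example to two positive results in a row. It assumes the two test results are conditionally independent given the person's true disease status, which real repeated tests may not satisfy, and the `update` helper is hypothetical.

```python
from fractions import Fraction


def update(prior, p_e_given_h, p_e_given_not_h):
    """One Bayesian update of P(H) after observing evidence E."""
    numerator = p_e_given_h * prior
    # Law of total probability for the evidence.
    evidence = numerator + p_e_given_not_h * (1 - prior)
    return numerator / evidence


belief = Fraction(1, 100)  # prior: 1% of people have the disease
for test in (1, 2):
    # Each positive result: P(+ | disease) = 0.99, P(+ | healthy) = 0.05
    belief = update(belief, Fraction(99, 100), Fraction(5, 100))
    print(f"after positive test {test}: {belief} ≈ {float(belief):.3f}")
# after positive test 1: 1/6 ≈ 0.167
# after positive test 2: 99/124 ≈ 0.798
```

A single positive result leaves the disease fairly unlikely, but a second independent positive result makes it the more probable explanation.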

The theorem is particularly important in fields that require decision-making under uncertainty.

Many methods in modern data science and artificial intelligence build on Bayesian reasoning for predictive modeling and statistical inference.

Understanding Bayes’ theorem helps researchers analyze complex systems and interpret uncertain information effectively.

Conclusion

Bayes’ theorem is a powerful mathematical tool used to update probabilities based on new evidence. It connects prior probabilities, likelihoods, and posterior probabilities to provide a comprehensive framework for analyzing uncertain events.

The theorem plays a critical role in probability theory, statistics, machine learning, medicine, economics, and many other fields. By allowing probabilities to be revised when new data becomes available, Bayes’ theorem helps researchers make better predictions and decisions.

Understanding Bayes’ theorem not only strengthens knowledge of probability theory but also provides valuable insights into how information influences decision-making in uncertain environments.