Introduction

Entropy is a fundamental concept in thermodynamics, physics, chemistry, and information theory. It describes the degree of disorder, randomness, or energy dispersal within a system. The concept of entropy plays a central role in understanding natural processes, chemical reactions, and the direction in which physical systems evolve over time.

In simple terms, entropy measures how spread out or disorganized the energy in a system is. Left to themselves, isolated systems evolve toward states of higher entropy. This principle explains many everyday phenomena, such as why ice melts in a warm room, gases expand, and heat flows from hot objects to cold objects.

Entropy was first introduced in the 19th century by the German physicist Rudolf Clausius while studying heat engines and thermodynamic processes. Later, scientists such as Ludwig Boltzmann connected entropy with molecular motion and probability, giving the concept a deeper statistical interpretation.

Entropy is closely related to the Second Law of Thermodynamics, which states that the total entropy of an isolated system never decreases over time: it increases in any irreversible process and stays constant only in an idealized reversible one. This law explains why certain processes occur spontaneously while others do not.

Entropy has become one of the most important ideas in modern science. It helps scientists understand processes ranging from molecular reactions and phase transitions to cosmology and information processing.

1. Definition of Entropy

Entropy is a thermodynamic property that measures the degree of disorder or randomness in a system.

In thermodynamics, entropy is symbolized by S.

Entropy can also be described as the measure of energy dispersal within a system.

For example:

- A perfectly ordered crystal has very low entropy.

- A gas with freely moving molecules has high entropy.

As systems become more disordered, their entropy increases.

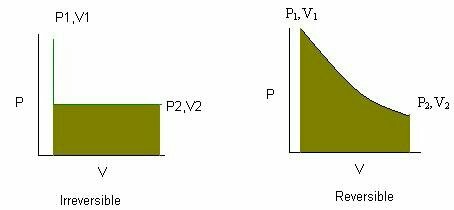

2. Mathematical Expression of Entropy

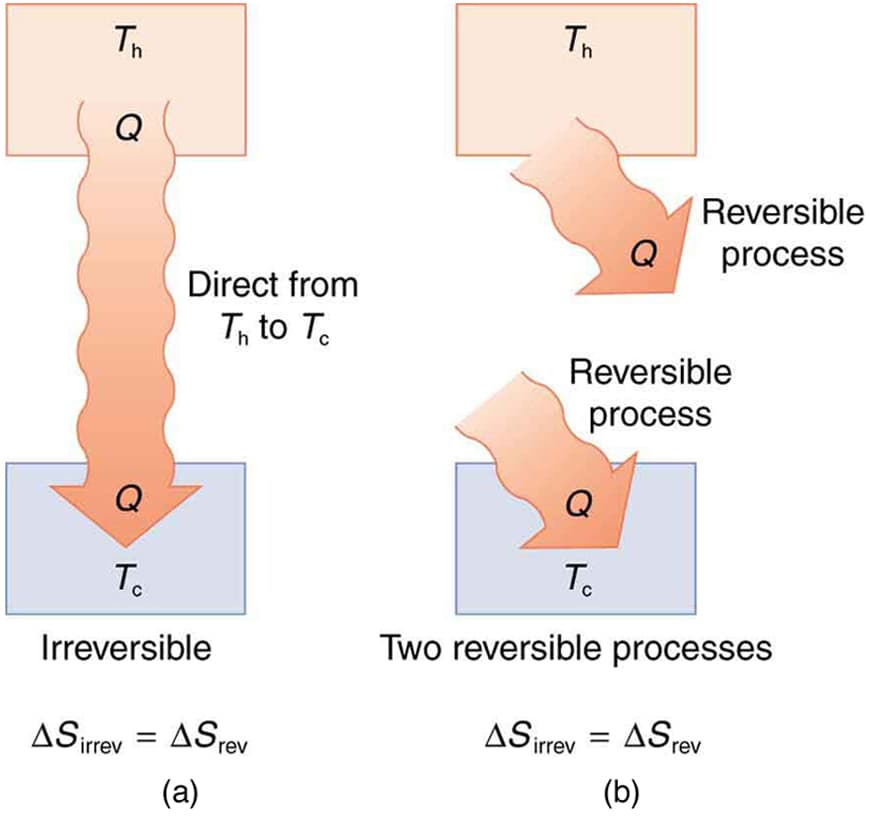

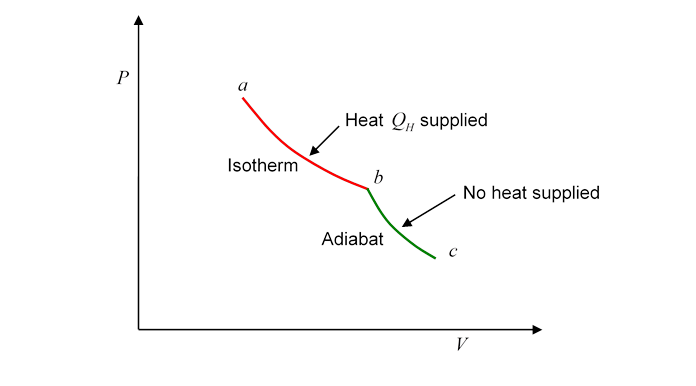

For heat transferred reversibly at a constant absolute temperature, the change in entropy is defined by the relationship:

\Delta S = \frac{Q_{rev}}{T}

Where:

ΔS = change in entropy

Qrev = heat absorbed in a reversible process

T = absolute temperature (Kelvin)

This equation shows that entropy change depends on the amount of heat transferred and the temperature at which the transfer occurs.
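The dependence on both heat and temperature can be sketched numerically. The snippet below is a minimal illustration, assuming heat absorbed reversibly at a fixed temperature; the function name `entropy_change` and the numbers (1000 J at 300 K and at 600 K) are chosen here for illustration and do not come from the text.

```python
def entropy_change(q_rev: float, temperature: float) -> float:
    """Return delta S = Q_rev / T in J/K.

    q_rev: heat absorbed reversibly, in joules.
    temperature: constant absolute temperature, in kelvin.
    """
    if temperature <= 0:
        raise ValueError("absolute temperature must be positive")
    return q_rev / temperature


# Illustrative numbers (assumed): the same 1000 J absorbed at two temperatures.
ds_at_300k = entropy_change(1000.0, 300.0)
ds_at_600k = entropy_change(1000.0, 600.0)

print(f"delta S at 300 K: {ds_at_300k:.3f} J/K")
print(f"delta S at 600 K: {ds_at_600k:.3f} J/K")
```

The same quantity of heat produces twice the entropy change at 300 K as at 600 K, which is why the temperature of the transfer matters as much as the amount of heat.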

3. Statistical Interpretation of Entropy

The Austrian physicist Ludwig Boltzmann connected entropy with molecular behavior.

His famous equation is:

S = k \ln W

Where:

S = entropy

k = Boltzmann constant (1.380649 × 10⁻²³ J/K)

W = number of possible microscopic arrangements (microstates)

This equation means that entropy increases when the number of possible molecular arrangements increases.

For example:

- A crystal has very few possible arrangements.

- A gas has many possible arrangements.

Therefore, gases have much higher entropy than solids.
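To get a feel for the magnitudes in S = k ln W, here is a small sketch. It assumes a toy model that is not part of the text: N independent particles, each with two equally likely states, so that W = 2^N. Because W is astronomically large for a mole of particles, the hypothetical helper `boltzmann_entropy` takes ln W rather than W itself.

```python
import math

K_B = 1.380649e-23   # Boltzmann constant in J/K (exact SI value)
N_A = 6.02214076e23  # Avogadro constant in 1/mol (exact SI value)


def boltzmann_entropy(ln_w: float) -> float:
    """Return S = k ln W in J/K, given ln W (natural log of the microstate count)."""
    if ln_w < 0:
        raise ValueError("W must be at least 1, so ln W cannot be negative")
    return K_B * ln_w


# Toy model (assumed for illustration): one mole of two-state particles,
# so W = 2**N and ln W = N * ln 2.
ln_w_one_mole = N_A * math.log(2)
s_one_mole = boltzmann_entropy(ln_w_one_mole)

# A system with a single possible arrangement (W = 1) has zero entropy.
s_single_state = boltzmann_entropy(math.log(1))

print(f"S for one mole of two-state particles: {s_one_mole:.3f} J/K")
print(f"S for a single microstate: {s_single_state} J/K")
```

Even though k is tiny, the enormous number of arrangements available to a mole of particles yields an entropy of a few joules per kelvin, the scale seen in laboratory measurements.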

4. Entropy and Disorder

Entropy is often associated with disorder.

Low Entropy

Systems with high order have low entropy.

Examples include:

- Crystalline solids

- Highly organized molecular structures

High Entropy

Systems with greater randomness have higher entropy.

Examples include:

- Gases

- Mixed substances

- Random particle arrangements

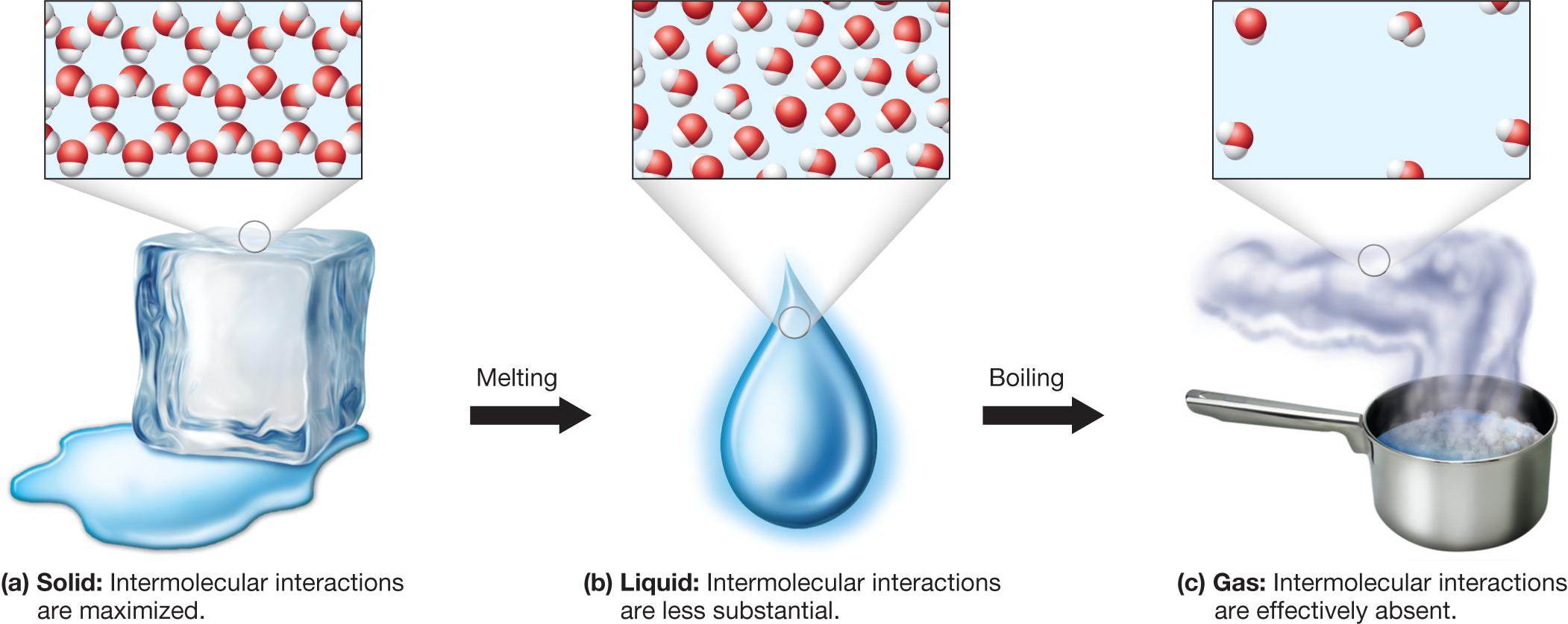

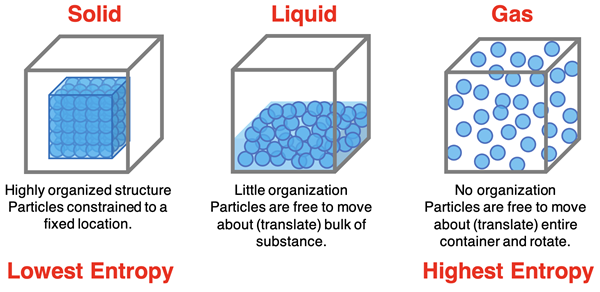

Entropy in Different States of Matter

Entropy increases when matter changes from more ordered states to less ordered states.

Typical order of entropy:

Solid < Liquid < Gas

This means gases have the highest entropy because their molecules move freely and randomly.

5. The Second Law of Thermodynamics

The Second Law of Thermodynamics states that the total entropy of an isolated system never decreases over time; it increases in every real (irreversible) process.

This law explains the natural direction of processes in the universe.

In simpler terms:

Natural processes tend to move toward greater disorder.

Examples of the Second Law

Many everyday phenomena illustrate the second law.

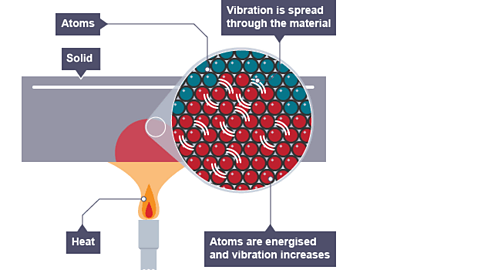

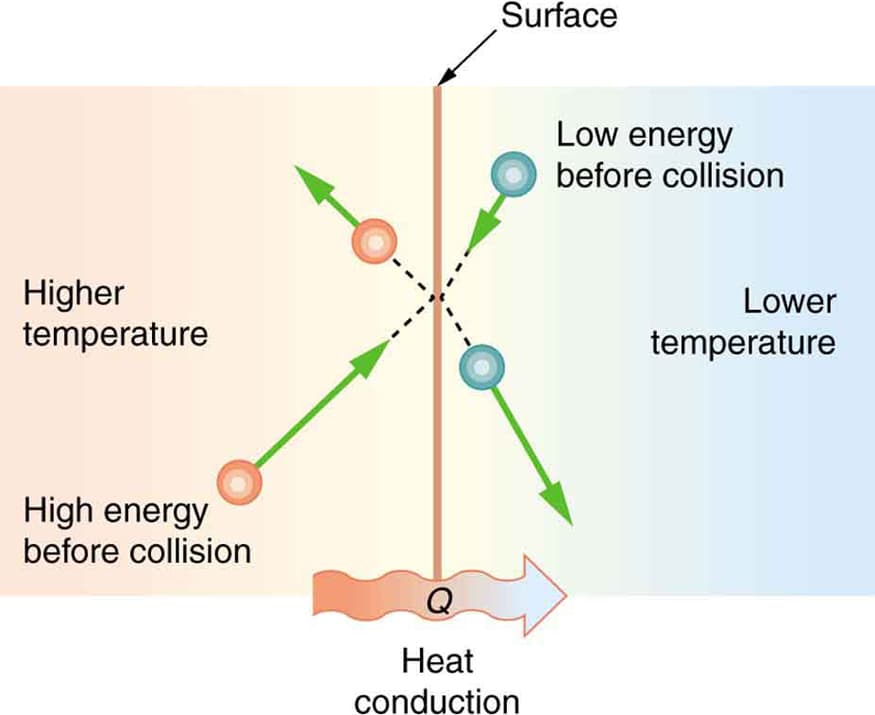

Heat Transfer

Heat flows naturally from hot objects to cold objects.

It does not spontaneously flow in the opposite direction.
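The direction of heat flow can be checked with the entropy balance of two bodies. The sketch below assumes both bodies are reservoirs large enough that their temperatures stay constant, and applies ΔS = Q/T to each; the function name and the numbers (1000 J, 400 K, 300 K) are illustrative assumptions.

```python
def heat_flow_entropy_change(q: float, t_hot: float, t_cold: float) -> float:
    """Total entropy change in J/K when heat q (J) leaves the hot reservoir
    and enters the cold one. A negative q means heat flows from cold to hot."""
    if t_hot <= 0 or t_cold <= 0:
        raise ValueError("absolute temperatures must be positive")
    ds_hot = -q / t_hot    # the hot reservoir loses heat q
    ds_cold = q / t_cold   # the cold reservoir gains heat q
    return ds_hot + ds_cold


# Illustrative numbers (assumed): 1000 J between reservoirs at 400 K and 300 K.
forward = heat_flow_entropy_change(1000.0, t_hot=400.0, t_cold=300.0)
reverse = heat_flow_entropy_change(-1000.0, t_hot=400.0, t_cold=300.0)

print(f"hot -> cold: total delta S = {forward:+.3f} J/K")
print(f"cold -> hot: total delta S = {reverse:+.3f} J/K")
```

Heat flowing from hot to cold gives a positive total entropy change, while the reverse flow would make it negative, which the second law forbids for an isolated pair of bodies.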

Mixing of Gases

When two gases mix, they do not spontaneously separate again.

The mixing process increases entropy.
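For ideal gases, the entropy increase on mixing has a standard closed form, ΔS_mix = -R Σ n_i ln x_i, where x_i is the mole fraction of gas i. The sketch below assumes ideal behavior and that the gases start at the same temperature and pressure; the function name `entropy_of_mixing` is a label chosen here.

```python
import math

R = 8.314462618  # molar gas constant in J/(mol*K)


def entropy_of_mixing(moles: list[float]) -> float:
    """Return the ideal entropy of mixing in J/K for the given amounts (mol).

    Assumes ideal gases initially at the same temperature and pressure.
    """
    n_total = sum(moles)
    if n_total <= 0:
        raise ValueError("total amount of gas must be positive")
    # Components with zero amount contribute nothing to the sum.
    return -R * sum(n * math.log(n / n_total) for n in moles if n > 0)


# Illustrative case (assumed): 1 mol of one gas mixed with 1 mol of another.
ds_mix = entropy_of_mixing([1.0, 1.0])
print(f"delta S of mixing: {ds_mix:.3f} J/K")
```

Every mole fraction is below 1, so each logarithm is negative and the result is always positive. Spontaneous separation would require the same amount of entropy to disappear.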

Ice Melting

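Ice melts at room temperature because, above 0 °C, the entropy gained by the water as it becomes liquid outweighs the entropy lost by the surroundings that supply the heat, so the total entropy increases.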

6. Entropy and Spontaneity

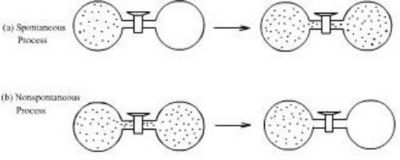

Entropy plays a major role in determining whether a process occurs spontaneously.

A spontaneous process is one that occurs naturally without external intervention.

Examples include:

- Gas expansion

- Dissolution of salt in water

- Heat transfer from hot to cold bodies

Processes that increase entropy tend to occur spontaneously.

However, the entropy change of the system alone does not fully determine spontaneity. The enthalpy change also plays a role, because the heat released or absorbed changes the entropy of the surroundings.

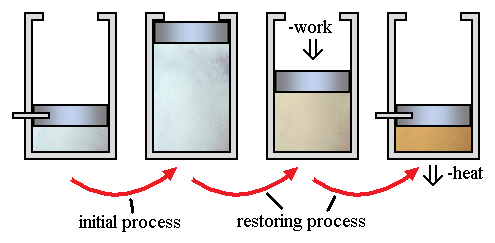

7. Entropy Changes in Physical Processes

Entropy changes occur during phase transitions.

Melting

When a solid melts into a liquid, entropy increases because particles gain freedom of movement.

Example:

Ice melting into water.

Vaporization

When a liquid becomes a gas, entropy increases significantly because the molecules move freely through a much larger volume.

Example:

Water boiling into steam.

Freezing

When a liquid freezes into a solid, entropy decreases because particles become more ordered.

Condensation

When a gas condenses into a liquid, entropy decreases because the molecules become confined to a much smaller volume.
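The four transitions above can be compared numerically. At the equilibrium transition temperature the heat is exchanged reversibly, so ΔS = ΔH / T. The sketch below uses approximate textbook values for water at 1 atm (about 6.01 kJ/mol for fusion at 273.15 K and 40.65 kJ/mol for vaporization at 373.15 K); these numbers and the function name are assumptions added here, not data from the text.

```python
def transition_entropy(delta_h: float, temperature: float) -> float:
    """Return delta S = delta H / T in J/(mol*K) for a phase transition
    taking place at its equilibrium temperature (K)."""
    if temperature <= 0:
        raise ValueError("absolute temperature must be positive")
    return delta_h / temperature


# Approximate textbook values for water at 1 atm (assumed).
DH_FUSION = 6010.0         # J/mol, at 273.15 K
DH_VAPORIZATION = 40650.0  # J/mol, at 373.15 K

ds_melting = transition_entropy(DH_FUSION, 273.15)
ds_vaporization = transition_entropy(DH_VAPORIZATION, 373.15)
# Freezing and condensation release the same heat, so the sign flips.
ds_freezing = transition_entropy(-DH_FUSION, 273.15)
ds_condensation = transition_entropy(-DH_VAPORIZATION, 373.15)

for name, value in [
    ("melting", ds_melting),
    ("vaporization", ds_vaporization),
    ("freezing", ds_freezing),
    ("condensation", ds_condensation),
]:
    print(f"{name:>12}: {value:+8.2f} J/(mol*K)")
```

With these values, vaporization changes the entropy roughly five times as much as melting, consistent with the much greater freedom of molecules in the gas phase.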

8. Entropy Changes in Chemical Reactions

Entropy also changes during chemical reactions.

Reactions that produce more gas molecules usually increase entropy.

Examples:

- Decomposition reactions producing gases

- Reactions that increase molecular randomness

Reactions that form solid products from gaseous or dissolved reactants generally decrease the entropy of the system.

9. Standard Entropy

Standard entropy is the entropy of a substance measured under standard conditions, usually reported per mole in J/(mol·K).

Standard conditions typically include:

- Temperature = 298 K

- Pressure = 1 bar (older tables use 1 atm)

Standard entropy values allow scientists to calculate entropy changes for chemical reactions.
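The calculation is a weighted difference: the sum of the standard entropies of the products minus that of the reactants, each multiplied by its stoichiometric coefficient. The sketch below applies it to ammonia synthesis, N2 + 3 H2 → 2 NH3, using approximate textbook values at 298 K; the values, the reaction, and the function name are illustrative assumptions and should be checked against a data table before use.

```python
# Approximate standard molar entropies at 298 K in J/(mol*K) (assumed textbook values).
STANDARD_ENTROPY = {
    "N2(g)": 191.6,
    "H2(g)": 130.7,
    "NH3(g)": 192.8,
}


def reaction_entropy(reactants: dict[str, int], products: dict[str, int]) -> float:
    """Return the standard reaction entropy in J/(mol*K).

    Each argument maps a species name to its stoichiometric coefficient.
    """
    def side_total(side: dict[str, int]) -> float:
        return sum(coeff * STANDARD_ENTROPY[species] for species, coeff in side.items())

    return side_total(products) - side_total(reactants)


# N2(g) + 3 H2(g) -> 2 NH3(g)
ds_ammonia = reaction_entropy(
    reactants={"N2(g)": 1, "H2(g)": 3},
    products={"NH3(g)": 2},
)
print(f"Standard reaction entropy: {ds_ammonia:.1f} J/(mol*K)")
```

The result is negative because four moles of gas become two, in line with the rule that reducing the number of gas molecules usually lowers entropy.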

10. Gibbs Free Energy and Entropy

Entropy works together with enthalpy to determine reaction spontaneity.

The relationship is given by the Gibbs Free Energy equation.

\Delta G = \Delta H - T\Delta S

Where:

ΔG = change in Gibbs free energy

ΔH = enthalpy change

T = absolute temperature (Kelvin)

ΔS = entropy change

Interpretation of Gibbs Free Energy

At constant temperature and pressure:

If ΔG < 0 → reaction is spontaneous

If ΔG > 0 → reaction is non-spontaneous

If ΔG = 0 → system is in equilibrium

Entropy contributes significantly to determining the value of Gibbs free energy.
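The sign rules can be tried out on the melting of ice. The sketch below assumes approximate values for water (ΔH ≈ 6010 J/mol, ΔS ≈ 22.0 J/(mol·K)) and treats them as independent of temperature, which is only an approximation; the function names are chosen here for illustration.

```python
def gibbs_free_energy(delta_h: float, temperature: float, delta_s: float) -> float:
    """Return delta G = delta H - T * delta S in J/mol (T in kelvin)."""
    return delta_h - temperature * delta_s


def classify(delta_g: float, tolerance: float = 1e-9) -> str:
    """Map the sign of delta G to the interpretation given above."""
    if abs(delta_g) <= tolerance:
        return "equilibrium"
    return "spontaneous" if delta_g < 0 else "non-spontaneous"


# Approximate values for melting ice (assumed, treated as temperature independent).
DH_MELT = 6010.0  # J/mol
DS_MELT = 22.0    # J/(mol*K)

dg_cold = gibbs_free_energy(DH_MELT, 263.15, DS_MELT)  # -10 degrees Celsius
dg_warm = gibbs_free_energy(DH_MELT, 298.15, DS_MELT)  # +25 degrees Celsius
crossover_temperature = DH_MELT / DS_MELT              # where delta G = 0

print(f"263.15 K: delta G = {dg_cold:+.1f} J/mol ({classify(dg_cold)})")
print(f"298.15 K: delta G = {dg_warm:+.1f} J/mol ({classify(dg_warm)})")
print(f"delta G changes sign near {crossover_temperature:.2f} K")
```

Because ΔH and ΔS are both positive for melting, the TΔS term wins only above the crossover temperature, which lands close to the normal melting point of ice.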

11. Entropy in the Universe

If the universe is treated as an isolated system, the second law of thermodynamics applies to it as a whole.

The total entropy of the universe continually increases.

This principle has major implications in cosmology and physics.

Over long time scales, systems tend to move toward thermodynamic equilibrium, where entropy reaches its maximum.

12. Applications of Entropy

Entropy has many applications across different scientific fields.

Chemical Reactions

Chemists use entropy to predict whether reactions occur spontaneously.

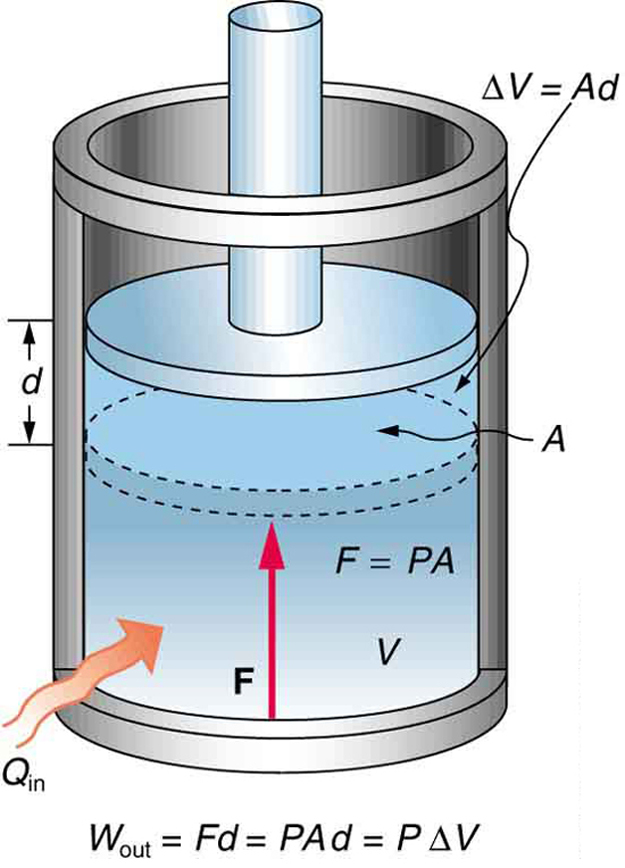

Engineering and Energy Systems

Entropy analysis helps improve efficiency in:

- Heat engines

- Power plants

- Refrigeration systems

Biology

Biological systems maintain low internal entropy by exchanging energy and matter with the environment, which increases the entropy of their surroundings.

Examples include:

- Metabolism

- Cellular processes

Information Theory

Entropy is used in information theory to measure the uncertainty, or average information content, of a data source.

It plays an important role in computer science, cryptography, and data compression.
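In information theory the usual measure is Shannon entropy, H = -Σ p_i log2 p_i, in bits per symbol. The sketch below estimates it from the symbol frequencies of a string; treating observed frequencies as probabilities is an assumption made for illustration, and the function name is chosen here.

```python
import math
from collections import Counter


def shannon_entropy(data: str) -> float:
    """Return the Shannon entropy of the symbols in data, in bits per symbol.

    Probabilities are estimated from the observed symbol frequencies.
    """
    if not data:
        return 0.0
    total = len(data)
    counts = Counter(data)
    return sum(-(c / total) * math.log2(c / total) for c in counts.values())


for sample in ["aaaa", "abab", "abcd"]:
    print(f"{sample!r}: {shannon_entropy(sample):.2f} bits per symbol")
```

A string of one repeated symbol carries no uncertainty, while four equally frequent symbols need two bits each. This is the sense in which entropy sets a lower bound on how far data can be compressed.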

13. Importance of Entropy

Entropy provides deep insight into the direction of natural processes. It explains why energy transformations occur in a particular way and why certain processes cannot be reversed without external energy input.

The concept also reveals the probabilistic nature of molecular motion and helps bridge the gap between microscopic molecular behavior and macroscopic thermodynamic observations.

Entropy is one of the central ideas connecting physics, chemistry, biology, and information science.

Conclusion

Entropy is a fundamental thermodynamic property that measures the level of disorder or randomness within a system. It plays a key role in understanding how energy is distributed and how physical and chemical processes occur.

The concept of entropy is closely linked to the Second Law of Thermodynamics, which states that the total entropy of an isolated system never decreases over time. This principle explains why natural processes such as heat transfer, gas expansion, and mixing occur spontaneously.

Entropy changes occur during phase transitions, chemical reactions, and energy transformations. By combining entropy with enthalpy through the Gibbs free energy equation, scientists can predict whether a reaction will occur naturally.

Beyond thermodynamics, entropy has broad applications in fields such as engineering, biology, cosmology, and information theory. Its importance extends far beyond chemistry, making it one of the most powerful and universal concepts in science.

Understanding entropy provides insight into the fundamental laws governing the universe and helps explain the natural tendency of systems to evolve toward greater disorder and energy dispersal.
