1. Introduction to Eigenvalues and Eigenvectors

Eigenvalues and eigenvectors are fundamental concepts in linear algebra and play a crucial role in many areas of mathematics, physics, engineering, and computer science. They help describe how linear transformations affect vectors in space.

When a matrix transforms a vector, the resulting vector usually changes in both direction and magnitude. However, some special non-zero vectors stay on the same line through the origin: the transformation only scales them (and flips them if the scaling factor is negative). These vectors are called eigenvectors, and the factors by which they are scaled are called eigenvalues.

The word eigen comes from German, meaning “own” or “characteristic.” Therefore, eigenvalues and eigenvectors represent the characteristic properties of a matrix transformation.

In many scientific fields, eigenvalues and eigenvectors help analyze systems, identify patterns in data, and simplify complex mathematical problems. They are widely used in areas such as:

- Quantum mechanics

- Machine learning

- Computer graphics

- Structural engineering

- Data analysis

- Control systems

- Image processing

Understanding eigenvalues and eigenvectors allows mathematicians and scientists to study the behavior of complex systems more effectively.

2. Linear Transformations and Matrices

Before understanding eigenvalues and eigenvectors, it is important to understand linear transformations.

A linear transformation is a mathematical operation that transforms vectors from one vector space to another while preserving the operations of addition and scalar multiplication.

In linear algebra, linear transformations are often represented using matrices.

For example, if matrix A acts on vector x, the transformation is written as:

Ax

Where:

A = transformation matrix

x = vector

This multiplication produces a new vector.

Most vectors change both direction and magnitude after transformation.

However, some special vectors only change magnitude while keeping the same direction.

These vectors are eigenvectors.

3. Definition of Eigenvalues and Eigenvectors

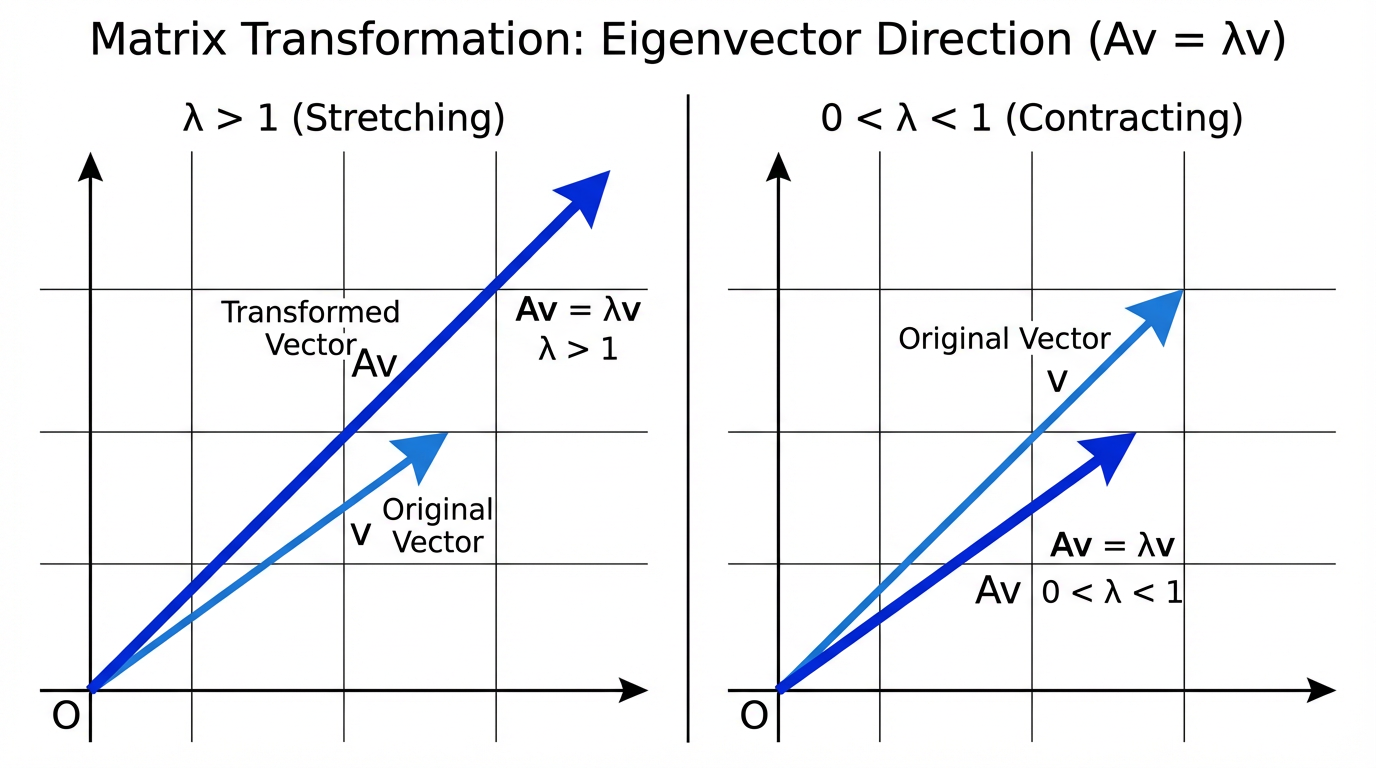

An eigenvector of a matrix is a non-zero vector that stays on the same line through the origin after the matrix transformation. It may be stretched, shrunk, or flipped, but it is not rotated off that line.

An eigenvalue is the scalar value that represents how much the eigenvector is stretched or compressed.

Mathematically:

A v = λ v

Where:

A = square matrix

v = eigenvector

λ = eigenvalue

This equation means that multiplying matrix A by vector v gives the same vector scaled by λ.

For a real eigenvalue λ:

λ > 1 → vector stretches

λ = 1 → vector is unchanged

0 < λ < 1 → vector shrinks

λ < 0 → vector reverses direction (and is scaled by |λ|)

λ = 0 → vector collapses to zero
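The defining equation A v = λ v can be checked directly for any candidate pair. The following is a minimal sketch in plain Python; the helper names `mat_vec` and `is_eigenpair` are illustrative, and the matrix is the 2×2 example used in Section 6.

```python
def mat_vec(A, v):
    """Multiply a matrix (list of rows) by a vector (list of numbers)."""
    return [sum(a * x for a, x in zip(row, v)) for row in A]


def is_eigenpair(A, v, lam, tol=1e-9):
    """Return True if v is non-zero and A v equals lam * v within tol."""
    if all(abs(x) <= tol for x in v):
        return False  # the zero vector is never an eigenvector
    Av = mat_vec(A, v)
    return all(abs(av - lam * x) <= tol for av, x in zip(Av, v))


A = [[2, 1],
     [1, 2]]

print(is_eigenpair(A, [1, 1], 3))    # True: A v = [3, 3] = 3 v
print(is_eigenpair(A, [1, -1], 1))   # True: A v = [1, -1] = 1 v
print(is_eigenpair(A, [1, 0], 2))    # False: A v = [2, 1] is not a multiple of v
```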

4. Understanding the Concept Geometrically

Eigenvectors represent directions that remain unchanged during transformation.

When a transformation matrix acts on space:

- Most vectors rotate or change direction

- Eigenvectors only scale in magnitude

Imagine stretching a rubber sheet.

Some directions stretch directly without rotating.

These directions represent eigenvectors.

The stretching factor is the eigenvalue.

5. Finding Eigenvalues

To calculate eigenvalues of a matrix, we start from the equation:

A v = λ v

Rewrite it as:

A v − λ v = 0

Factor out v:

(A − λI)v = 0

Where:

I = identity matrix

For a non-zero solution v to exist, the matrix A − λI must be singular, so its determinant must be zero:

det(A − λI) = 0

This equation is called the characteristic equation.

Solving it gives eigenvalues.

6. Example of Eigenvalue Calculation

Consider matrix:

A =

[2 1]

[1 2]

Step 1: Compute A − λI

A − λI =

[2−λ 1]

[1 2−λ]

Step 2: Find determinant

|2−λ 1|

|1 2−λ|

= (2−λ)(2−λ) − (1×1)

= (2−λ)² − 1

Expand:

= λ² − 4λ + 3

Step 3: Solve

λ² − 4λ + 3 = 0

(λ − 1)(λ − 3) = 0

Eigenvalues:

λ₁ = 1

λ₂ = 3
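For any 2×2 matrix, the characteristic equation reduces to λ² − (trace)λ + (determinant) = 0, so the steps above can be sketched with the quadratic formula. This is an illustrative helper (the name `eigenvalues_2x2` is an assumption, not a library function), and it uses `cmath` so that complex eigenvalues are also handled.

```python
import cmath


def eigenvalues_2x2(A):
    """Solve lambda^2 - trace*lambda + det = 0 for a 2x2 matrix A."""
    (a, b), (c, d) = A
    trace = a + d
    det = a * d - b * c
    root = cmath.sqrt(trace * trace - 4 * det)  # complex-safe square root
    return (trace - root) / 2, (trace + root) / 2


lam1, lam2 = eigenvalues_2x2([[2, 1], [1, 2]])
print(lam1, lam2)  # (1+0j) (3+0j)
```

The results are returned as complex numbers with zero imaginary part, matching λ₁ = 1 and λ₂ = 3.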

7. Finding Eigenvectors

Once eigenvalues are found, eigenvectors can be calculated.

Use equation:

(A − λI)v = 0

Example:

For eigenvalue λ = 3

A − 3I =

[-1 1]

[1 -1]

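Solve system:

−x + y = 0

x − y = 0

Both equations give y = x, so every non-zero multiple of the following vector is an eigenvector for λ = 3:

v = [1 1]ᵀ

The same steps for λ = 1 give x + y = 0, so an eigenvector is [1 −1]ᵀ.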
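In practice, eigenvectors are usually computed numerically. The sketch below assumes NumPy is available and uses `np.linalg.eigh`, which is intended for symmetric matrices such as this one. Note that NumPy returns unit-length eigenvectors, so the result for λ = 3 is a scaled version of the vector found by hand, possibly with the opposite sign.

```python
import numpy as np

A = np.array([[2.0, 1.0],
              [1.0, 2.0]])

# eigh is meant for symmetric matrices; eigenvalues come back in ascending order
eigenvalues, eigenvectors = np.linalg.eigh(A)

for lam, v in zip(eigenvalues, eigenvectors.T):  # columns of the result are eigenvectors
    # Check the defining equation A v = lambda v for each pair
    print(lam, v, np.allclose(A @ v, lam * v))
```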

8. Eigenvalues of Special Matrices

For certain matrices, the eigenvalues can be read off directly.

Diagonal Matrix

Eigenvalues are diagonal elements.

Example:

[3 0 0]

[0 5 0]

[0 0 7]

Eigenvalues:

3, 5, 7

Identity Matrix

All eigenvalues = 1

Zero Matrix

All eigenvalues = 0

Triangular Matrix

Eigenvalues are diagonal elements.
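These special cases are easy to confirm numerically. The sketch below assumes NumPy; the triangular matrix `T` uses made-up off-diagonal values purely for illustration.

```python
import numpy as np

# Upper triangular matrix (illustrative values above the diagonal)
T = np.array([[3.0, 2.0, 1.0],
              [0.0, 5.0, 4.0],
              [0.0, 0.0, 7.0]])

computed = np.sort(np.linalg.eigvals(T))
diagonal = np.sort(np.diag(T))
print(np.allclose(computed, diagonal))                  # eigenvalues match the diagonal

print(np.allclose(np.linalg.eigvals(np.eye(3)), 1.0))           # identity: all ones
print(np.allclose(np.linalg.eigvals(np.zeros((3, 3))), 0.0))    # zero matrix: all zeros
```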

9. Properties of Eigenvalues and Eigenvectors

Property 1

The sum of the eigenvalues (counted with multiplicity) equals the trace of the matrix.

Trace = sum of diagonal elements.

Property 2

The product of the eigenvalues (counted with multiplicity) equals the determinant of the matrix.

Property 3

Eigenvectors corresponding to different eigenvalues are linearly independent.

Property 4

Scaling a matrix scales its eigenvalues.

If the matrix is multiplied by a scalar k, each eigenvalue is also multiplied by k, and the eigenvectors stay the same.

Property 5

A matrix and its transpose have the same eigenvalues, although their eigenvectors are generally different.
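The five properties can be spot-checked on a small example. This is a sketch assuming NumPy; the matrix and the scalar `k` are arbitrary illustrative choices, and a numerical check on one matrix is of course not a proof.

```python
import numpy as np

A = np.array([[4.0, 1.0],
              [2.0, 3.0]])   # illustrative matrix with eigenvalues 2 and 5
k = 3.0

eigenvalues, V = np.linalg.eig(A)
eig = np.sort(eigenvalues)

print(np.isclose(eig.sum(), np.trace(A)))                        # Property 1: sum = trace
print(np.isclose(eig.prod(), np.linalg.det(A)))                  # Property 2: product = determinant
print(np.linalg.matrix_rank(V) == 2)                             # Property 3: independent eigenvectors
print(np.allclose(np.sort(np.linalg.eigvals(k * A)), k * eig))   # Property 4: scaling
print(np.allclose(np.sort(np.linalg.eigvals(A.T)), eig))         # Property 5: transpose
```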

10. Characteristic Polynomial

The expression:

det(A − λI)

is a polynomial in λ called the characteristic polynomial. Setting it equal to zero gives the characteristic equation.

Example (for the 2×2 matrix in Section 6):

λ² − 4λ + 3

Roots of this polynomial are eigenvalues.
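As a sketch, NumPy can produce these coefficients and roots for the matrix from Section 6. `np.poly` applied to a square matrix returns the coefficients of det(λI − A), highest power first; for a 2×2 matrix this is the same polynomial as det(A − λI), while for odd-sized matrices the two differ by an overall sign (the roots are identical either way).

```python
import numpy as np

A = np.array([[2.0, 1.0],
              [1.0, 2.0]])

coeffs = np.poly(A)        # characteristic polynomial coefficients, highest power first
roots = np.roots(coeffs)   # the roots of the polynomial are the eigenvalues

print(np.round(coeffs, 6))   # coefficients of lambda^2 - 4*lambda + 3
print(np.sort(roots))
```

Forming the polynomial and then finding its roots is fine for small examples, but for larger matrices routines such as `np.linalg.eigvals` are generally preferred because polynomial root-finding can be numerically sensitive.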

11. Diagonalization of Matrices

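An n × n matrix that has n linearly independent eigenvectors can be expressed in diagonal form:

A = PDP⁻¹

Where:

D = diagonal matrix of eigenvalues

P = matrix whose columns are the corresponding eigenvectors, in the same order

Diagonalization simplifies calculations such as matrix powers, because Aᵏ = PDᵏP⁻¹ and powers of a diagonal matrix only require powers of its diagonal entries.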
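A minimal sketch of the factorization, assuming NumPy and using the matrix from Section 6: it rebuilds A from P and D, then computes a matrix power through the diagonal form and compares it with NumPy's own `matrix_power`.

```python
import numpy as np

A = np.array([[2.0, 1.0],
              [1.0, 2.0]])

eigenvalues, P = np.linalg.eig(A)   # columns of P are eigenvectors
D = np.diag(eigenvalues)
P_inv = np.linalg.inv(P)

# Rebuild A from its factors
print(np.allclose(P @ D @ P_inv, A))

# A^5 via the diagonal form: only the diagonal entries are raised to the power
A5 = P @ np.diag(eigenvalues ** 5) @ P_inv
print(np.allclose(A5, np.linalg.matrix_power(A, 5)))
```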

12. Applications in Physics

Eigenvalues are widely used in physics.

Quantum Mechanics

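The allowed energy levels of a quantum system are the eigenvalues of its Hamiltonian operator.

Example:

The time-independent Schrödinger equation, Hψ = Eψ, is an eigenvalue equation in which the energy E is the eigenvalue and the wavefunction ψ is the eigenvector.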

Vibrations and Oscillations

Eigenvalues of a structure's mass and stiffness model determine its natural frequencies, and the eigenvectors describe the corresponding vibration modes.

Used in:

- bridges

- buildings

- mechanical systems

13. Applications in Engineering

Eigenvalues help analyze:

- stability of systems

- mechanical vibrations

- electrical circuits

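In control systems, the eigenvalues of the system matrix determine whether a system is stable. For a continuous-time linear system x′ = Ax, the system is asymptotically stable when every eigenvalue of A has a negative real part.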
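As a sketch of such a stability check, assume a continuous-time linear system x′ = Ax, for which the standard criterion is that every eigenvalue of A has a strictly negative real part. The function name and both example matrices below are illustrative assumptions.

```python
import numpy as np


def is_asymptotically_stable(A):
    """True if every eigenvalue of A has a strictly negative real part."""
    return bool(np.all(np.linalg.eigvals(A).real < 0))


damped = np.array([[0.0, 1.0],
                   [-2.0, -3.0]])    # eigenvalues -1 and -2
unstable = np.array([[0.0, 1.0],
                     [2.0, 1.0]])    # eigenvalues -1 and 2

print(is_asymptotically_stable(damped))     # True
print(is_asymptotically_stable(unstable))   # False
```

Discrete-time systems use a different test (eigenvalue magnitudes below 1), which this sketch does not cover.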

14. Applications in Machine Learning

Eigenvalues and eigenvectors are used in:

Principal Component Analysis (PCA)

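PCA reduces the dimensionality of data.

The eigenvectors of the data's covariance matrix give the principal directions.

Each eigenvalue is the variance of the data along its eigenvector, which measures the importance of that component.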
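The following is a minimal PCA sketch built directly on an eigendecomposition of the covariance matrix, assuming NumPy. The data set is synthetic (points scattered near the line y = 2x, generated with a fixed seed), and production code would more commonly rely on a dedicated library routine.

```python
import numpy as np

rng = np.random.default_rng(0)            # fixed seed so the run is repeatable
t = rng.normal(size=200)
X = np.column_stack([t, 2.0 * t + 0.1 * rng.normal(size=200)])  # points near y = 2x

X_centered = X - X.mean(axis=0)
cov = np.cov(X_centered, rowvar=False)    # 2x2 covariance matrix

eigenvalues, eigenvectors = np.linalg.eigh(cov)   # symmetric matrix, ascending order
order = np.argsort(eigenvalues)[::-1]             # largest variance first
eigenvalues, eigenvectors = eigenvalues[order], eigenvectors[:, order]

explained = eigenvalues / eigenvalues.sum()       # share of variance per component
first_direction = eigenvectors[:, 0]              # roughly parallel to [1, 2]
projected = X_centered @ eigenvectors[:, :1]      # data reduced to one dimension

print(explained)
print(first_direction)
```

Because nearly all of the variance lies along one direction, keeping only the first component loses very little information in this example.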

Face Recognition

The eigenfaces technique represents facial images using the leading eigenvectors of the covariance matrix of a set of face images.

15. Applications in Computer Graphics

Eigenvalues help in:

- geometric transformations

- animation

- image compression

These techniques are used to manipulate objects in 3D space.

16. Applications in Network Analysis

Eigenvectors help analyze networks.

Example:

Google's PageRank algorithm ranks web pages using the dominant eigenvector of a matrix built from the link structure of the web.
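The idea can be sketched with power iteration on a tiny, hypothetical three-page web. This is a simplified illustration assuming NumPy, not Google's actual implementation; the link matrix `M` and the damping factor of 0.85 are assumptions chosen for the example.

```python
import numpy as np

# Hypothetical 3-page web. Column j describes the outgoing links of page j
# and sums to 1 (page 0 -> pages 1 and 2; page 1 -> page 2; page 2 -> page 0).
M = np.array([[0.0, 0.0, 1.0],
              [0.5, 0.0, 0.0],
              [0.5, 1.0, 0.0]])
damping = 0.85
n = M.shape[0]
G = damping * M + (1.0 - damping) / n   # every column of G still sums to 1

rank = np.full(n, 1.0 / n)              # start from a uniform ranking
for _ in range(100):                    # power iteration
    rank = G @ rank
    rank /= rank.sum()

print(rank)
print(np.allclose(G @ rank, rank, atol=1e-6))   # rank is an eigenvector with eigenvalue 1
```

Repeated multiplication by `G` converges to the eigenvector for the largest eigenvalue (here 1), and its entries are the page scores.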

17. Eigenvalues in Differential Equations

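Eigenvalues help solve systems of linear differential equations. For a system x′ = Ax with a diagonalizable matrix A, each eigenvalue λ with eigenvector v contributes a solution term of the form e^(λt) v.

Examples:

- Population models

- Electrical circuits

- Heat transfer problems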
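As a sketch of this use, consider the linear system x′ = Ax with initial state x(0) = x₀. When A is diagonalizable, the solution is a sum of terms e^(λt) v over the eigenpairs. The function name, the matrix, and the initial state below are illustrative assumptions, and NumPy is assumed to be available.

```python
import numpy as np


def solve_linear_ode(A, x0, t):
    """Return x(t) for x' = A x, x(0) = x0, assuming A is diagonalizable."""
    eigenvalues, V = np.linalg.eig(A)
    c = np.linalg.solve(V, x0)                  # coordinates of x0 in the eigenvector basis
    return (V @ (c * np.exp(eigenvalues * t))).real


A = np.array([[2.0, 1.0],
              [1.0, 2.0]])
x0 = np.array([1.0, 0.0])

print(solve_linear_ode(A, x0, 0.0))   # recovers the initial state
print(solve_linear_ode(A, x0, 1.0))
```

For this matrix the exact solution is x(t) = ½e^(3t)[1 1]ᵀ + ½e^(t)[1 −1]ᵀ, which the numerical result should match.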

18. Eigenvalue Decomposition

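Eigenvalue decomposition expresses a diagonalizable matrix as:

A = VΛV⁻¹

Where:

Λ = diagonal matrix of eigenvalues

V = matrix whose columns are the corresponding eigenvectors

This is the same factorization as the diagonalization in Section 11, written with different symbols. It is used in many numerical algorithms.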

19. Singular Value Decomposition

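SVD is closely related to eigenvalues: the singular values of a matrix A are the square roots of the eigenvalues of AᵀA. Unlike eigenvalue decomposition, SVD exists for every matrix, including non-square ones.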

It is used in:

- recommendation systems

- image compression

- natural language processing
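The link between singular values and eigenvalues can be checked numerically: the singular values of A equal the square roots of the eigenvalues of AᵀA. The sketch below assumes NumPy and uses an arbitrary non-square matrix for illustration.

```python
import numpy as np

A = np.array([[3.0, 0.0],
              [4.0, 5.0],
              [0.0, 1.0]])   # illustrative 3x2 matrix; SVD does not require a square matrix

singular_values = np.linalg.svd(A, compute_uv=False)   # returned in descending order
eig_of_gram = np.linalg.eigvalsh(A.T @ A)[::-1]        # eigenvalues of A^T A, descending

print(singular_values)
print(np.sqrt(eig_of_gram))
print(np.allclose(singular_values, np.sqrt(eig_of_gram)))
```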

20. Importance of Eigenvalues and Eigenvectors

Eigenvalues and eigenvectors help simplify complex systems.

They allow:

- analysis of transformations

- dimensionality reduction

- stability analysis

- vibration analysis

- pattern recognition

They are among the most powerful tools in linear algebra.

Conclusion

Eigenvalues and eigenvectors are central concepts in linear algebra that describe the fundamental behavior of matrix transformations. They identify special directions in space that remain unchanged during transformation while only being scaled by a factor known as the eigenvalue.

These concepts provide deep insights into the structure of matrices and are essential for solving complex mathematical problems. Eigenvalues and eigenvectors are widely used in physics, engineering, computer science, and data science, enabling applications such as vibration analysis, machine learning, image processing, and network analysis.

By understanding eigenvalues and eigenvectors, researchers and engineers can simplify complicated systems, analyze stability, and uncover patterns in data. Their importance continues to grow as modern technology increasingly relies on advanced mathematical methods.