Introduction to Probability Distributions

Probability distributions are fundamental concepts in probability theory and statistics that describe how the values of a random variable are distributed. In simple terms, a probability distribution provides a mathematical description of the likelihood of different outcomes in an experiment or random process.

In many real-world situations, outcomes are uncertain. For example, when tossing a coin, rolling a die, or measuring rainfall in a city, the exact result cannot always be predicted with certainty. However, probability distributions allow us to understand the pattern of possible outcomes and assign probabilities to them.

A probability distribution tells us:

- What values a random variable can take

- How likely each value is to occur

For instance, when rolling a fair six-sided die, the probability of each number from 1 to 6 occurring is equal. The probability distribution of the die shows that each outcome has a probability of 1/6.

Probability distributions are widely used in many fields including mathematics, statistics, economics, engineering, physics, finance, biology, and machine learning. They help researchers model uncertainty, analyze data, and make predictions about future events.

Understanding probability distributions is essential for advanced statistical analysis, hypothesis testing, and decision-making under uncertainty.

Random Variables

Before studying probability distributions, it is important to understand the concept of random variables.

A random variable is a variable whose value is determined by the outcome of a random experiment.

For example:

- The number obtained when rolling a die

- The number of customers entering a store in an hour

- The amount of rainfall in a day

- The height of individuals in a population

Random variables can take different numerical values depending on the outcome of the experiment.

There are two main types of random variables:

- Discrete Random Variables

- Continuous Random Variables

These types lead to two major categories of probability distributions.

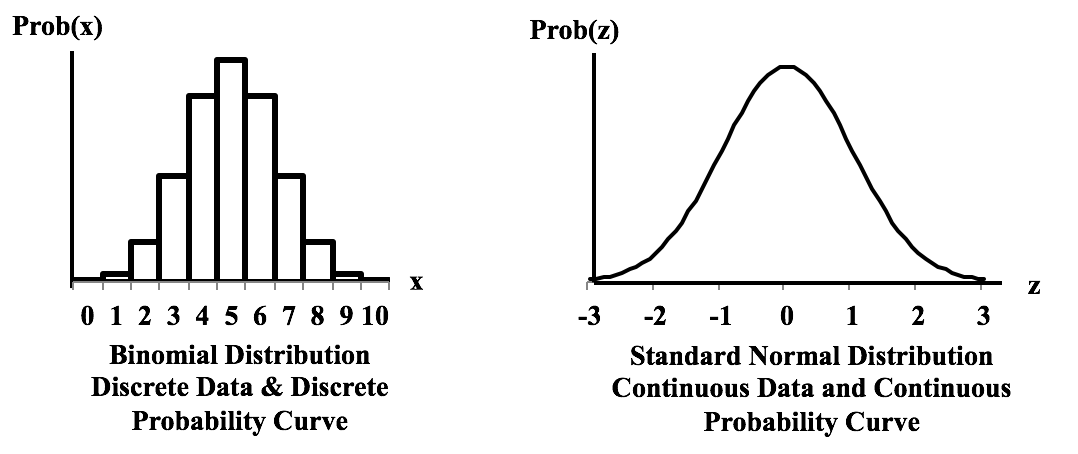

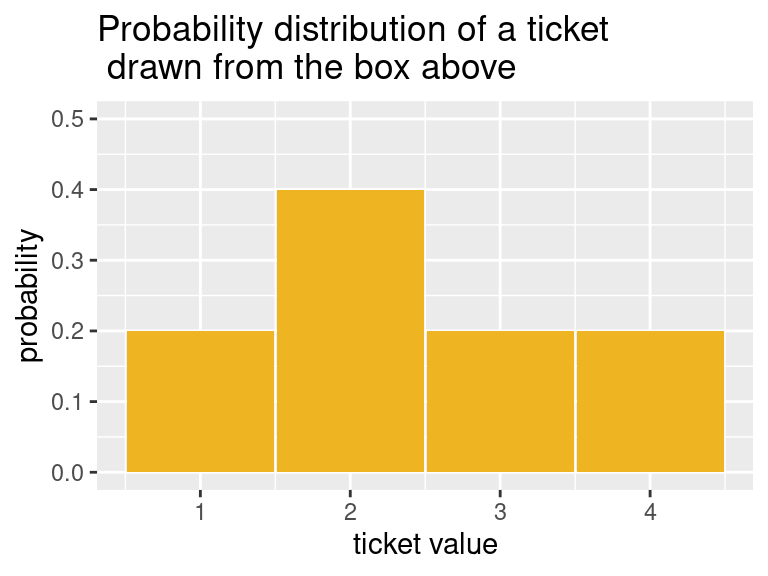

Discrete Probability Distributions

A discrete probability distribution describes probabilities for random variables that take countable values.

Examples of discrete random variables include:

- number of heads in coin tosses

- number of defective products in a batch

- number of students in a classroom

Discrete probability distributions use a probability mass function (PMF).

The PMF gives the probability that a random variable equals a particular value.

Example:

Suppose a fair coin is tossed twice. Possible outcomes are:

HH, HT, TH, TT

Let X represent the number of heads.

Possible values:

0, 1, 2

The probability distribution is:

P(X = 0) = 1/4

P(X = 1) = 2/4 = 1/2

P(X = 2) = 1/4

Together, these three probabilities form the probability distribution of X.

The probabilities must satisfy two conditions:

- Each probability is between 0 and 1.

- The sum of probabilities equals 1.
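The two-toss example can be checked by brute-force enumeration. The following is a minimal sketch; the variable names (`outcomes`, `counts`, `pmf`) are illustrative choices, not part of the original text. Exact fractions are used, so 2/4 appears in lowest terms as 1/2.

```python
from collections import Counter
from fractions import Fraction
from itertools import product

# Enumerate the four equally likely outcomes of two fair coin tosses.
outcomes = ["".join(tosses) for tosses in product("HT", repeat=2)]

# X = number of heads; count how many outcomes produce each value of X.
counts = Counter(outcome.count("H") for outcome in outcomes)

# Each outcome has probability 1/4, so P(X = k) = (matching outcomes) / 4.
pmf = {k: Fraction(n, len(outcomes)) for k, n in sorted(counts.items())}

for k, p in pmf.items():
    print(f"P(X = {k}) = {p}")

# Check the two conditions that every PMF must satisfy.
assert all(0 <= p <= 1 for p in pmf.values())
assert sum(pmf.values()) == 1
```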

Discrete probability distributions are often represented using bar charts.

Continuous Probability Distributions

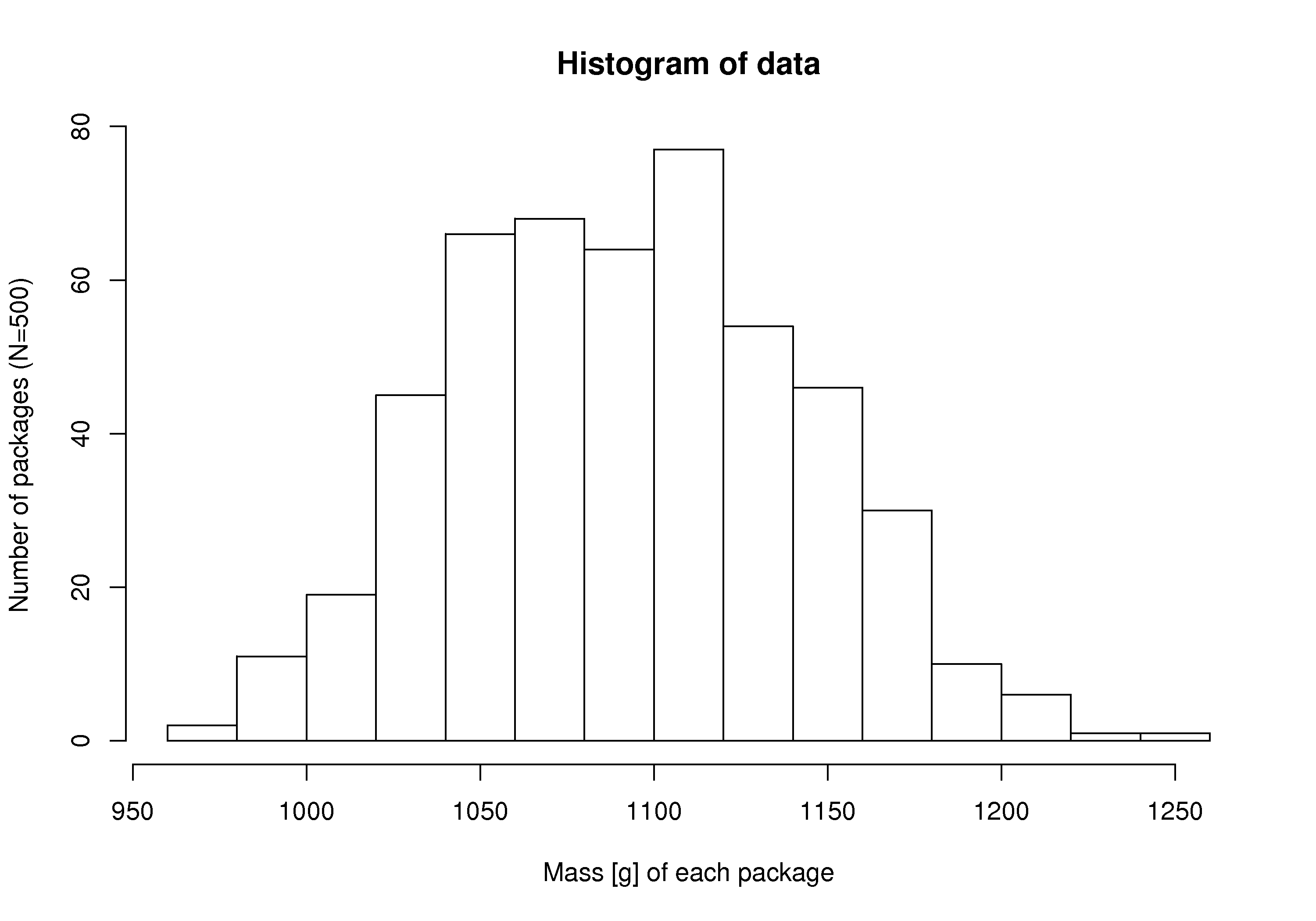

Continuous probability distributions describe random variables that can take any value within an interval, so their possible values cannot be listed one by one.

Examples include:

- height of people

- temperature

- time required to complete a task

- weight of objects

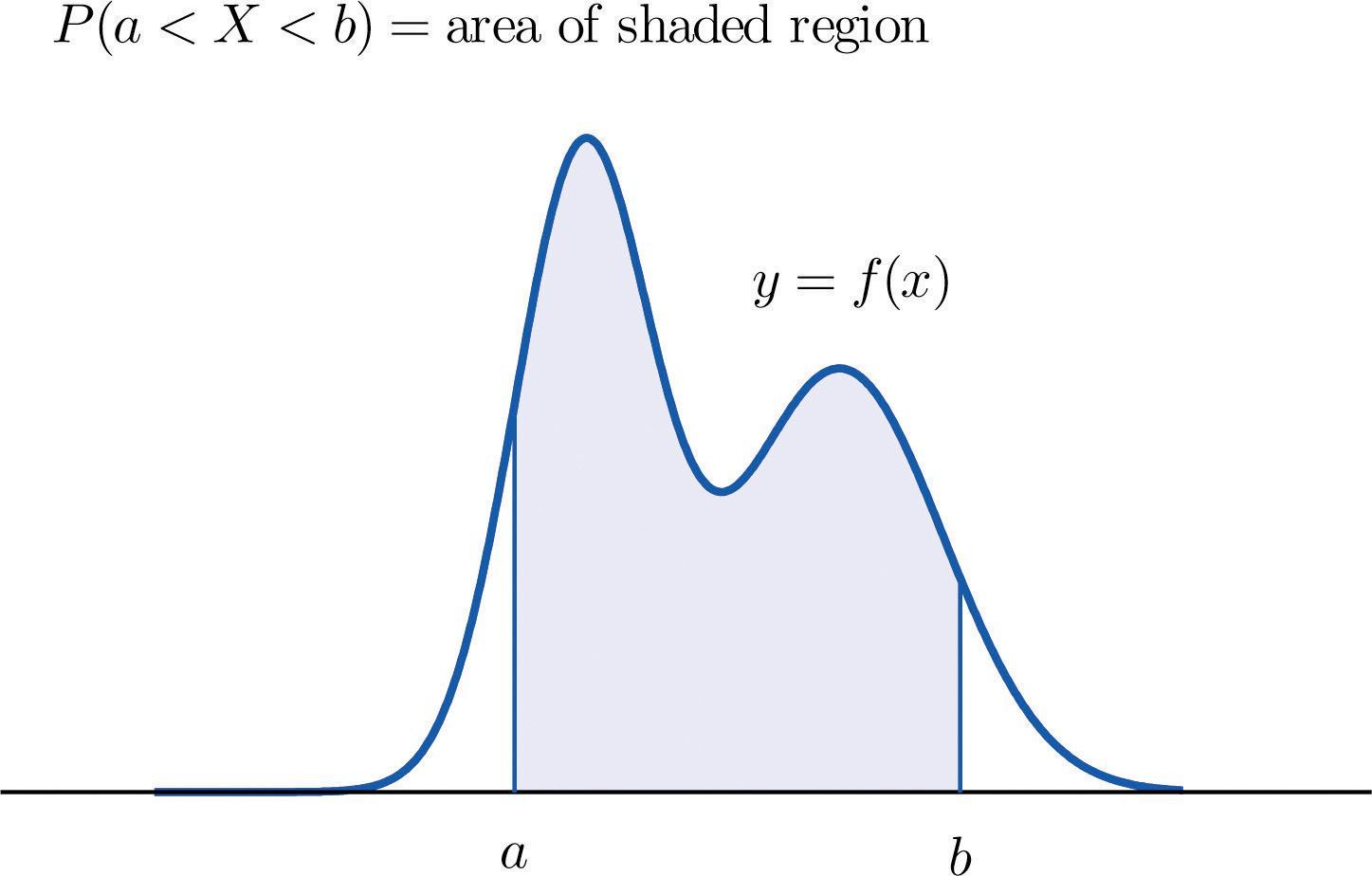

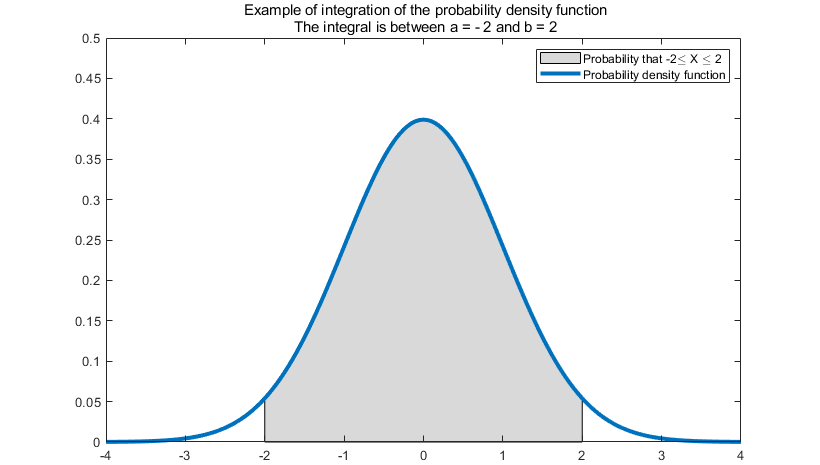

Unlike discrete distributions, continuous distributions use a probability density function (PDF).

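The probability that the variable falls within an interval is given by the area under the PDF curve over that interval. The probability of any single exact value is zero.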

For continuous distributions:

P(a ≤ X ≤ b) = area under the curve between a and b.

The total area under the curve equals 1.
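To make the "area under the curve" idea concrete, the sketch below approximates the area numerically. The density f(x) = 2x on [0, 1] is a hypothetical example chosen only because its areas are easy to verify by hand (the exact value of P(a ≤ X ≤ b) is b² − a²). The helper names `pdf` and `area` are likewise assumptions.

```python
def pdf(x: float) -> float:
    """Hypothetical density: f(x) = 2x on [0, 1], and 0 elsewhere."""
    return 2.0 * x if 0.0 <= x <= 1.0 else 0.0


def area(f, a: float, b: float, steps: int = 10_000) -> float:
    """Approximate the area under f between a and b with the midpoint rule."""
    width = (b - a) / steps
    return sum(f(a + (i + 0.5) * width) for i in range(steps)) * width


# P(0.25 <= X <= 0.75); the exact value is 0.75**2 - 0.25**2 = 0.5.
prob_interval = area(pdf, 0.25, 0.75)

# The total area over the whole range should be (very close to) 1.
total_area = area(pdf, 0.0, 1.0)

print(prob_interval, total_area)
```

The same `area` helper can be applied to any other density, although smoother or more sharply peaked curves may need more steps for a good approximation.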

Continuous probability distributions are represented using smooth curves rather than bars.

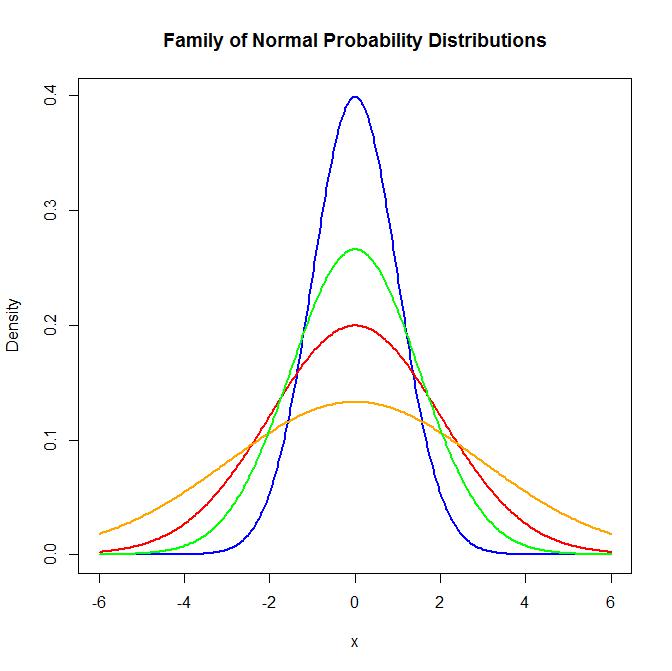

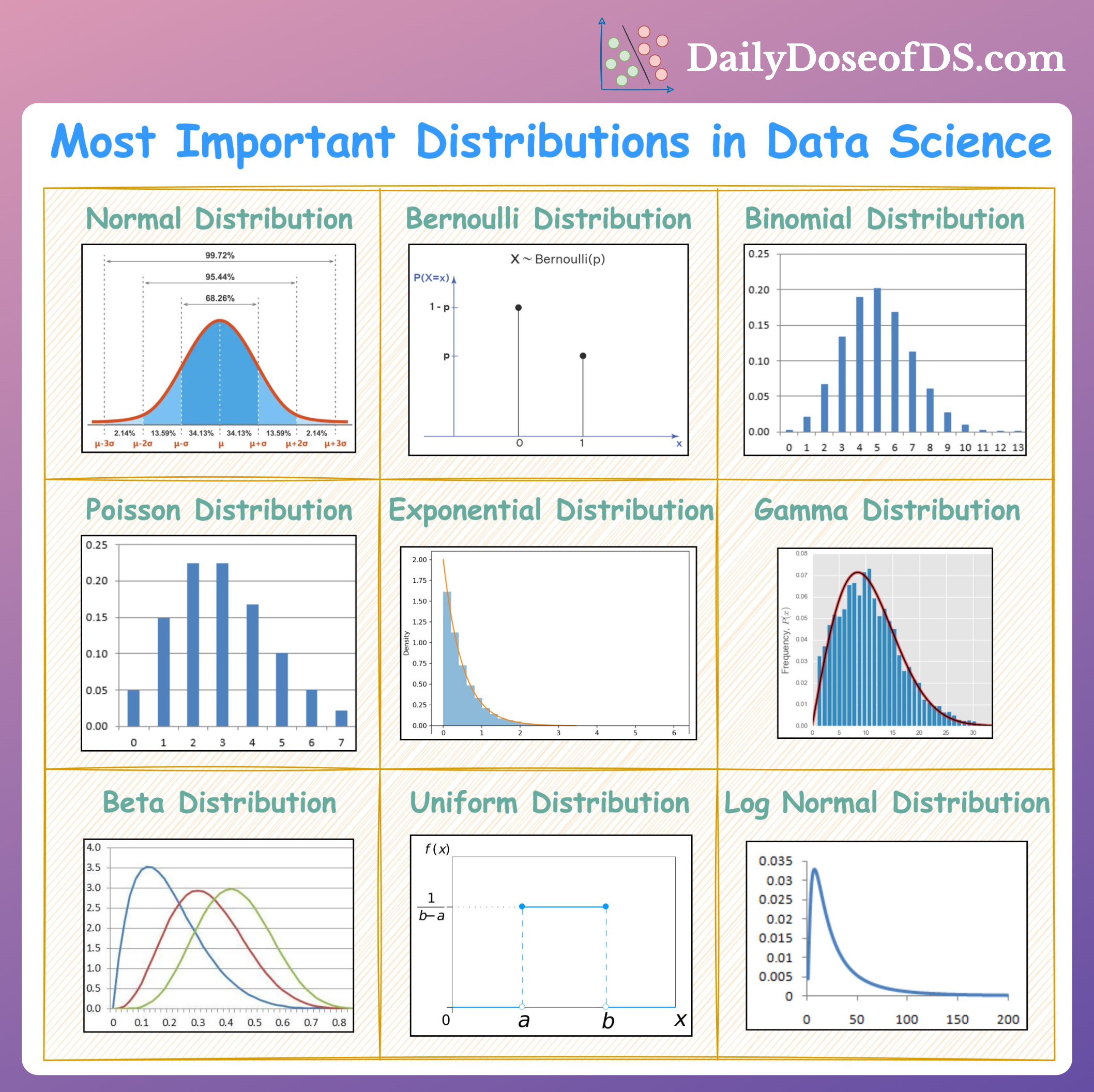

Normal Distribution

The normal distribution is one of the most important probability distributions in statistics.

It is also called the Gaussian distribution.

The normal distribution has the following characteristics:

- symmetric bell-shaped curve

- mean, median, and mode are equal

- data is concentrated around the mean

The probability density function of the normal distribution is:

f(x) = (1 / (σ√(2π))) e^(−(x − μ)² / (2σ²))

Where:

μ = mean

σ = standard deviation
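As a rough illustration, the density formula can be written directly in Python. The function name `normal_pdf` and the default parameters (μ = 0, σ = 1) are assumptions made for this sketch. The result is compared against `statistics.NormalDist` from the Python standard library as a sanity check.

```python
import math
from statistics import NormalDist


def normal_pdf(x: float, mu: float = 0.0, sigma: float = 1.0) -> float:
    """Evaluate the normal density at x for mean mu and standard deviation sigma."""
    coefficient = 1.0 / (sigma * math.sqrt(2.0 * math.pi))
    exponent = -((x - mu) ** 2) / (2.0 * sigma ** 2)
    return coefficient * math.exp(exponent)


# Density at the mean of the standard normal distribution: 1 / sqrt(2 * pi).
peak = normal_pdf(0.0)

# Cross-check against the standard library implementation.
reference = NormalDist(mu=0.0, sigma=1.0).pdf(0.0)

print(peak, reference)
```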

One important property of the normal distribution is the empirical rule.

According to this rule:

- about 68% of data lies within 1 standard deviation of the mean

- about 95% lies within 2 standard deviations

- about 99.7% lies within 3 standard deviations
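These percentages are rounded values. One way to reproduce them, sketched below, uses the identity P(|X − μ| ≤ kσ) = erf(k / √2), which holds for any normal distribution. The helper name `prob_within` is an assumption for illustration.

```python
import math


def prob_within(k: float) -> float:
    """P(|X - mu| <= k * sigma) for a normally distributed X."""
    return math.erf(k / math.sqrt(2.0))


for k in (1, 2, 3):
    print(f"within {k} standard deviation(s): {prob_within(k):.4f}")
```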

The normal distribution is a good approximation for many natural phenomena, such as heights, exam scores, and measurement errors.

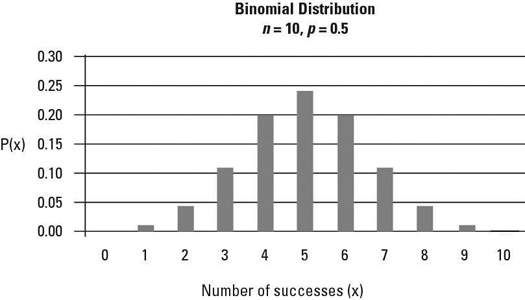

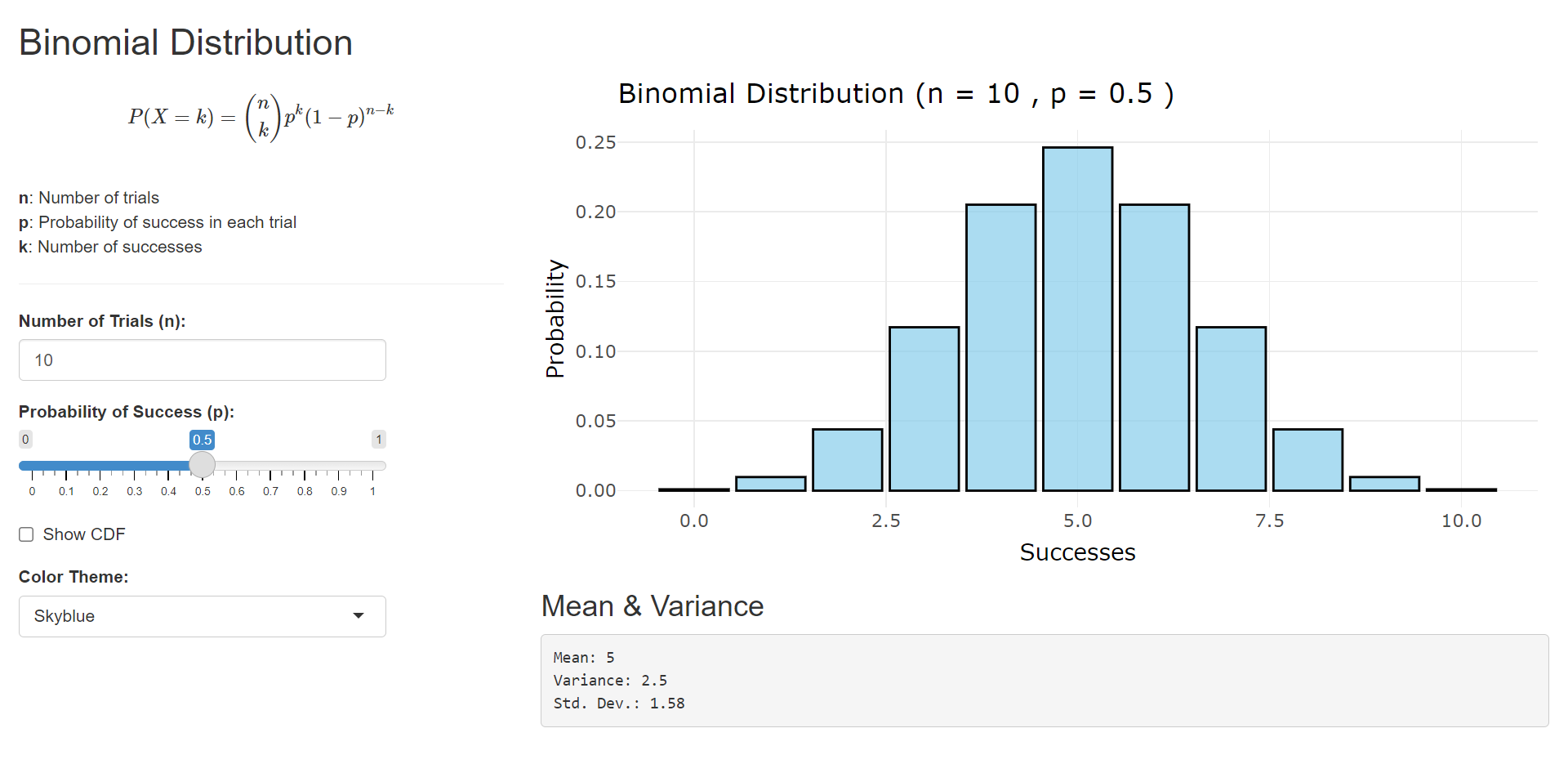

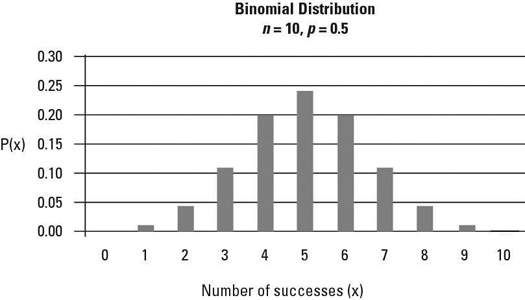

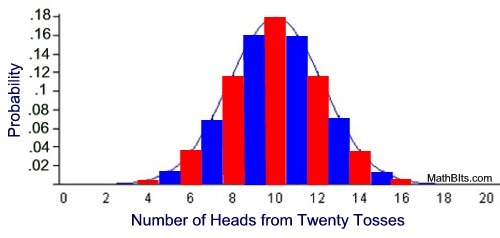

Binomial Distribution

The binomial distribution is a discrete probability distribution that describes the number of successes in a fixed number of independent trials.

Conditions for binomial distribution:

- Fixed number of trials

- Each trial has two outcomes (success or failure)

- Probability of success is the same in every trial

- Trials are independent of one another

The probability formula is:

P(X = k) = (nCk) p^k (1 − p)^(n − k)

Where:

n = number of trials

k = number of successes

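p = probability of success in a single trial

nCk = n! / (k! (n − k)!), the number of ways to choose which k of the n trials are successes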

Example:

If a coin is tossed 5 times, the binomial distribution can determine the probability of obtaining exactly 3 heads.
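A minimal sketch of this calculation, assuming a fair coin (p = 0.5). The function name `binomial_pmf` is an illustrative choice; `math.comb` computes nCk.

```python
import math


def binomial_pmf(k: int, n: int, p: float) -> float:
    """P(X = k): probability of exactly k successes in n independent trials."""
    return math.comb(n, k) * p**k * (1 - p) ** (n - k)


# Exactly 3 heads in 5 tosses of a fair coin: 10 * (1/2)^5 = 10/32.
prob_three_heads = binomial_pmf(3, 5, 0.5)
print(prob_three_heads)

# The probabilities over k = 0..5 should add up to 1.
total = sum(binomial_pmf(k, 5, 0.5) for k in range(6))
```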

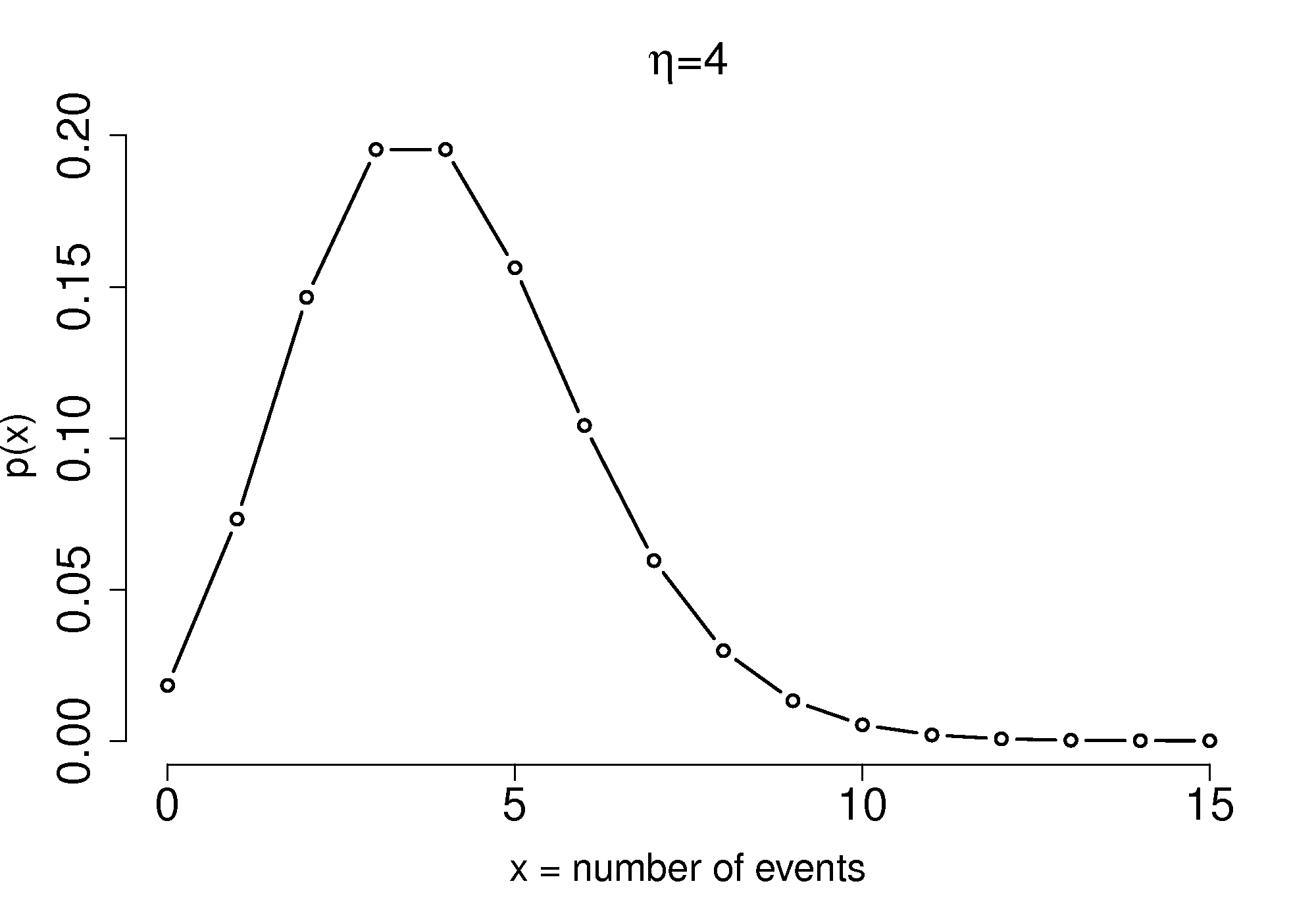

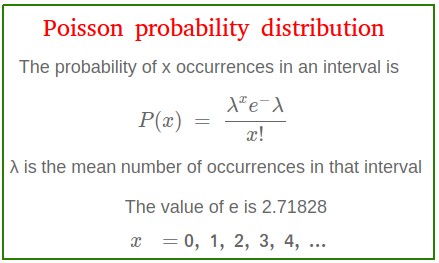

Poisson Distribution

The Poisson distribution models the number of events occurring in a fixed interval of time or space.

Examples include:

- number of phone calls received in an hour

- number of accidents on a road

- number of typing errors on a page

The formula for Poisson distribution is:

P(X = k) = (λ^k e^(−λ)) / k!

Where:

λ = average number of events in the interval

k = number of occurrences

The Poisson distribution is commonly used for modeling rare events.
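The formula can be evaluated as in the sketch below. The rate λ = 3 (for instance, an average of 3 phone calls per hour) is a hypothetical value chosen for illustration, and `poisson_pmf` is an assumed helper name.

```python
import math


def poisson_pmf(k: int, lam: float) -> float:
    """P(X = k) when events occur at an average of lam per interval."""
    return lam**k * math.exp(-lam) / math.factorial(k)


lam = 3.0  # hypothetical average: 3 calls per hour

# Probability of receiving no calls in an hour: e^(-3).
prob_no_calls = poisson_pmf(0, lam)

# Probability of at most 2 calls: P(0) + P(1) + P(2).
prob_at_most_two = sum(poisson_pmf(k, lam) for k in range(3))

print(prob_no_calls, prob_at_most_two)
```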

Uniform Distribution

The uniform distribution occurs when all outcomes are equally likely.

Example:

Rolling a fair die.

Each number from 1 to 6 has equal probability.

In a continuous uniform distribution on an interval from a to b, the probability density is constant across the interval and equal to 1 / (b − a).

Uniform distributions are used in simulations and computer algorithms.
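Both the discrete and the continuous case can be sketched in a few lines. The interval [0, 10] and the helper names `uniform_pdf` and `uniform_prob` are assumptions made for this illustration.

```python
from fractions import Fraction

# Discrete case: a fair die assigns probability 1/6 to each face.
die_pmf = {face: Fraction(1, 6) for face in range(1, 7)}


def uniform_pdf(x: float, a: float, b: float) -> float:
    """Density of a continuous uniform distribution on [a, b]."""
    return 1.0 / (b - a) if a <= x <= b else 0.0


def uniform_prob(c: float, d: float, a: float, b: float) -> float:
    """P(c <= X <= d) for X uniform on [a, b]: overlap length / (b - a)."""
    low, high = max(a, c), min(b, d)
    return max(0.0, high - low) / (b - a)


# Hypothetical interval [0, 10]: the density is 0.1 everywhere inside it.
density = uniform_pdf(2.0, 0.0, 10.0)
prob_2_to_5 = uniform_prob(2.0, 5.0, 0.0, 10.0)

print(density, prob_2_to_5)
```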

Exponential Distribution

The exponential distribution models the time between events in a Poisson process.

Examples include:

- time between arrivals of customers

- time until a machine fails

- waiting time for a bus

The probability density function is:

f(x) = λ e^(−λx), for x ≥ 0

Where λ is the rate parameter, the average number of events per unit of time. The mean waiting time is 1/λ.

This distribution is widely used in reliability analysis and queueing theory.
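As a small worked sketch, the density and the corresponding cumulative probability P(X ≤ x) = 1 − e^(−λx) can be computed as follows. The rate λ = 0.5 (for example, one customer arrival every 2 minutes on average) is a hypothetical value, and the function names are assumptions.

```python
import math


def exponential_pdf(x: float, lam: float) -> float:
    """Density of the exponential distribution with rate lam."""
    return lam * math.exp(-lam * x) if x >= 0 else 0.0


def exponential_cdf(x: float, lam: float) -> float:
    """P(X <= x): probability that the waiting time is at most x."""
    return 1.0 - math.exp(-lam * x) if x >= 0 else 0.0


lam = 0.5  # hypothetical rate: 0.5 arrivals per minute

# Probability that the next arrival occurs within 2 minutes: 1 - e^(-1).
prob_within_2 = exponential_cdf(2.0, lam)

# Mean waiting time is 1 / lam.
mean_wait = 1.0 / lam

print(prob_within_2, mean_wait)
```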

Applications of Probability Distributions

Probability distributions are used in many practical applications.

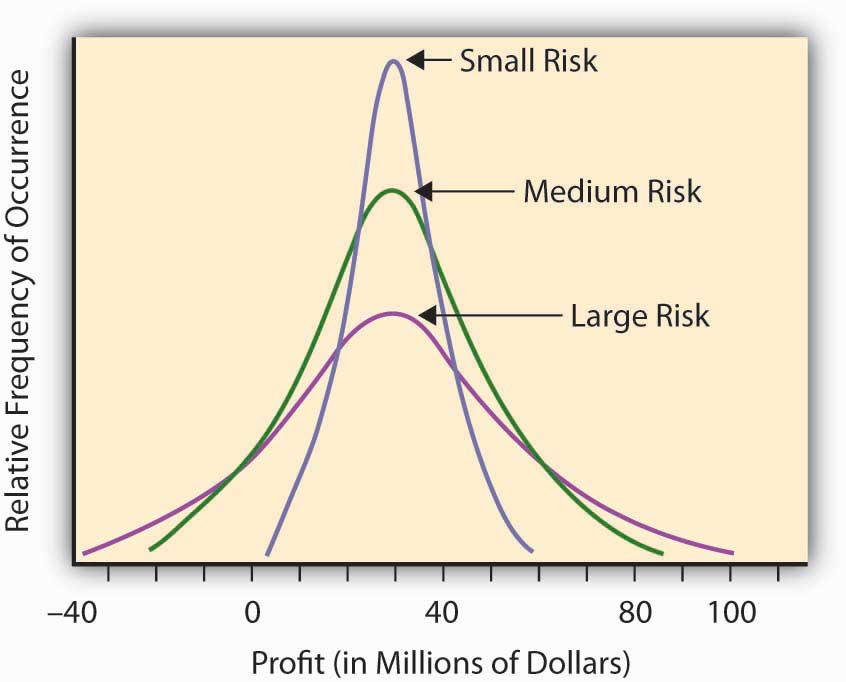

Finance

Used to model stock market returns and financial risk.

Engineering

Used in reliability analysis and quality control.

Medicine

Used to analyze clinical trials and disease spread.

Data Science

Used to model data and quantify uncertainty in machine learning algorithms.

Economics

Used to study income distribution and market behavior.

These applications highlight the importance of probability distributions.

Importance of Probability Distributions

Probability distributions play a central role in statistics and probability theory.

They help:

- model uncertainty

- analyze random phenomena

- make predictions

- support statistical inference

Many advanced statistical methods depend on probability distributions.

Understanding them allows researchers to interpret data more effectively.

Conclusion

Probability distributions provide mathematical models that describe how random variables behave. They help assign probabilities to possible outcomes and explain how data values are distributed.

There are two main categories of probability distributions: discrete and continuous. Important distributions include binomial, Poisson, normal, uniform, and exponential distributions.

These distributions are essential tools in mathematics, statistics, science, engineering, economics, and data analysis. By understanding probability distributions, researchers can analyze uncertainty, model real-world phenomena, and make informed decisions.

Probability distributions form the foundation of modern statistics and are crucial for studying randomness and variability in data.