Introduction to Probability

Probability is a branch of mathematics that deals with uncertainty and the likelihood of events occurring. It provides a numerical measure that describes how likely an event is to happen in a random experiment. Probability plays an essential role in statistics, decision making, risk assessment, scientific research, and many real-world applications.

In everyday life, people encounter uncertainty frequently. For example, predicting weather conditions, determining the chance of winning a game, or estimating the likelihood of a disease occurring are all situations involving probability. Mathematical probability allows us to analyze such situations logically and quantitatively.

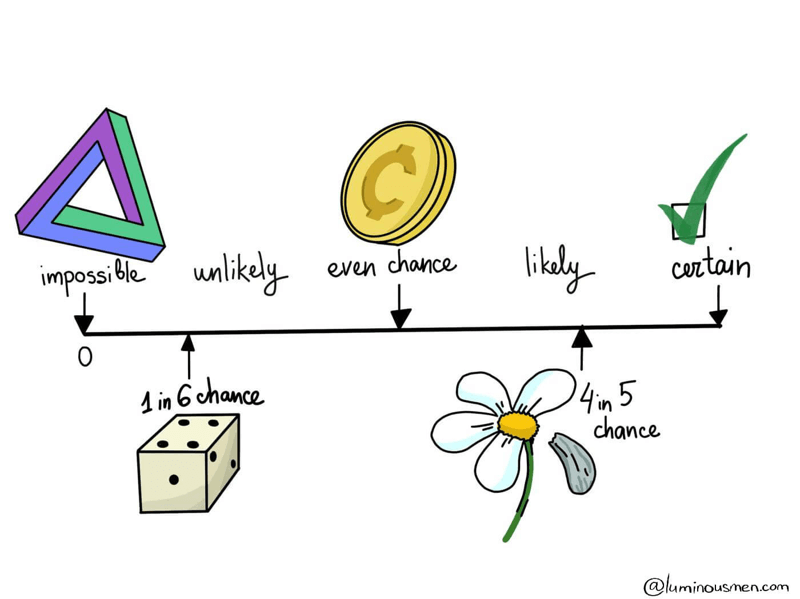

Probability values range between 0 and 1, where:

- 0 represents an impossible event

- 1 represents a certain event

A probability strictly between 0 and 1 describes an event that may or may not occur; the closer the value is to 1, the more likely the event.

The study of probability began in the seventeenth century when mathematicians started analyzing games of chance. Today, probability theory has become an essential component of mathematics, statistics, economics, engineering, and computer science.

Understanding basic probability rules helps students analyze random events, interpret data, and make predictions about uncertain outcomes.

Random Experiments and Sample Space

Random Experiment

A random experiment is an experiment or process whose outcome cannot be predicted with certainty.

Examples include:

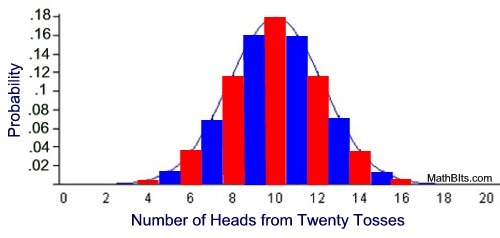

- tossing a coin

- rolling a die

- drawing a card from a deck

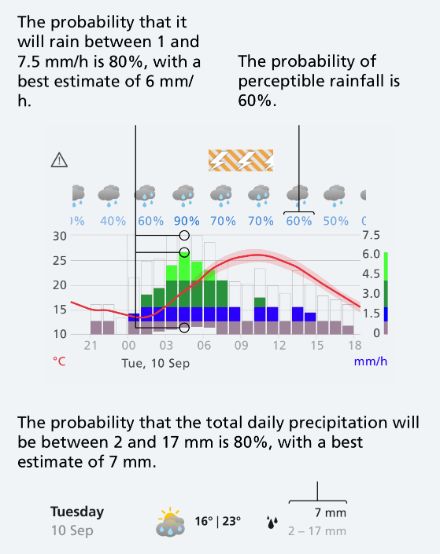

- measuring rainfall in a city

Even though the exact outcome is unknown, the possible outcomes are known.

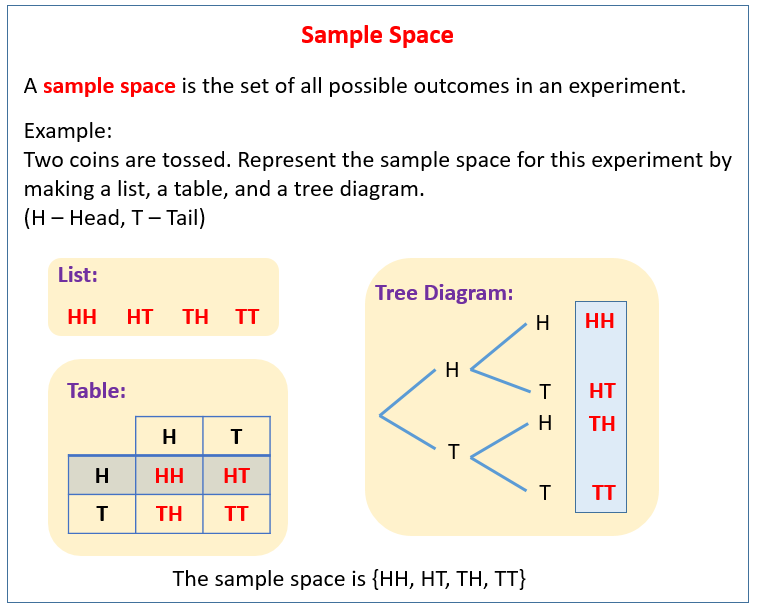

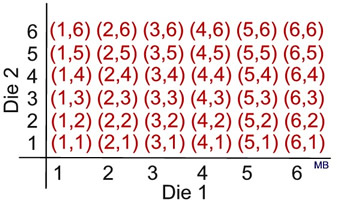

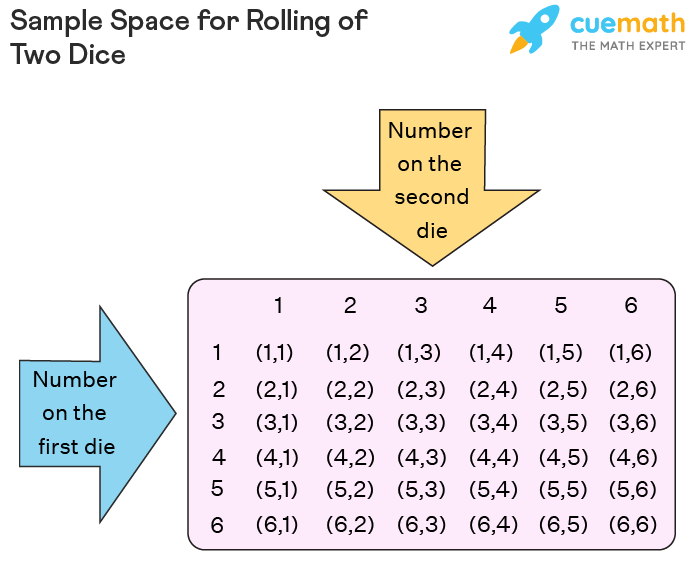

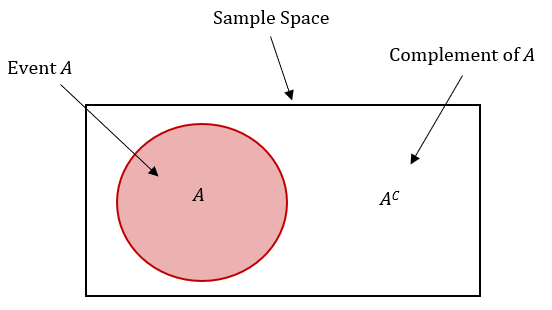

Sample Space

The sample space is the set of all possible outcomes of a random experiment.

Example:

When tossing a coin:

S = {H, T}

Where:

H = Head

T = Tail

When rolling a six-sided die:

S = {1, 2, 3, 4, 5, 6}

The sample space forms the basis for calculating probabilities.

Event

An event is a subset of the sample space.

Example:

Event A: Getting an even number when rolling a die.

A = {2, 4, 6}

An event is the set of outcomes we are interested in analyzing; it occurs whenever the observed outcome belongs to that set.
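The definitions above can be sketched with Python sets. This is only an illustration; the variable names `sample_space` and `event_a` are chosen here and do not come from the text.

```python
# Sample space for one roll of a six-sided die
sample_space = {1, 2, 3, 4, 5, 6}

# Event A: the roll is an even number
event_a = {outcome for outcome in sample_space if outcome % 2 == 0}

# An event is a subset of the sample space
is_subset = event_a <= sample_space

print(sorted(event_a))  # [2, 4, 6]
print(is_subset)        # True
```

Representing events as sets means unions, intersections, and complements of events map directly onto set operations.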

Classical Definition of Probability

The classical definition of probability is based on equally likely outcomes.

If an event A contains m of the n equally likely outcomes in the sample space, the probability of event A is:

P(A) = m / n

Where:

- m = number of favorable outcomes

- n = total number of possible outcomes

Example:

Consider rolling a die.

Probability of getting a 3:

P(3) = 1 / 6

Probability of getting an even number:

Even numbers = {2, 4, 6}

P(Even) = 3 / 6 = 1 / 2

This formula applies only when all outcomes are equally likely. For a loaded die, for example, simply counting outcomes would give the wrong answer.
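As a minimal sketch, the classical formula can be computed by counting set elements. The helper name `classical_probability` is hypothetical, and the function assumes every outcome in the sample space is equally likely. `Fraction` from the standard library keeps the results exact.

```python
from fractions import Fraction


def classical_probability(event, sample_space):
    """Return P(A) = m / n, assuming all outcomes are equally likely."""
    if not event <= sample_space:
        raise ValueError("event must be a subset of the sample space")
    return Fraction(len(event), len(sample_space))


die = {1, 2, 3, 4, 5, 6}

p_three = classical_probability({3}, die)
p_even = classical_probability({2, 4, 6}, die)

print(p_three)  # 1/6
print(p_even)   # 1/2
```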

Basic Probability Rules

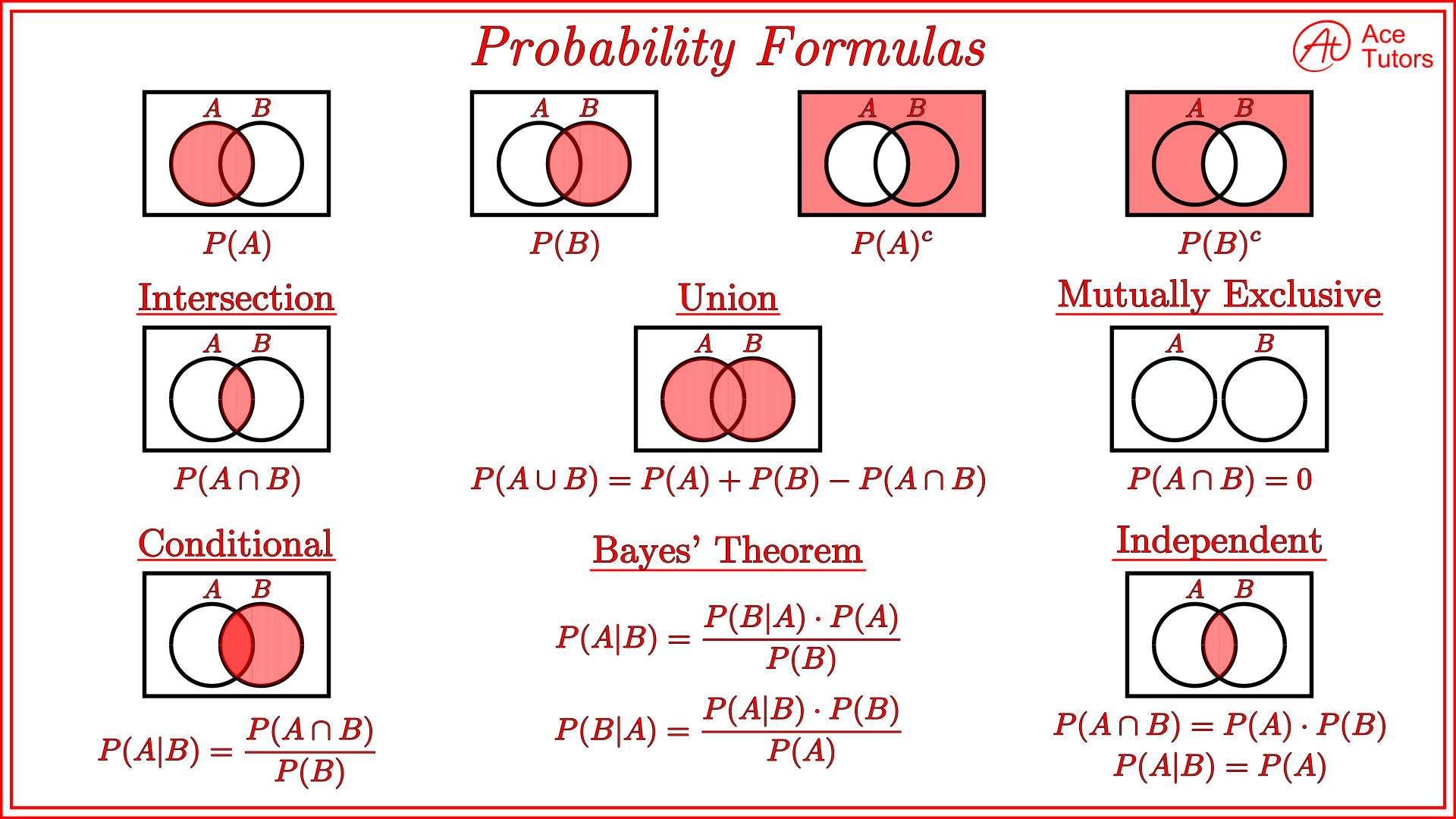

Probability theory is governed by several fundamental rules that help calculate probabilities for different types of events.

These rules form the foundation of probability calculations.

The main probability rules include:

- Range rule

- Complement rule

- Addition rule

- Multiplication rule

- Conditional probability rule

Each rule helps solve different types of probability problems.

Rule 1: Range Rule of Probability

The probability of any event must lie between 0 and 1.

Mathematically:

0 ≤ P(A) ≤ 1

Special cases:

Impossible event:

P(A) = 0

Certain event:

P(A) = 1

Examples:

Probability that the sun rises tomorrow ≈ 1.

Probability of drawing a red ball from a bag with only blue balls = 0.

This rule ensures probabilities remain within valid limits.

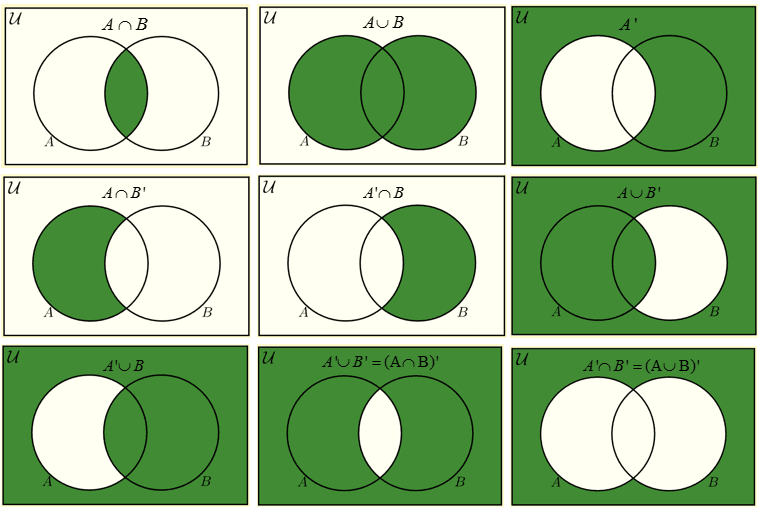

Rule 2: Complement Rule

The complement of an event represents outcomes where the event does not occur.

If event A occurs with probability P(A), its complement is denoted by A’. Because exactly one of A and A’ must occur, their probabilities sum to 1.

Complement rule:

P(A’) = 1 − P(A)

Example:

Probability of getting a head when tossing a coin:

P(H) = 1/2

Probability of not getting a head:

P(H’) = 1 − 1/2 = 1/2

Another example:

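If the probability of rain tomorrow is 0.3, the probability that it will not rain is:

1 − 0.3 = 0.7

The complement rule is most useful when the probability that an event does not occur is easier to calculate than the probability that it does.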
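A small sketch of the complement rule follows. The function name `complement` is an assumption made for this example; it also enforces the range rule by rejecting values outside 0 to 1. Fractions are used so the arithmetic stays exact.

```python
from fractions import Fraction


def complement(p):
    """Return P(A') = 1 - P(A) for a valid probability p."""
    if not 0 <= p <= 1:
        raise ValueError("a probability must lie between 0 and 1")
    return 1 - p


p_not_head = complement(Fraction(1, 2))   # coin toss: not a head
p_no_rain = complement(Fraction(3, 10))   # rain probability 0.3

print(p_not_head)  # 1/2
print(p_no_rain)   # 7/10
```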

Rule 3: Addition Rule of Probability

The addition rule is used to calculate the probability that at least one of two events occurs.

For Mutually Exclusive Events

If events A and B cannot occur simultaneously:

P(A ∪ B) = P(A) + P(B)

Example:

Rolling a die.

Event A: Getting 1

Event B: Getting 2

P(A ∪ B) = 1/6 + 1/6 = 1/3

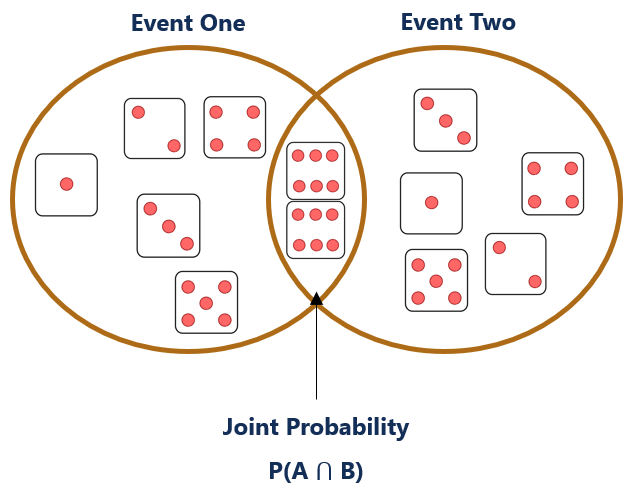

For Non-Mutually Exclusive Events

If events A and B can occur simultaneously:

P(A ∪ B) = P(A) + P(B) − P(A ∩ B)

Where:

A ∩ B represents the intersection of events.

This rule avoids double-counting shared outcomes.
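Both forms of the addition rule can be checked by counting outcomes on a die. The sketch below is illustrative only: the helper `prob` and the overlapping events "even number" and "greater than 3" are chosen for this example and are not taken from the text. It assumes a fair six-sided die.

```python
from fractions import Fraction

die = {1, 2, 3, 4, 5, 6}


def prob(event):
    """Classical probability on a fair die (equally likely outcomes)."""
    return Fraction(len(event), len(die))


# Mutually exclusive events: getting 1, getting 2
a, b = {1}, {2}
p_exclusive = prob(a) + prob(b)

# Overlapping events: even number, number greater than 3
even, high = {2, 4, 6}, {4, 5, 6}
p_overlap = prob(even) + prob(high) - prob(even & high)

print(p_exclusive)  # 1/3
print(p_overlap)    # 2/3

# Cross-check against counting the union directly
print(p_overlap == prob(even | high))  # True
```

Without subtracting P(A ∩ B), the outcomes 4 and 6 would be counted twice and the result would be 1, which is clearly too large.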

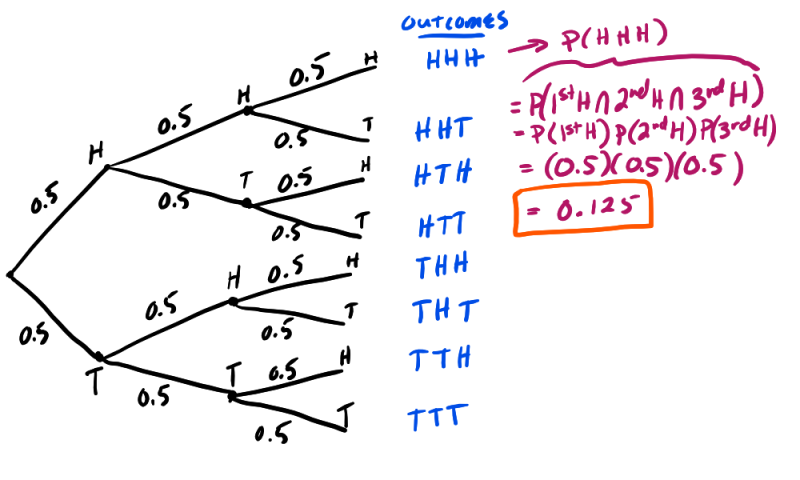

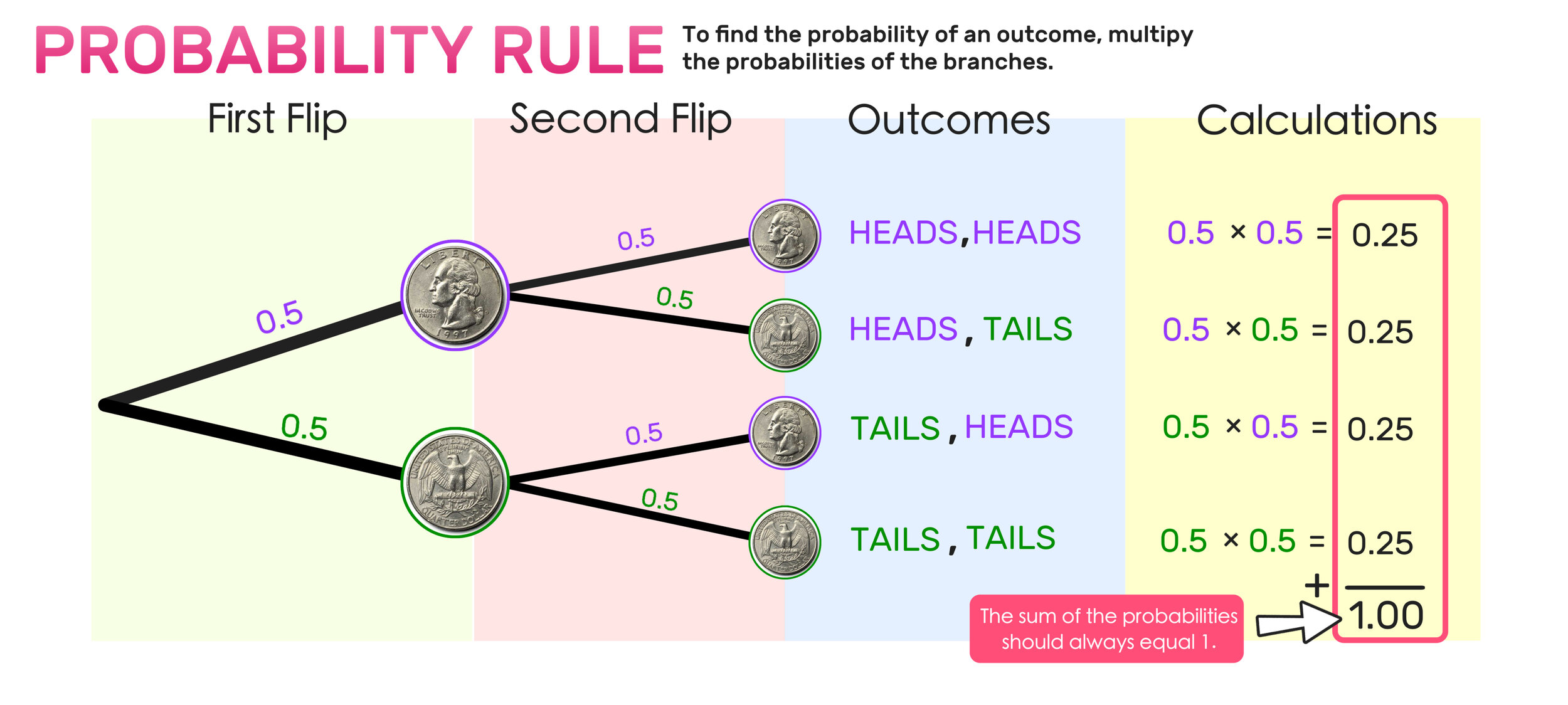

Rule 4: Multiplication Rule of Probability

The multiplication rule calculates the probability that two events occur together.

Independent Events

Events are independent if the occurrence of one does not affect the probability of the other.

Formula:

P(A ∩ B) = P(A) × P(B)

Example:

Tossing two fair coins.

Probability of two heads:

P(HH) = 1/2 × 1/2 = 1/4
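The product formula can be verified by listing all outcomes of two tosses and counting. This is a sketch under the assumption that both coins are fair; the names `first_heads` and `second_heads` are introduced here for illustration.

```python
from fractions import Fraction
from itertools import product

# All ordered outcomes of two coin tosses: (H, H), (H, T), (T, H), (T, T)
space = set(product("HT", repeat=2))


def prob(event):
    """Classical probability over the two-toss sample space."""
    return Fraction(len(event), len(space))


first_heads = {o for o in space if o[0] == "H"}
second_heads = {o for o in space if o[1] == "H"}

p_both = prob(first_heads & second_heads)
p_product = prob(first_heads) * prob(second_heads)

print(p_both)               # 1/4
print(p_both == p_product)  # True
```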

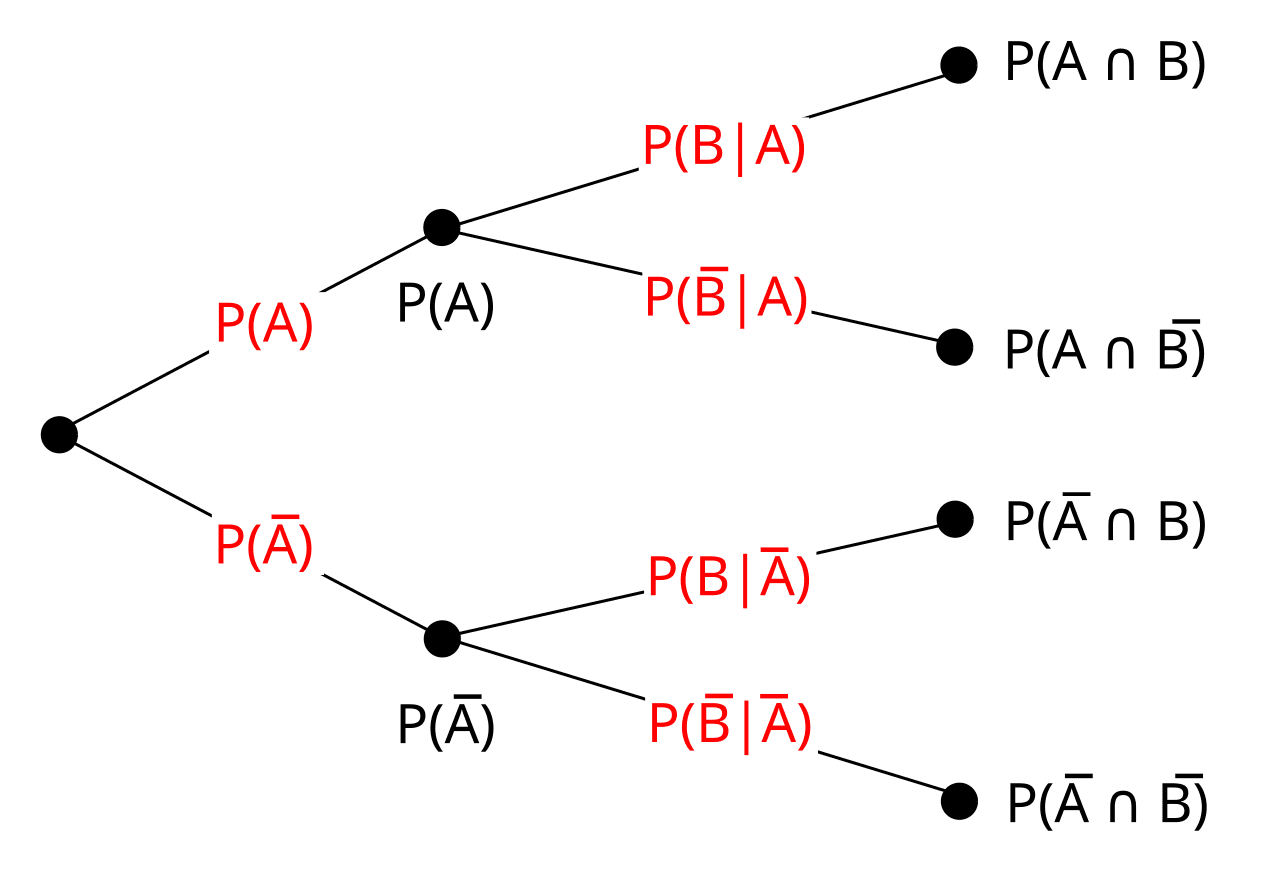

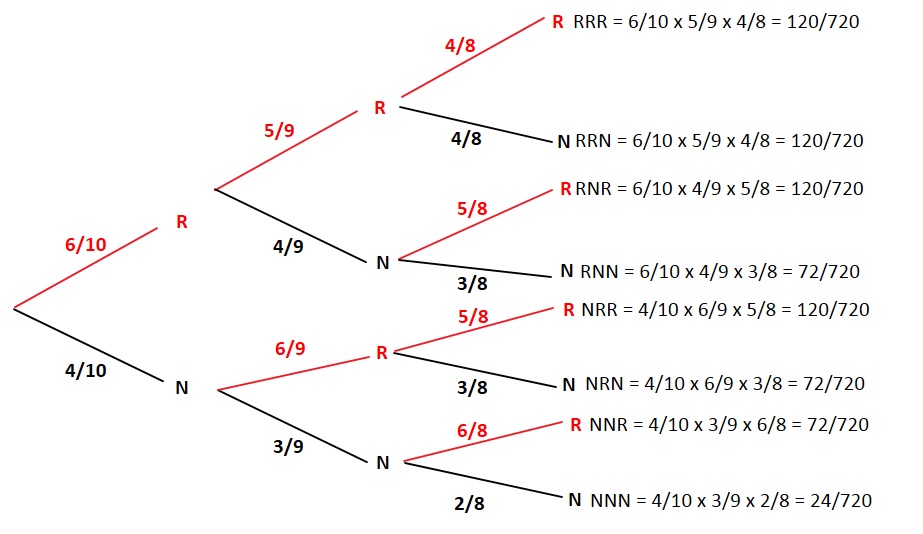

Dependent Events

Events are dependent if one event affects the probability of the other.

Formula:

P(A ∩ B) = P(A) × P(B|A)

Where:

P(B|A) = probability of B given that A occurred.
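As an illustration of dependent events, consider drawing two cards without replacement and asking for the probability that both are aces. This scenario is not from the text above; it assumes a standard 52-card deck, and the result of the formula is cross-checked by enumerating every ordered pair of cards.

```python
from fractions import Fraction
from itertools import permutations

ranks = ["A", "2", "3", "4", "5", "6", "7", "8", "9", "10", "J", "Q", "K"]
suits = ["hearts", "diamonds", "clubs", "spades"]
deck = [(rank, suit) for rank in ranks for suit in suits]

# Formula: P(A and B) = P(A) * P(B|A)
p_first_ace = Fraction(4, 52)          # 4 aces among 52 cards
p_second_given_first = Fraction(3, 51) # 3 aces left among 51 cards
p_formula = p_first_ace * p_second_given_first

# Cross-check: count ordered draws of two distinct cards that are both aces
draws = list(permutations(deck, 2))
both_aces = sum(1 for c1, c2 in draws if c1[0] == "A" and c2[0] == "A")
p_counted = Fraction(both_aces, len(draws))

print(p_formula)               # 1/221
print(p_formula == p_counted)  # True
```

The second factor is 3/51 rather than 4/52 because removing the first ace changes what remains in the deck, which is exactly what makes the events dependent.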

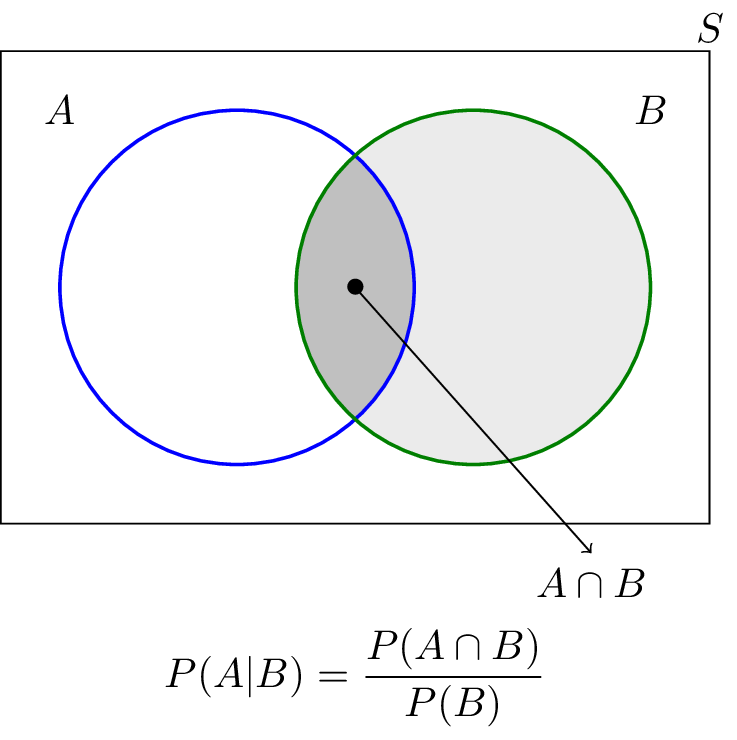

Rule 5: Conditional Probability

Conditional probability measures the probability of an event given that another event has already occurred.

Formula:

P(A|B) = P(A ∩ B) / P(B), where P(B) > 0

Example:

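Suppose a card is drawn from a standard 52-card deck.

Event A: Card is a king

Event B: Card is a face card (jack, queen, or king)

Every king is a face card, so A ∩ B = A and:

P(A|B) = P(A ∩ B) / P(B) = (4/52) / (12/52)

= number of kings / number of face cards

= 4 / 12 = 1/3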

Conditional probability is widely used in statistics, machine learning, and decision-making.
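The king and face card example can be reproduced by counting cards. The sketch below assumes a standard 52-card deck in which jacks, queens, and kings are the face cards; the function name `conditional` is hypothetical.

```python
from fractions import Fraction

ranks = ["A", "2", "3", "4", "5", "6", "7", "8", "9", "10", "J", "Q", "K"]
suits = ["hearts", "diamonds", "clubs", "spades"]
deck = {(rank, suit) for rank in ranks for suit in suits}


def conditional(a, b, space):
    """Return P(A|B) = P(A and B) / P(B) for equally likely outcomes."""
    p_b = Fraction(len(b), len(space))
    if p_b == 0:
        raise ValueError("P(B) must be greater than 0")
    p_a_and_b = Fraction(len(a & b), len(space))
    return p_a_and_b / p_b


kings = {card for card in deck if card[0] == "K"}
face_cards = {card for card in deck if card[0] in {"J", "Q", "K"}}

p_king_given_face = conditional(kings, face_cards, deck)
print(p_king_given_face)  # 1/3
```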

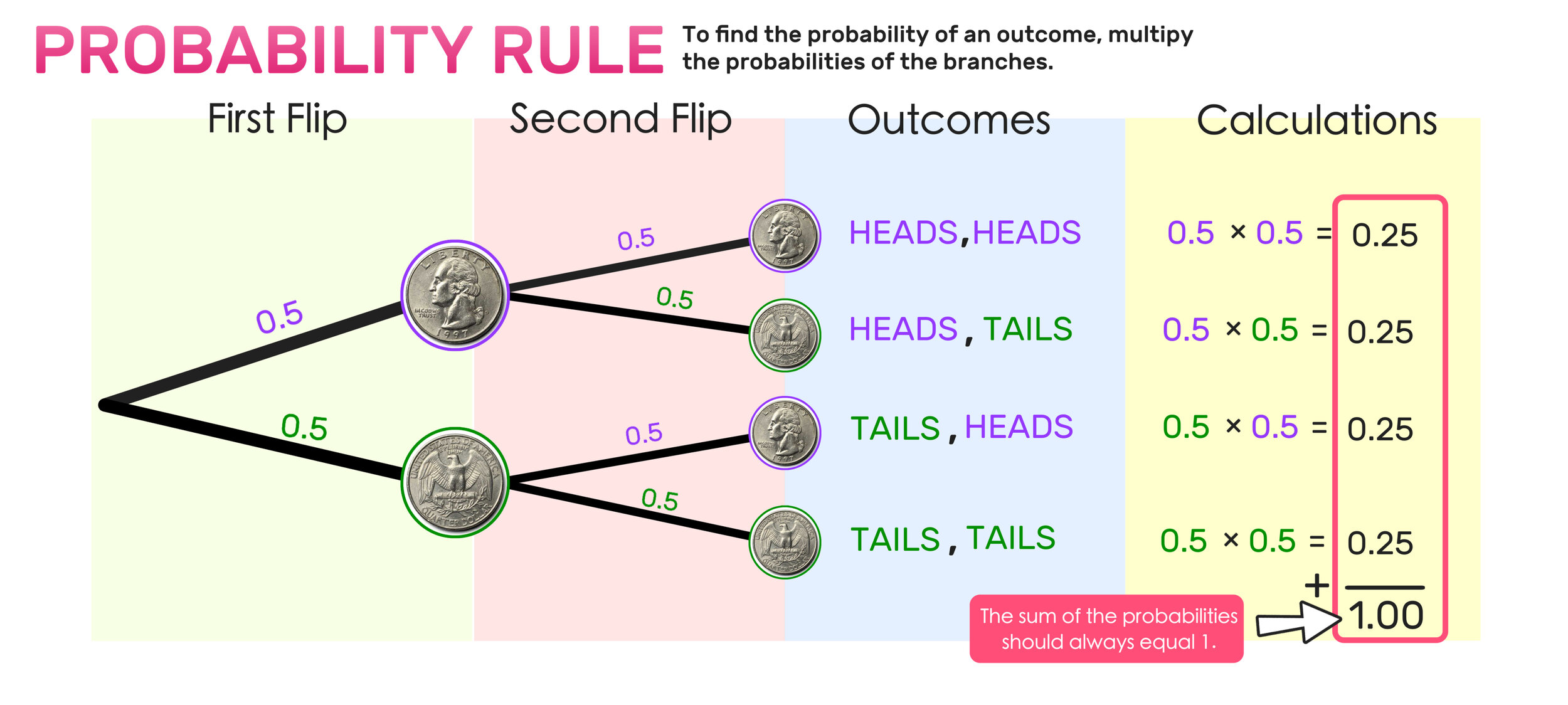

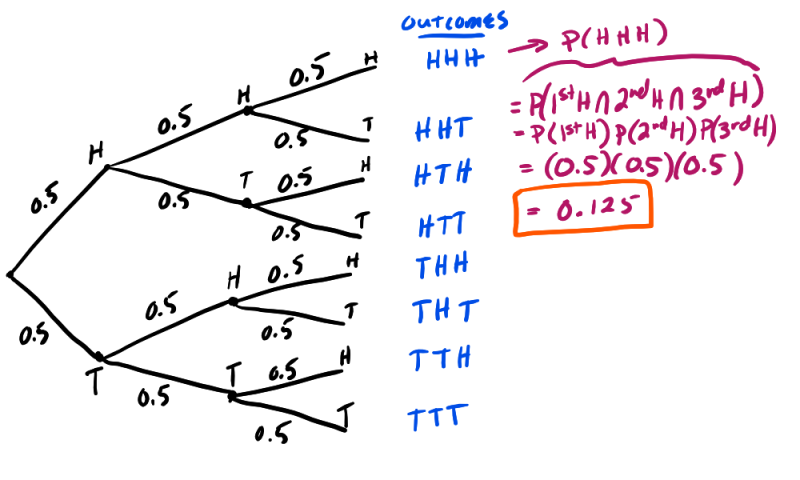

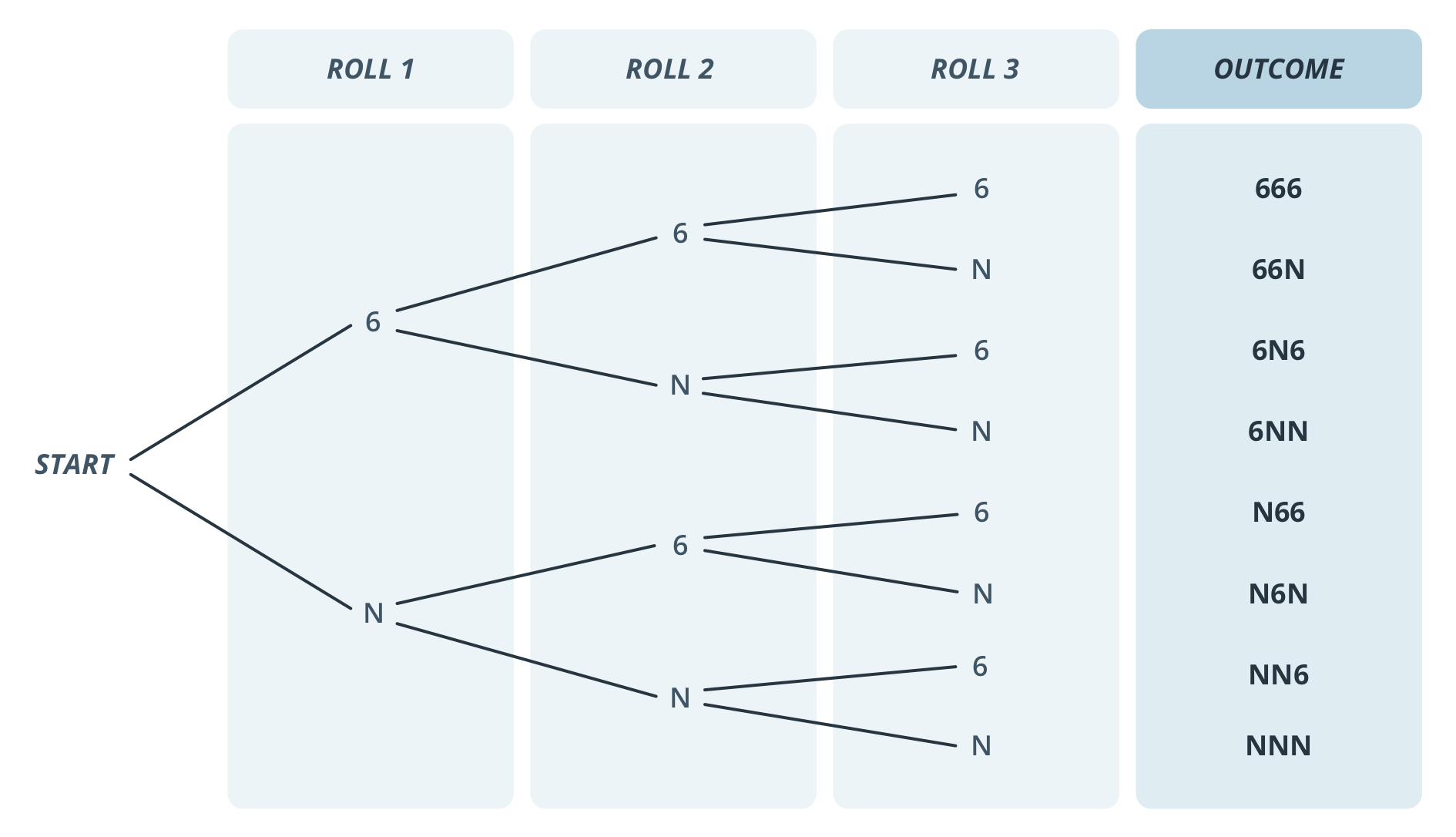

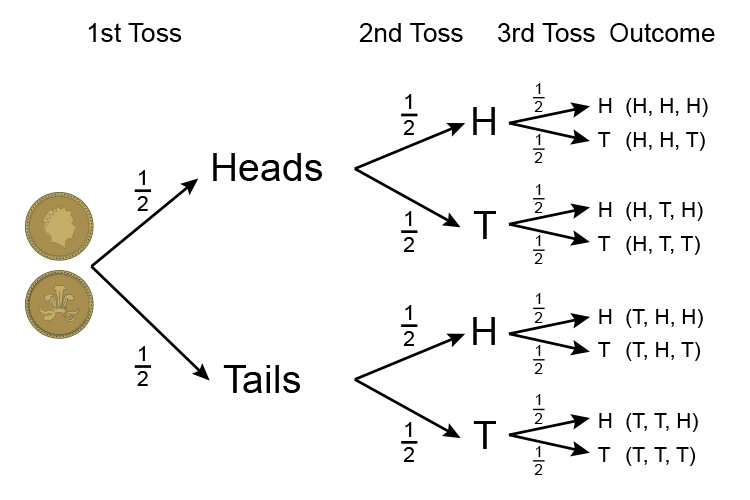

Probability Using Tree Diagrams

Tree diagrams provide a visual way to analyze probability experiments involving multiple stages.

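Each branch represents a possible outcome of one stage. The probability of a complete path is the product of the probabilities along its branches.

Example:

Two tosses of a fair coin produce the outcomes:

HH, HT, TH, TT

Each outcome has probability:

1/2 × 1/2 = 1/4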

Tree diagrams make probability calculations easier to understand.
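A tree diagram can be mimicked in code by multiplying branch probabilities along each path. This is a rough sketch assuming a fair coin; the dictionary `branch` is an illustrative name, and its values could be changed to model a biased coin.

```python
from fractions import Fraction
from itertools import product

# Probability attached to each branch at every stage (fair coin assumed)
branch = {"H": Fraction(1, 2), "T": Fraction(1, 2)}

# Each path through the two-stage tree gets the product of its branches
paths = {}
for first, second in product(branch, repeat=2):
    paths[first + second] = branch[first] * branch[second]

for outcome, probability in paths.items():
    print(outcome, probability)
# HH 1/4
# HT 1/4
# TH 1/4
# TT 1/4

# The probabilities of all paths add up to 1
total = sum(paths.values())
print(total)  # 1
```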

Applications of Basic Probability Rules

Basic probability rules are used in many practical applications.

Weather Forecasting

Meteorologists use probability to predict weather conditions.

Insurance

Insurance companies estimate risks using probability.

Medical Diagnosis

Doctors use probability models to assess disease likelihood.

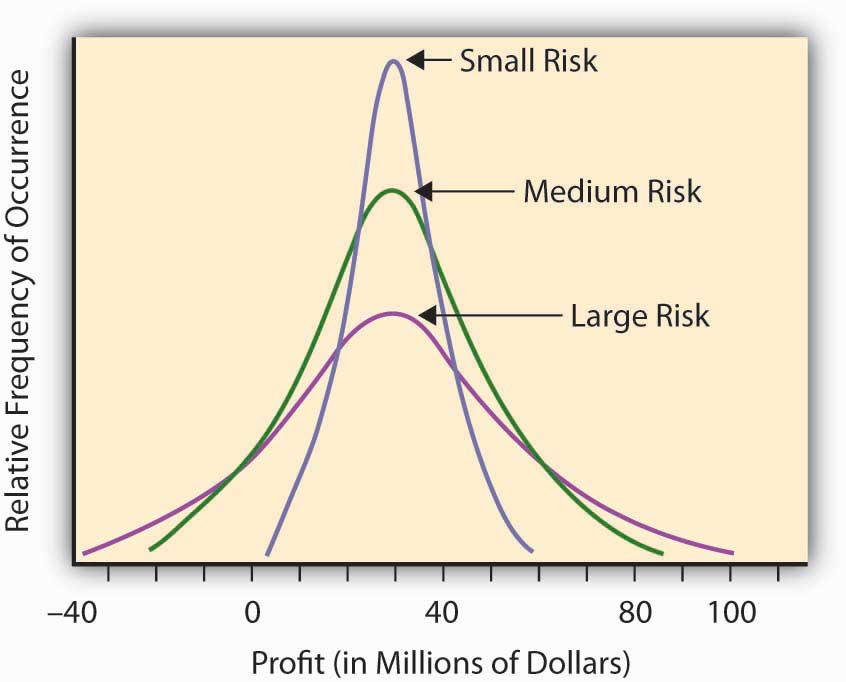

Finance

Investors analyze risk and return using probability theory.

Artificial Intelligence

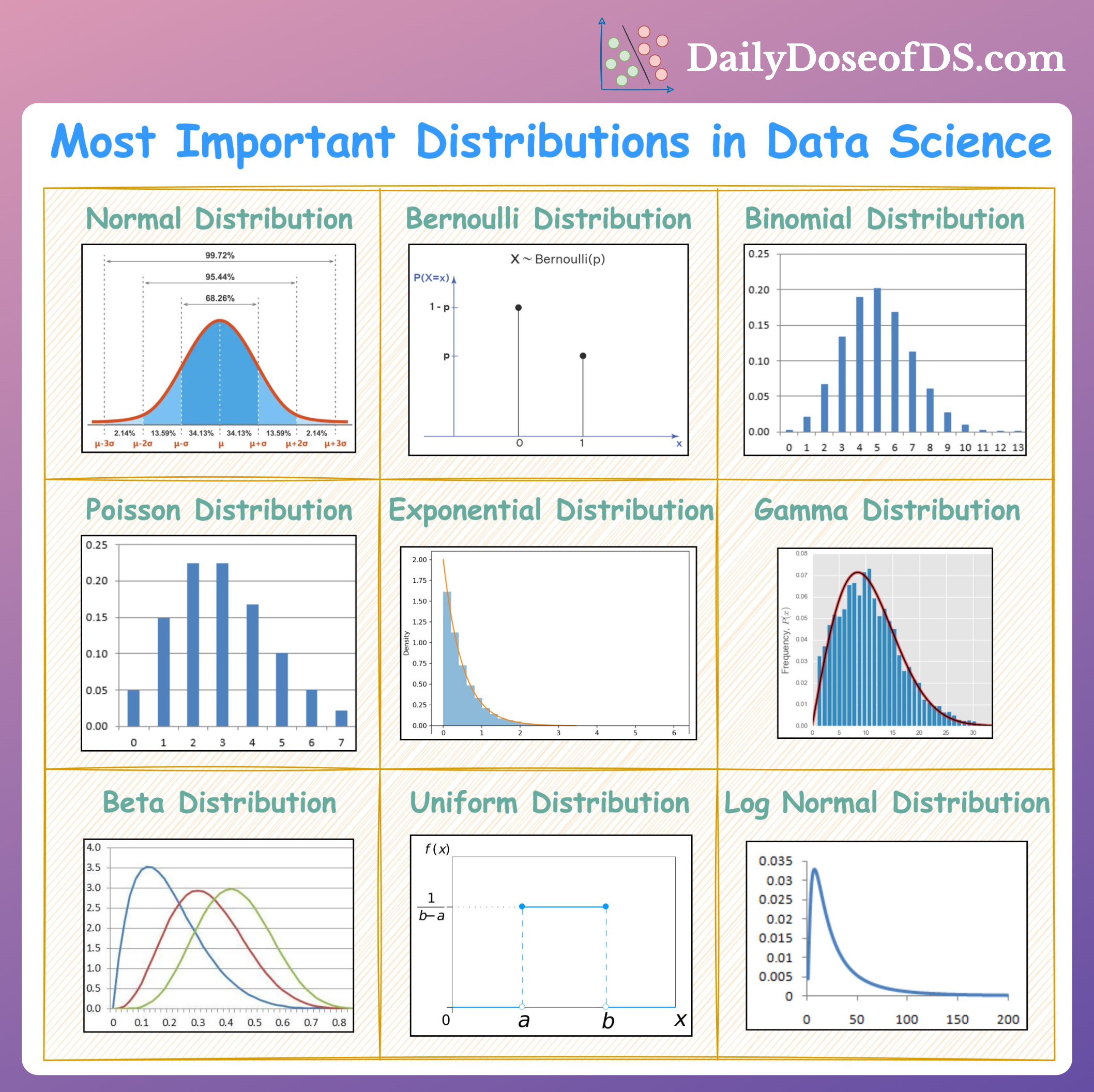

Machine learning algorithms rely on probability models.

These applications demonstrate the importance of probability in decision-making.

Importance of Basic Probability Rules

Basic probability rules provide a framework for analyzing uncertain events.

They help:

- calculate likelihood of outcomes

- analyze random experiments

- develop statistical models

- support decision making under uncertainty

Understanding these rules forms the foundation for advanced topics in probability theory and statistics.

Conclusion

Basic probability rules are essential principles used to analyze random events and calculate the likelihood of outcomes. These rules include the range rule, complement rule, addition rule, multiplication rule, and conditional probability rule.

By applying these rules, mathematicians and statisticians can solve complex probability problems and interpret uncertain situations effectively.

Probability theory plays a critical role in many fields including science, economics, engineering, data science, and artificial intelligence. Mastering the basic rules of probability helps students develop logical thinking and analytical skills needed for advanced statistical analysis.

Understanding probability not only improves mathematical knowledge but also helps individuals make better decisions in everyday life.